In this video, Eli, the Computer Guy, demonstrates a Raspberry Pi 5 project that combines speech recognition, IBM’s Granite large language model (run locally through the Ollama framework), and the pyttsx3 text-to-speech library, so the device can hold spoken conversations with users. He shows the system in action and highlights how advanced AI features can run on affordable hardware with only a small amount of Python code.

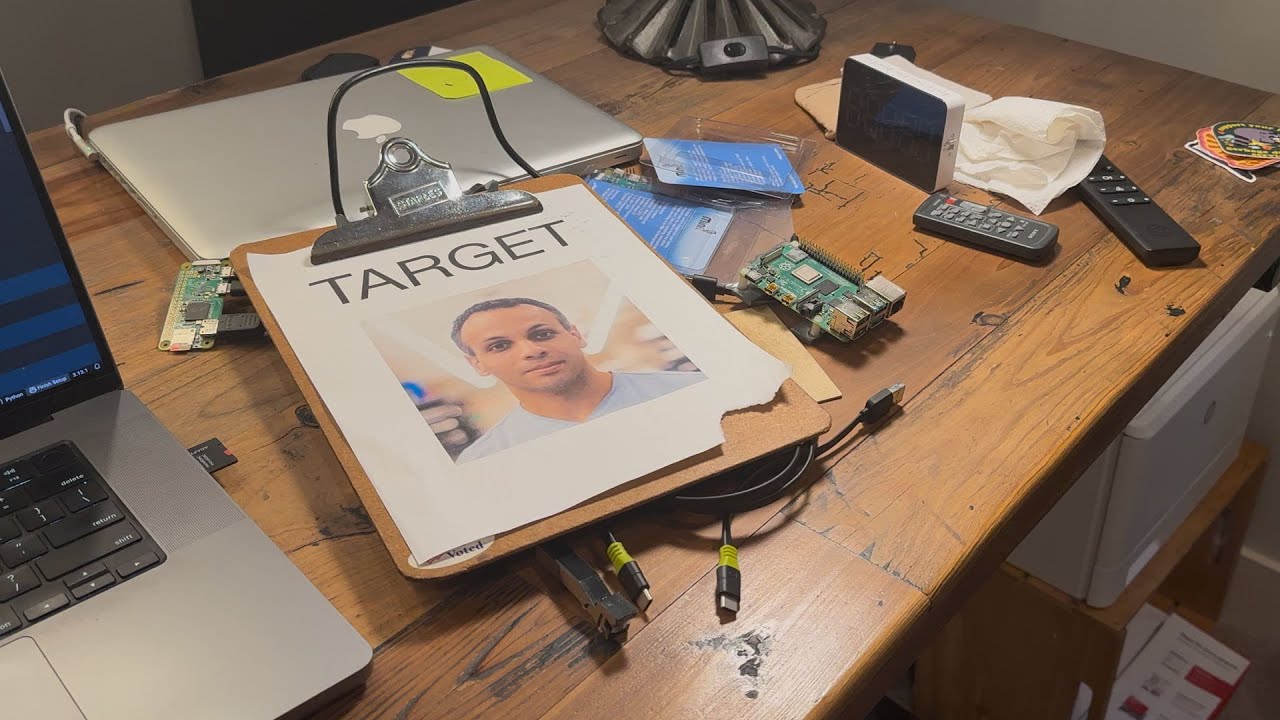

In this episode of “Stupid Geek Tricks,” Eli, the Computer Guy, demonstrates his latest AI project using a Raspberry Pi 5. Users speak into a small microphone connected to the Raspberry Pi, and their spoken words are converted into text. This text is sent to the Ollama framework, which runs IBM’s Granite 3.3 2B large language model (LLM) locally on the device. The model processes the input and generates a response, which is displayed on the screen.
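The step where the transcribed text is handed to the local model can be sketched with Ollama’s REST API, using only the Python standard library. This is a minimal sketch under stated assumptions, not Eli’s code: `http://localhost:11434/api/generate` is Ollama’s default local endpoint, but the model tag `granite3.3:2b` and the helper names `build_request` and `ask_granite` are hypothetical. His script may well use the `ollama` Python package instead.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "granite3.3:2b"  # assumed model tag; check `ollama list` on your Pi


def build_request(prompt: str, model: str = MODEL) -> bytes:
    """Encode a non-streaming generate request as JSON bytes (hypothetical helper)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(payload).encode("utf-8")


def ask_granite(prompt: str, url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send the prompt to the locally running model and return its full text reply."""
    req = urllib.request.Request(
        url,
        data=build_request(prompt),  # supplying data makes this a POST request
        headers={"Content-Type": "application/json"},
    )
    # A generous timeout, since a small model on a Pi can take a while to answer.
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama returns one JSON object; "response" holds the text.
    return body["response"]
```

With Ollama running and the model pulled, `print(ask_granite("Who was George Washington?"))` prints the model’s answer. Setting `"stream": False` keeps the sketch simple at the cost of waiting for the whole reply before anything is shown or spoken.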

The new feature in this episode is text-to-speech: when the AI generates a response, the Raspberry Pi now reads the answer aloud using the pyttsx3 module. Eli notes that pyttsx3 works well once it is set up correctly, but getting it working can be tricky. He demonstrates the system by asking the Raspberry Pi various questions, from historical facts about George Washington to humorous queries about groundhogs, showcasing both the speech recognition and text-to-speech capabilities.
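The text-to-speech step can be sketched with pyttsx3’s basic calls: `init()`, `setProperty()`, `say()`, and `runAndWait()`. The `speak` helper, its optional `engine` parameter, and the speaking rate of 160 words per minute are hypothetical choices, not taken from the video. Passing the engine in lets one engine be reused across turns, or replaced by a stub when testing without audio hardware. Note that on Linux, including Raspberry Pi OS, pyttsx3 relies on eSpeak, which has to be installed separately. That may account for some of the setup difficulty Eli mentions.

```python
def speak(text: str, engine=None, rate: int = 160):
    """Read `text` aloud with pyttsx3 and return the engine so callers can reuse it."""
    if engine is None:
        import pyttsx3  # imported lazily so the helper can be exercised with a stub engine
        engine = pyttsx3.init()
    engine.setProperty("rate", rate)  # words per minute; 160 is an assumed, unhurried pace
    engine.say(text)                  # queue the utterance
    engine.runAndWait()               # block until the queued speech has finished
    return engine
```

A typical call is `engine = speak("Hello from the Raspberry Pi")`, after which the same `engine` can be passed back in for later answers.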

The technical workflow has three stages. First, the captured audio is sent to Google’s online speech recognition service, which returns the transcribed text. Next, this text is processed by the Granite LLM, which runs locally on the Raspberry Pi through Ollama. Finally, the AI’s response is converted back into speech with pyttsx3, completing the conversational loop between the user and the device.
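The speech-to-text stage can be sketched with the SpeechRecognition package (`pip install SpeechRecognition`, plus PyAudio for microphone access). Its `recognize_google()` method sends audio to Google’s free web speech service. This is an assumption about how the video’s code works rather than a copy of it. The function name `listen_once`, the one-second ambient-noise calibration, and the choice to return `None` on failure are illustrative.

```python
def listen_once(recognizer=None, microphone=None):
    """Capture one utterance and return Google's transcription, or None on failure."""
    import speech_recognition as sr  # lazy import; the PyPI package is SpeechRecognition

    if recognizer is None:
        recognizer = sr.Recognizer()
    if microphone is None:
        microphone = sr.Microphone()  # requires PyAudio
    with microphone as source:
        # Sample background noise briefly so the energy threshold suits the room.
        recognizer.adjust_for_ambient_noise(source, duration=1)
        audio = recognizer.listen(source)  # records until the speaker pauses
    try:
        # Sends the recorded audio to Google's web speech service (needs internet).
        return recognizer.recognize_google(audio)
    except sr.UnknownValueError:
        return None  # the service could not make out any words
    except sr.RequestError as err:
        print(f"Speech service unavailable: {err}")
        return None
```

Returning `None` instead of raising lets a calling loop simply listen again when nothing intelligible was heard.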

Eli highlights how simple the code behind the project is. The entire system takes just 67 lines of Python, using modules for speech recognition, AI processing, and text-to-speech. The main loop listens for audio input, passes the transcribed text to the AI, and speaks the response. He admits the code is somewhat “ugly,” but it shows how these technologies can be integrated on a small, affordable computer.
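The listen, think, speak loop described above can be sketched independently of any particular library by passing the three stages in as functions. This is not Eli’s 67-line script: the `run_conversation` name, the spoken exit phrase “goodbye”, and the `max_turns` limit are hypothetical additions that give the loop a clean way to stop. In practice the three callables would wrap SpeechRecognition, Ollama, and pyttsx3 respectively.

```python
from typing import Callable, List, Optional, Tuple


def run_conversation(
    listen: Callable[[], Optional[str]],
    think: Callable[[str], str],
    speak: Callable[[str], object],
    exit_phrase: str = "goodbye",
    max_turns: Optional[int] = None,
) -> List[Tuple[str, str]]:
    """Hear a question, get the model's answer, say it aloud; repeat. Returns the Q/A log."""
    log: List[Tuple[str, str]] = []
    turns = 0
    while max_turns is None or turns < max_turns:
        turns += 1
        heard = listen()
        if not heard:
            continue  # nothing intelligible was heard; listen again
        if heard.strip().lower() == exit_phrase:
            speak("Goodbye!")
            break
        print(f"You said: {heard}")
        answer = think(heard)  # e.g. a call to the local Granite model
        print(f"Granite: {answer}")
        speak(answer)
        log.append((heard, answer))
    return log
```

Keeping the stages as plain functions means any one of them, for example the speech recognizer, can be swapped for a cheaper or offline alternative without touching the loop.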

Finally, Eli discusses the broader goals of the project at Silicon Dojo, where he aims to make advanced AI functionality accessible on low-cost hardware. The next challenge is to optimize the architecture so that similar capabilities can run on even cheaper devices, such as a $10 computer. He encourages viewers interested in hands-on AI and hardware projects to visit SiliconDojo.com for more information and future updates.