Eli the Computer Guy discusses the challenges of developing AI agents amid a rapidly evolving tech landscape and highlights the importance of standardization efforts led by major tech companies through the Linux Foundation’s Agentic AI Foundation. He explains how projects like the Model Context Protocol, Goose, and agents.md aim to create interoperable frameworks that will simplify AI development, improve reliability, and help the industry mature, much as HTML5 and earlier standards did for the web.

In this video, Eli the Computer Guy discusses the current state and challenges of AI technology, focusing on AI agents and the importance of standardization in the field. He begins by highlighting the rapid evolution of AI tech stacks, comparing it to the early days of web development, when there was no clear standard for building websites. Eli uses the example of Moondream, an AI computer vision model designed for edge devices like the Raspberry Pi, to illustrate both the potential and the difficulties developers face, such as poor documentation and inconsistent code behavior. He emphasizes that AI is still a new and evolving technology, and that developers often struggle with issues like AI drift, where a model's responses change over time and cause previously working code to fail.
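One common way to keep drift from failing silently is to validate every model reply before the rest of the program uses it. The sketch below is a minimal illustration under stated assumptions, not something shown in the video: the function `parse_detection`, the `REQUIRED_KEYS` table, and the JSON reply shape are all hypothetical and are not part of Moondream's API.

```python
import json

# Hypothetical reply shape: the keys and types we expect the model to return.
REQUIRED_KEYS = {"label": str, "confidence": (int, float)}


def parse_detection(raw_text):
    """Parse a model reply and raise ValueError if its shape has drifted."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model reply is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("model reply must be a JSON object")

    for key, expected_type in REQUIRED_KEYS.items():
        if key not in data:
            raise ValueError(f"model reply is missing key: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"model reply has wrong type for key: {key}")

    return data


# A reply in the expected shape parses cleanly.
good = parse_detection('{"label": "cat", "confidence": 0.93}')

# A drifted reply (free text instead of JSON) is rejected with a clear error.
try:
    parse_detection("I can see a cat in the image.")
    drift_detected = False
except ValueError:
    drift_detected = True
```

Failing loudly at the boundary makes a drifted reply show up as one clear error instead of a confusing failure further down the program.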

Eli then explains the concept of AI agents: AI systems that can take actions based on user input, such as booking appointments by understanding preferences and schedules. However, he points out that building and maintaining these agents requires a solid tech stack and reliable frameworks, and that these currently lack standardization. Without standards, it is difficult for developers to create scalable and maintainable AI solutions. To address this, major tech companies have joined forces with the Linux Foundation to create the Agentic AI Foundation (AAIF), which aims to standardize key AI technologies and protocols, including the Model Context Protocol (MCP), Goose, and agents.md.

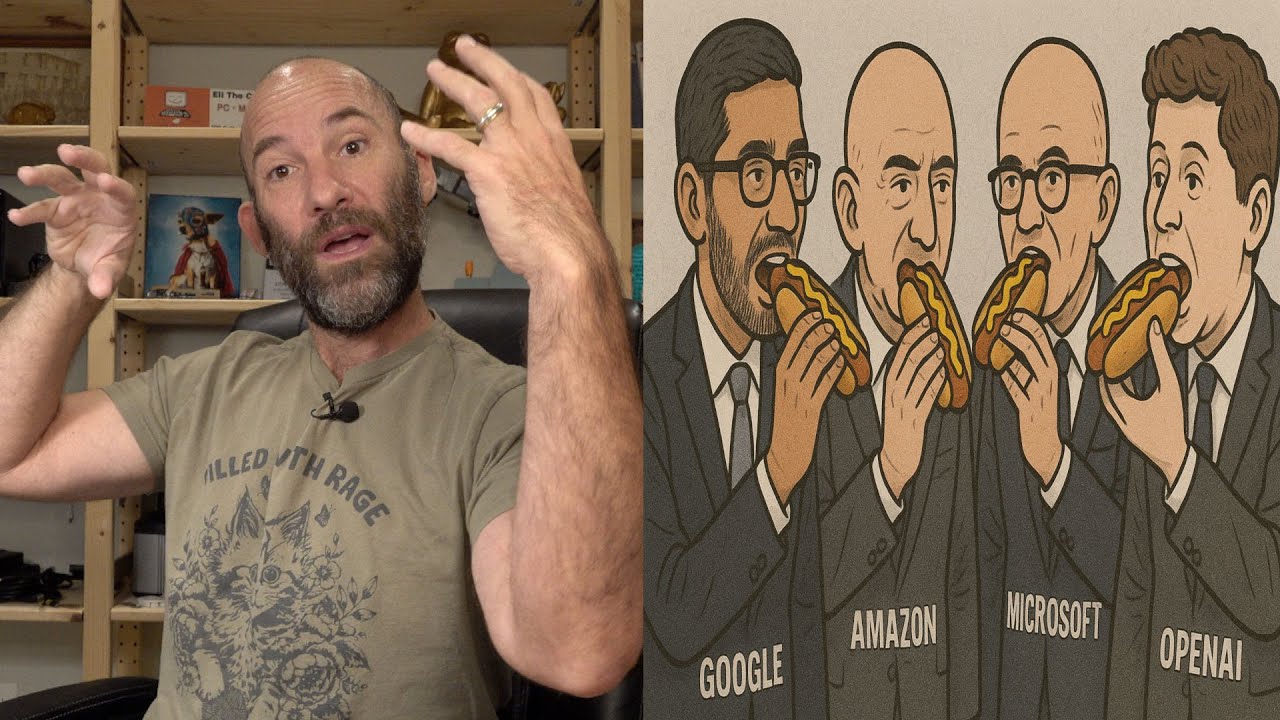

The Model Context Protocol (MCP), developed by Anthropic, is described as a “USB port for AI”: it lets AI agents connect to various data sources without a custom integration for each platform. Goose, contributed by Block (formerly Square), is an open-source, customizable AI agent designed for coding tasks; it supports MCP and can run locally or in the cloud. Agents.md, introduced by OpenAI, is a Markdown file convention (the file is conventionally named AGENTS.md) that gives AI coding agents project-specific instructions so that their behavior is more predictable. The collaboration among major players like Amazon, Google, Microsoft, and others under the Linux Foundation’s umbrella aims to promote interoperability and create de facto standards for AI development.
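To make the “USB port” idea a little more concrete: MCP messages are JSON-RPC 2.0 objects, and a client typically discovers a server's tools with a `tools/list` request and invokes one with `tools/call`. The sketch below only builds such request objects as plain dictionaries. It is not an MCP client, it skips the initialization handshake and transport, and the helper names `make_request` and `call_tool`, the tool name `lookup_calendar`, and its arguments are hypothetical.

```python
import json
from itertools import count

# Simple counter for JSON-RPC request ids (an illustrative choice).
_ids = count(1)


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


def call_tool(name, arguments):
    """Build a tools/call request for a named tool with its arguments."""
    return make_request("tools/call", {"name": name, "arguments": arguments})


# Ask the server which tools it offers.
list_request = make_request("tools/list")

# Invoke a hypothetical calendar tool; a real server defines its own tools.
call_request = call_tool("lookup_calendar", {"date": "2025-01-15"})

print(json.dumps(list_request))
print(json.dumps(call_request))
```

Because every server accepts the same message shape, an agent that can send these requests can, in principle, talk to any compliant data source without bespoke glue code for each one.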

Eli draws parallels between this effort and the standardization of web technologies like HTML5, which greatly simplified web development by providing a common framework. He acknowledges that while the AI standards being developed may not be perfect or final, having a unified system will help developers and companies build more reliable and maintainable AI applications. He also notes that, similar to how Python became the dominant language for APIs despite initial resistance, developers will need to adapt to whatever standards emerge in the AI space to stay relevant and effective.

In conclusion, Eli encourages viewers to stay informed about these developments and consider how standardization efforts like those led by the Linux Foundation will impact the future of AI technology. He invites feedback on the topic and promotes his free technology education initiative, Silicon Dojo, which offers hands-on classes on AI and computer vision. Eli remains cautiously optimistic about the move toward standardization, seeing it as a necessary step for the AI industry to mature and become more accessible for developers and organizations alike.