The video discusses a new Chinese paper introducing ASI-Arch, an AI system designed to discover and improve neural network architectures autonomously by running experiments and learning from the results without human intervention, which could accelerate AI innovation beyond what human researchers manage alone. The approach shows promising results and hints at a future of recursively self-improving AI, but experts remain cautious about the paper’s methodology and claims and stress the need for further validation.

The paper, titled “AlphaGo Moment for Model Architecture Discovery,” makes bold claims about AI’s ability to self-improve by autonomously discovering better neural network architectures. The authors argue that human researchers are the bottleneck in AI progress and that AI systems can accelerate innovation by conducting their own experiments and refining their designs without human intervention. This approach builds on the legacy of systems like AlphaGo and AlphaZero, which demonstrated that AI can teach itself strategies more effectively than humans can teach it. The paper introduces ASI-Arch, an AI framework designed to autonomously generate, test, and validate new neural architectures, which the authors describe as a shift from automated optimization to automated innovation.

ASI-Arch operates through four main modules: cognition, researcher, engineer, and analyst. The cognition module mines existing scientific literature and code repositories to gather prior knowledge. The researcher proposes new hypotheses and generates experimental code, which the engineer module then executes. Each result is evaluated in two ways: an AI judge assesses novelty, efficiency, and complexity, and real training tests measure performance. The two are combined into a fitness score. The analyst module summarizes the findings and feeds insights back to the researcher, creating a feedback loop that lets the system learn from its own experiments and improve over time. According to the paper, this process ran nearly 2,000 autonomous experiments and discovered 106 state-of-the-art linear attention architectures.
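The feedback loop described above can be sketched in a few lines of Python. This is a toy illustration, not the paper's implementation: every function name, the `Experiment` class, the random placeholder scores, and the equal-weight fitness formula are assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class Experiment:
    idea: str            # the component the proposal was built around
    judge_score: float   # placeholder for the AI judge's rating, in [0, 1]
    train_score: float   # placeholder for a real training result, in [0, 1]

    @property
    def fitness(self) -> float:
        # Assumption: equal-weight average; the paper's formula may differ.
        return 0.5 * self.judge_score + 0.5 * self.train_score


def cognition() -> list[str]:
    # Stand-in for mining literature and code repositories.
    return ["gating", "convolution"]


def researcher(knowledge: list[str], insights: list[str], rng: random.Random) -> str:
    # Pick an idea from prior knowledge or from the analyst's feedback.
    return rng.choice(knowledge + insights)


def engineer(idea: str, rng: random.Random) -> Experiment:
    # Stand-in for running generated code; scores here are random numbers.
    return Experiment(idea, rng.random(), rng.random())


def analyst(history: list[Experiment]) -> list[str]:
    # Feed the idea behind the best experiment so far back to the researcher.
    best = max(history, key=lambda e: e.fitness)
    return [best.idea]


def run_loop(steps: int, seed: int = 0) -> list[Experiment]:
    rng = random.Random(seed)
    knowledge = cognition()
    insights: list[str] = []
    history: list[Experiment] = []
    for _ in range(steps):
        idea = researcher(knowledge, insights, rng)
        history.append(engineer(idea, rng))
        insights = analyst(history)
    return history


if __name__ == "__main__":
    for exp in run_loop(5):
        print(exp.idea, round(exp.fitness, 3))
```

The point of the sketch is the data flow: each experiment's outcome changes what the researcher is likely to propose next, which is what separates this loop from a one-shot search.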

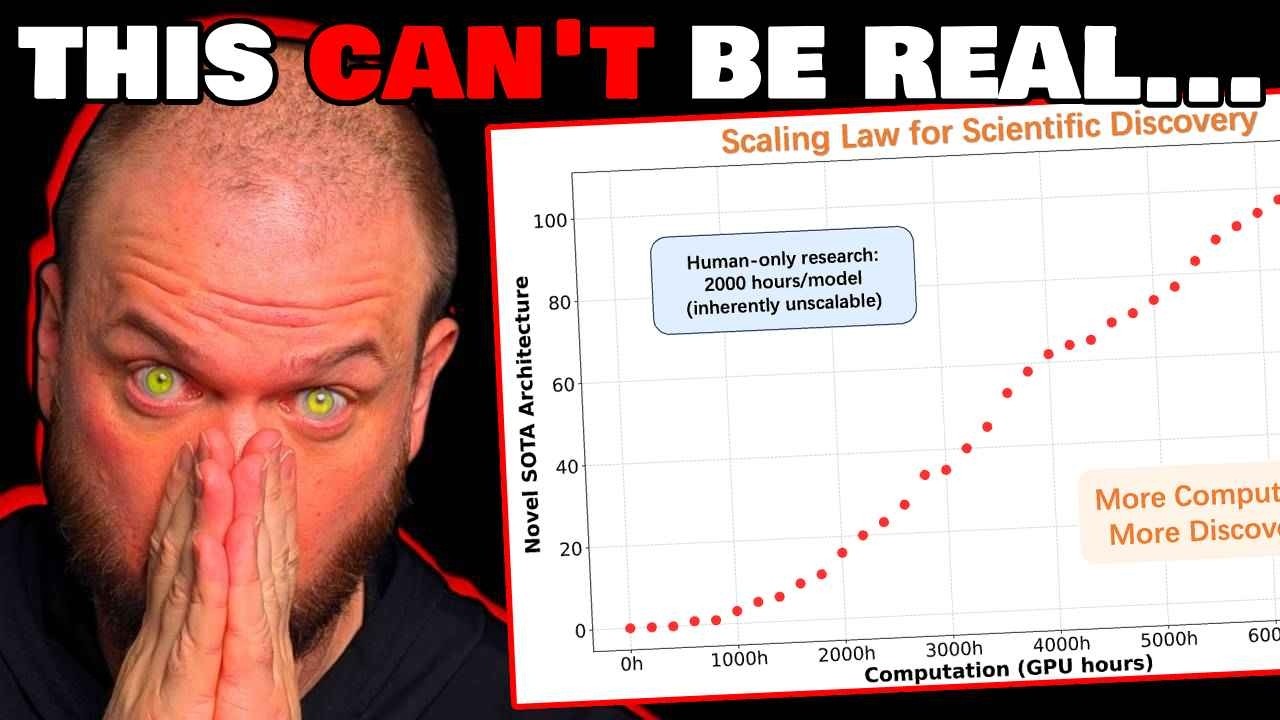

One of the key findings is a claimed scaling law for scientific discovery, analogous to the scaling laws in AI model training. The more computational resources (GPU hours) devoted to the process, the better the architectures become, suggesting that progress in AI research could become a function of compute power rather than human ingenuity. The system also revealed that a small subset of architectural components, such as gating mechanisms and convolutions, contributes disproportionately to breakthroughs, echoing the Pareto principle, under which roughly 20% of inputs yield 80% of results. Interestingly, 48.6% of innovations came from mining existing knowledge, 44.8% from the system’s own experimental experience, and only about 6.6% (the remainder) were entirely novel.
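To make the idea of a compute scaling law concrete, here is a minimal sketch that fits a straight line to discoveries versus GPU hours. The linear form is assumed purely for illustration, and the numbers are made up for the example; they are not results from the paper.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up toy data: GPU hours spent and strong architectures found so far.
gpu_hours = [1000.0, 2000.0, 4000.0, 8000.0]
discoveries = [5.0, 10.0, 20.0, 40.0]

slope, intercept = fit_line(gpu_hours, discoveries)

if __name__ == "__main__":
    print(f"discoveries per GPU hour: {slope:.4f}, intercept: {intercept:.2f}")
```

If a relationship like this held on real data, the slope would be the quantity of interest: it says how many additional discoveries each extra GPU hour buys.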

Despite the excitement, the video also highlights skepticism from experts like Lucas Beyer from Meta, who points out potential issues in the paper’s methodology, such as discarding experimental results that deviate significantly from the baseline, which could bias the findings. There is also concern that some claims are exaggerated or that the paper is leveraging hype to attract academic citations. However, because the research is open source, the community can attempt to replicate and validate the results. The video emphasizes that while this particular paper’s claims remain to be confirmed, the broader trend of AI systems contributing increasingly to their own development is well supported and likely to continue.

In conclusion, the video frames this research as a potentially transformative step toward recursively self-improving AI, in which AI systems autonomously conduct scientific research and innovate beyond human capabilities. If validated, this could accelerate AI progress dramatically and lead to an intelligence explosion as AI improves itself at an ever-increasing pace. While caution is warranted given the current uncertainties, the video suggests that AI research may increasingly rely on AI-driven discovery, fundamentally changing how technological advances are made and possibly reshaping the trajectory of AI development in the coming years.