The video explains how transformer architectures, originally developed for language, have been adapted for vision tasks through models like ViT, DiT, and MMDiT by converting images into tokens and enabling flexible conditioning (such as text prompts). It traces the progression from simple image classification to complex multimodal generation, showing how each model builds on the previous one to handle increasingly sophisticated vision challenges.

The video explores how transformer architectures, originally designed for language modeling, have been adapted for computer vision tasks such as image classification, image generation, and video generation. The transition from language to vision required addressing two main challenges: tokenization (converting a continuous image into a sequence of tokens, i.e. embedding vectors that play the role words play in text) and conditioning (enabling models to respond to prompts, such as text). The video focuses on three key transformer-based architectures in vision: the Vision Transformer (ViT), the Diffusion Transformer (DiT), and the Multimodal Diffusion Transformer (MMDiT), explaining how each builds on the previous one to handle increasingly complex tasks.

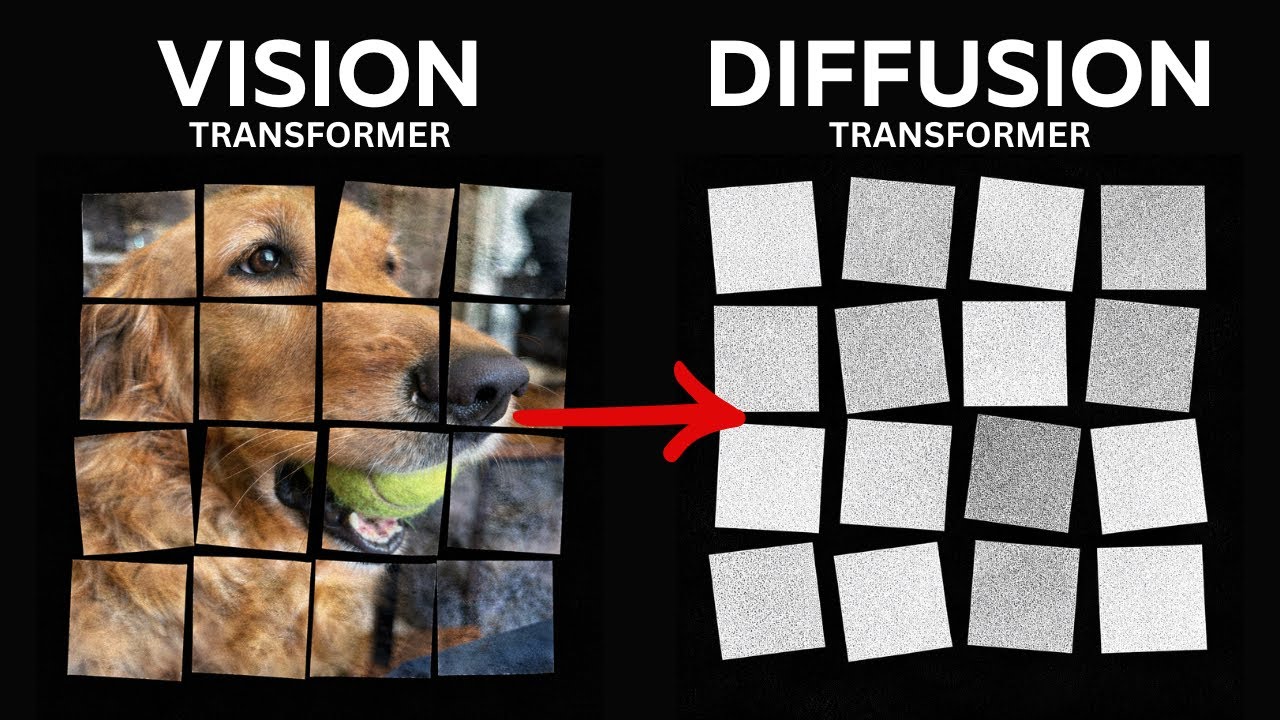

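The Vision Transformer (ViT) adapts the transformer encoder for image classification by splitting an image into fixed-size patches (e.g., 16x16 pixels) and treating each patch as a token, much as a word is treated in text. Each patch is flattened and linearly projected into an embedding, and positional embeddings are added so the model retains spatial information. A special classification token (CLS) is prepended to the sequence; after the transformer encoder has processed it, the CLS token's output summarizes the image and feeds the final classifier. Because self-attention lets every patch attend to every other patch from the first layer, the model can capture global context more directly than traditional convolutional neural networks (CNNs), whose receptive fields grow only gradually with depth. Using patches rather than individual pixels as tokens also keeps computation tractable: a 224x224 image yields 196 patch tokens instead of 50,176 pixel tokens, which matters because the cost of self-attention grows quadratically with sequence length.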
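The tokenization steps above (patchify, flatten, project, prepend CLS, add positions) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not ViT's actual implementation: images are nested lists of shape height x width x channels, and the projection matrix, CLS token, and positional embeddings, which ViT learns during training, are filled with random placeholder values here. The function names `patchify`, `linear_embed`, and `build_sequence` are hypothetical.

```python
import random
from typing import List

Vector = List[float]
Image = List[List[List[float]]]  # height x width x channels, as nested lists


def patchify(image: Image, patch_size: int) -> List[Vector]:
    """Split an H x W x C image into non-overlapping square patches, scanning
    patches row by row, and flatten each patch into one vector."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image height and width must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            flat: Vector = []
            for row in range(top, top + patch_size):
                for col in range(left, left + patch_size):
                    flat.extend(image[row][col])  # all channels of one pixel
            patches.append(flat)
    return patches


def linear_embed(patches: List[Vector], weight: List[Vector]) -> List[Vector]:
    """Project each flattened patch to a D-dim token; weight is D x patch_dim."""
    return [[sum(w * x for w, x in zip(row, patch)) for row in weight] for patch in patches]


def build_sequence(tokens: List[Vector], cls_token: Vector,
                   pos_embeddings: List[Vector]) -> List[Vector]:
    """Prepend the CLS token, then add one positional embedding per position."""
    sequence = [cls_token] + tokens
    if len(pos_embeddings) != len(sequence):
        raise ValueError("need one positional embedding per token, including CLS")
    return [[t + p for t, p in zip(tok, pos)] for tok, pos in zip(sequence, pos_embeddings)]


if __name__ == "__main__":
    size, patch, channels, dim = 224, 16, 3, 8
    rng = random.Random(0)
    image = [[[rng.random() for _ in range(channels)] for _ in range(size)] for _ in range(size)]
    patches = patchify(image, patch)
    print(len(patches), len(patches[0]))  # 196 768  (14 x 14 patches, 16 * 16 * 3 values each)
    # Placeholder "learned" parameters (random here, trained in a real ViT).
    weight = [[rng.gauss(0.0, 0.02) for _ in range(len(patches[0]))] for _ in range(dim)]
    cls_token = [0.0] * dim
    positions = [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(len(patches) + 1)]
    sequence = build_sequence(linear_embed(patches, weight), cls_token, positions)
    print(len(sequence), len(sequence[0]))  # 197 8  (196 patch tokens + 1 CLS token)
```

The sequence the encoder sees has one more token than there are patches; in ViT only the encoder's output at the CLS position is passed to the classification head.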

For image generation, the Diffusion Transformer (DiT) replaces the convolutional U-Net backbone of earlier diffusion models with a transformer. Instead of operating on raw pixels, DiT works in a compressed latent space produced by a pretrained variational autoencoder (VAE), which significantly reduces computational cost: a 256x256x3 image becomes a 32x32x4 latent, which DiT then splits into patches just as ViT does. The model learns to reverse a diffusion process that gradually adds noise to these latents; at generation time it starts from pure noise and removes it step by step to produce a new image. Conditioning on the diffusion timestep and the class label can be handled in several ways: concatenating conditioning tokens to the input sequence (in-context conditioning), cross-attention, feature modulation via adaptive layer normalization (adaLN), and adaLN-Zero, which adds residual scaling by gating each block's output with a factor initialized to zero. These options trade effectiveness against compute; in the DiT paper, adaLN-Zero performed best.
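The two mechanisms described above, forward noising and adaLN-Zero conditioning, can be sketched in plain Python. This is a simplified illustration, not DiT's implementation. Assumptions: a linear beta schedule from 1e-4 to 0.02 over 1,000 steps (the DDPM schedule), a latent treated as a flat list of floats, and modulation applied to a single token through a single residual branch with a plain linear map from the conditioning vector. In DiT the shift, scale, and gate values are regressed by an MLP from the summed timestep and class embeddings, are shared across all tokens in a block, and exist separately for the attention and MLP branches. The names `linear_alpha_bars`, `add_noise`, and `adaln_zero` are hypothetical.

```python
import math
from typing import Callable, List

Vector = List[float]


def linear_alpha_bars(num_steps: int = 1000, beta_start: float = 1e-4,
                      beta_end: float = 0.02) -> List[float]:
    """alpha_bar_t = prod over s <= t of (1 - beta_s), for a linear beta schedule."""
    alpha_bars, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0: Vector, t: int, noise: Vector, alpha_bars: List[float]) -> Vector:
    """Closed-form forward diffusion: x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * n for x, n in zip(x0, noise)]


def layer_norm(x: Vector, eps: float = 1e-6) -> Vector:
    """LayerNorm without its own affine parameters; adaLN supplies scale and shift."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def adaln_zero(token: Vector, cond: Vector, cond_weight: List[Vector],
               sublayer: Callable[[Vector], Vector]) -> Vector:
    """One adaLN-Zero-style residual branch for a single token.

    cond is the conditioning embedding (e.g. timestep + class); cond_weight has
    3 * len(token) rows and maps cond to per-channel shift, scale, and gate.
    """
    dim = len(token)
    params = [sum(w * c for w, c in zip(row, cond)) for row in cond_weight]
    shift, scale, gate = params[:dim], params[dim:2 * dim], params[2 * dim:]
    # Feature modulation: normalize, then scale and shift using the conditioning.
    modulated = [n * (1.0 + s) + b for n, s, b in zip(layer_norm(token), scale, shift)]
    out = sublayer(modulated)
    # Residual scaling: the gate multiplies the branch output before the residual add.
    return [x + g * o for x, g, o in zip(token, gate, out)]
```

When `cond_weight` is all zeros, every gate is 0 and the branch returns its input unchanged; this is why initializing the conditioning regression to zero makes each adaLN-Zero block start out as the identity function.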

A key limitation of the original DiT is that it is class-conditional: it can only generate images for a fixed set of classes (such as the 1,000 ImageNet classes). To enable flexible text prompts, subsequent models such as PixArt-Alpha added cross-attention layers in which image tokens attend to token embeddings produced by a pretrained language model. However, this approach treats the text tokens as static: image tokens read from them, but the text representation is never updated in response to the image. That one-way flow can be restrictive for tasks such as image editing, where the meaning of the prompt depends on the image content.
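The one-way flow of cross-attention can be illustrated with a small pure-Python sketch. It is a deliberate simplification, not PixArt-Alpha's code: it omits the learned query/key/value projections and multiple heads, and the lists passed as `text_tokens` stand in for frozen text-encoder embeddings. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention: each query takes a softmax-weighted average of values."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    outputs = []
    for q in queries:
        weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in keys])
        outputs.append([sum(w * v[i] for w, v in zip(weights, values))
                        for i in range(len(values[0]))])
    return outputs


def cross_attention_block(image_tokens: List[Vector],
                          text_tokens: List[Vector]) -> Tuple[List[Vector], List[Vector]]:
    """Image tokens query the text tokens; text tokens are only read, never updated."""
    attended = attention(image_tokens, text_tokens, text_tokens)
    updated_image = [[x + a for x, a in zip(tok, att)]
                     for tok, att in zip(image_tokens, attended)]
    return updated_image, text_tokens  # text passes through unchanged


if __name__ == "__main__":
    image = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    text = [[2.0, 0.0], [0.0, 2.0]]
    new_image, new_text = cross_attention_block(image, text)
    print(new_text is text)  # True: the text representation never sees the image
```

However many such layers are stacked, the text tokens that come out are the ones that went in, which is the limitation the joint-attention design targets.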

The Multimodal Diffusion Transformer (MMDiT) addresses this by treating image and text tokens symmetrically. Each modality keeps its own set of transformer weights, and the two token sequences are concatenated for a joint attention operation, so image tokens attend to text tokens and text tokens attend to image tokens. Because the two modalities update each other at every layer, the image can resolve ambiguity in the prompt and vice versa, improving performance on tasks that require a nuanced understanding of both. The video concludes by noting that these transformer-based architectures, initially developed for images, have also become the foundation for video generation models, highlighting the growing versatility and impact of transformers in vision.
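Joint attention can be contrasted with cross-attention in a similar pure-Python sketch. Assumptions: each modality's weights are a hypothetical dict of `"q"`, `"k"`, and `"v"` matrices, there is a single head, and the per-modality output projections, MLPs, and adaLN modulation of a full MMDiT block are omitted. The key steps are projecting each modality with its own weights, running one attention over the concatenated sequence, and splitting the result back by modality.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[Vector]


def softmax(scores: List[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention over the full (concatenated) sequence."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    outputs = []
    for q in queries:
        weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in keys])
        outputs.append([sum(w * v[i] for w, v in zip(weights, values))
                        for i in range(len(values[0]))])
    return outputs


def matvec(matrix: Matrix, vec: Vector) -> Vector:
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def project(tokens: List[Vector], weights: Dict[str, Matrix], name: str) -> List[Vector]:
    return [matvec(weights[name], t) for t in tokens]


def joint_attention_block(image_tokens: List[Vector], text_tokens: List[Vector],
                          image_weights: Dict[str, Matrix],
                          text_weights: Dict[str, Matrix]) -> Tuple[List[Vector], List[Vector]]:
    """Modality-specific Q/K/V projections, one attention over both, then split."""
    q = project(image_tokens, image_weights, "q") + project(text_tokens, text_weights, "q")
    k = project(image_tokens, image_weights, "k") + project(text_tokens, text_weights, "k")
    v = project(image_tokens, image_weights, "v") + project(text_tokens, text_weights, "v")
    attended = attention(q, k, v)  # every token attends to all image and text tokens
    n_img = len(image_tokens)
    new_image = [[x + a for x, a in zip(t, att)]
                 for t, att in zip(image_tokens, attended[:n_img])]
    new_text = [[x + a for x, a in zip(t, att)]
                for t, att in zip(text_tokens, attended[n_img:])]
    return new_image, new_text
```

Unlike the cross-attention case, the returned text tokens depend on the image tokens, so feeding a different image changes the text representation as well.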