The video explains how AI models like Anthropic’s Claude are being copied through large-scale automated interactions, highlighting that the economic incentive to distill and replicate advanced AI is universal and not limited to any one country. It argues that while copied models may perform well on benchmarks, they lack the depth and adaptability of original frontier models, urging users to understand these limitations and make informed choices based on their needs.

The video discusses a recent incident in which Anthropic, an AI company, caught three Chinese labs (DeepSeek, Moonshot, and MiniMax) using large-scale automated conversations to extract and copy the capabilities of Anthropic’s Claude model. These labs used sophisticated networks of fake accounts and proxies to evade detection, running millions of interactions to distill Claude’s intelligence into their own models. While the story has been widely framed as a Cold War-style act of espionage between the US and China, the video argues that this misses the broader, more important point: the real issue is the economic and technological pressure to copy valuable AI, which is not limited to any one country.

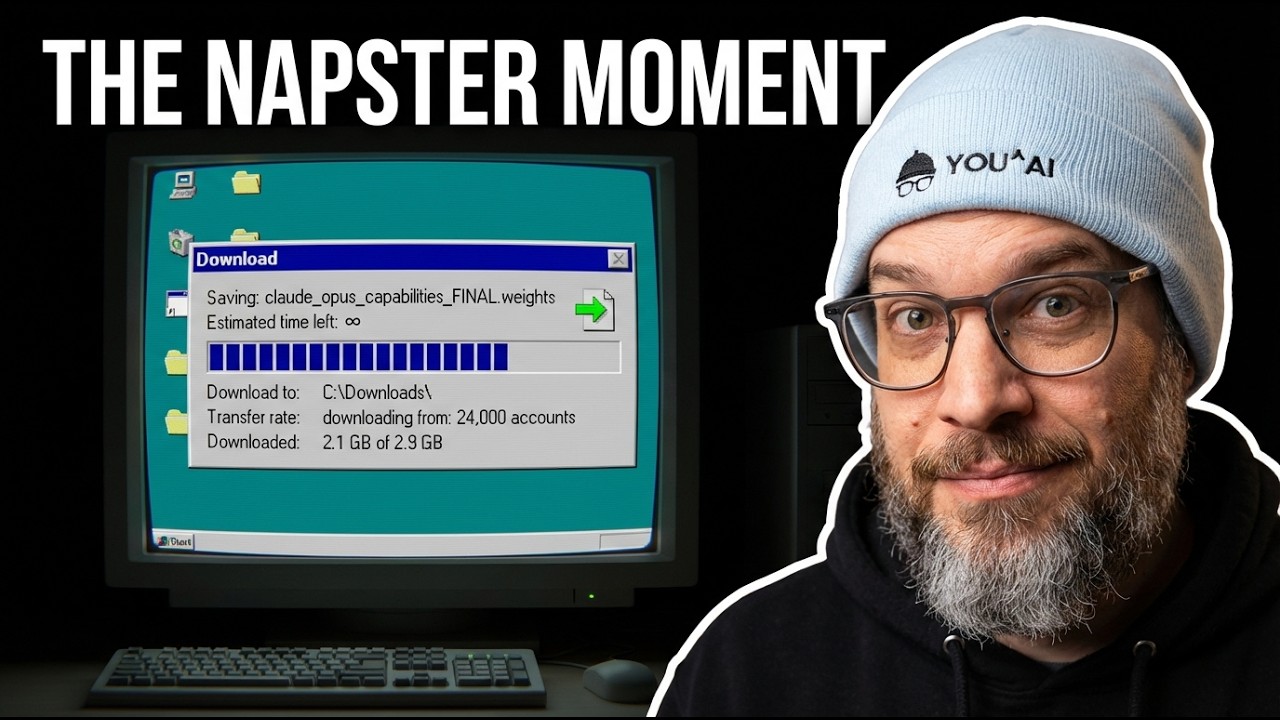

The core argument is that AI models, unlike physical technologies such as nuclear weapons, are fundamentally mathematical and easily copyable. The cost of developing a frontier AI model is measured in billions of dollars, but the cost of copying or distilling its capabilities through API access is orders of magnitude lower. This creates a “pressure gradient,” where the incentive to extract and replicate advanced AI is overwhelming and essentially universal. The video compares this to the Napster era of music piracy, emphasizing that such copying cannot be stopped outright: technical and legal measures act only as speed bumps that slow it down.

A key insight is that models built through distillation—training on the outputs of another, more advanced model—are systematically less capable than the originals, especially for complex, sustained, or open-ended tasks. While distilled models may perform well on benchmarks and narrow tasks, they lack the broad, generalizable reasoning and adaptability of frontier models. This performance gap is most pronounced in “agentic” work, where AI must operate autonomously over long periods, adapt to new situations, and use tools in novel ways. Current evaluation methods and benchmarks often fail to capture these differences, making it easy for organizations to overestimate the capabilities of cheaper, distilled models.
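The gap described above can be illustrated with a toy sketch. It is an analogy under stated assumptions, not a measurement of any real model: `teacher` is a hypothetical stand-in for a stronger model reachable only through queries, the "student" is a simple straight-line fit trained purely on the teacher's answers, and the query range plays the role of a narrow benchmark.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical black-box teacher (assumption: stands in for a stronger model)."""
    return x * x  # nonlinear behaviour the student only sees through samples


def distill_linear_student(queries):
    """Fit y = a*x + b to the teacher's answers with ordinary least squares."""
    answers = [teacher(x) for x in queries]
    n = len(queries)
    mean_x = sum(queries) / n
    mean_y = sum(answers) / n
    var_x = sum((x - mean_x) ** 2 for x in queries)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(queries, answers))
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return lambda x: a * x + b


def mean_abs_error(student, points):
    """Average absolute disagreement between student and teacher on the given points."""
    return sum(abs(student(x) - teacher(x)) for x in points) / len(points)


# The student only ever sees teacher outputs for queries drawn from [0, 1].
rng = random.Random(0)
queries = [rng.uniform(0.0, 1.0) for _ in range(1000)]
student = distill_linear_student(queries)

in_range = [i / 100 for i in range(101)]          # same region as the queries
out_of_range = [5 + i / 100 for i in range(101)]  # region never queried

in_error = mean_abs_error(student, in_range)
out_error = mean_abs_error(student, out_of_range)

print(f"error inside the queried range:  {in_error:.3f}")
print(f"error outside the queried range: {out_error:.3f}")
```

In this sketch the student tracks the teacher closely where it was trained and diverges sharply elsewhere, which mirrors the summary's point that an evaluation confined to the queried range would overstate what the copy can do.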

The video also highlights that the incentive to distill is not unique to Chinese labs or adversarial states. Any organization that cannot afford the massive costs of frontier AI development—whether startups, academic groups, or even large companies outside the top tier—faces the same economic pressure to extract capabilities from the leaders. Even talent acquisition, where companies hire away researchers with valuable know-how, operates on the same principle: it’s cheaper to acquire intelligence than to build it from scratch. The line between legitimate research and illicit distillation is blurry, and the universal nature of this incentive means that AI capabilities will inevitably leak and spread.

Finally, the video urges viewers—especially those choosing or deploying AI tools—to understand the provenance and limitations of the models they use. For routine, well-defined tasks, distilled models may offer excellent value. But for high-stakes, open-ended, or autonomous work, only frontier models provide the necessary generality and robustness. The speaker recommends rigorous, real-world testing of AI models beyond standard benchmarks to assess their true capabilities. Ultimately, the video concludes that the challenge is not about stopping copying entirely, but about managing the speed of capability diffusion and making informed choices about which models to trust for which tasks. The framing of this issue as a Cold War is misleading; it’s fundamentally an economic and technological phenomenon that transcends borders.