The video criticizes Anthropic for cutting off competitors such as Trey, Windsurf, and OpenAI from its Claude models. It frames this as a fear-driven effort to protect Anthropic’s technology and market position rather than a principled stance on safety. It calls for greater accountability and transparency from Anthropic and warns that these restrictive practices could stifle innovation and damage the company’s reputation in the AI community.

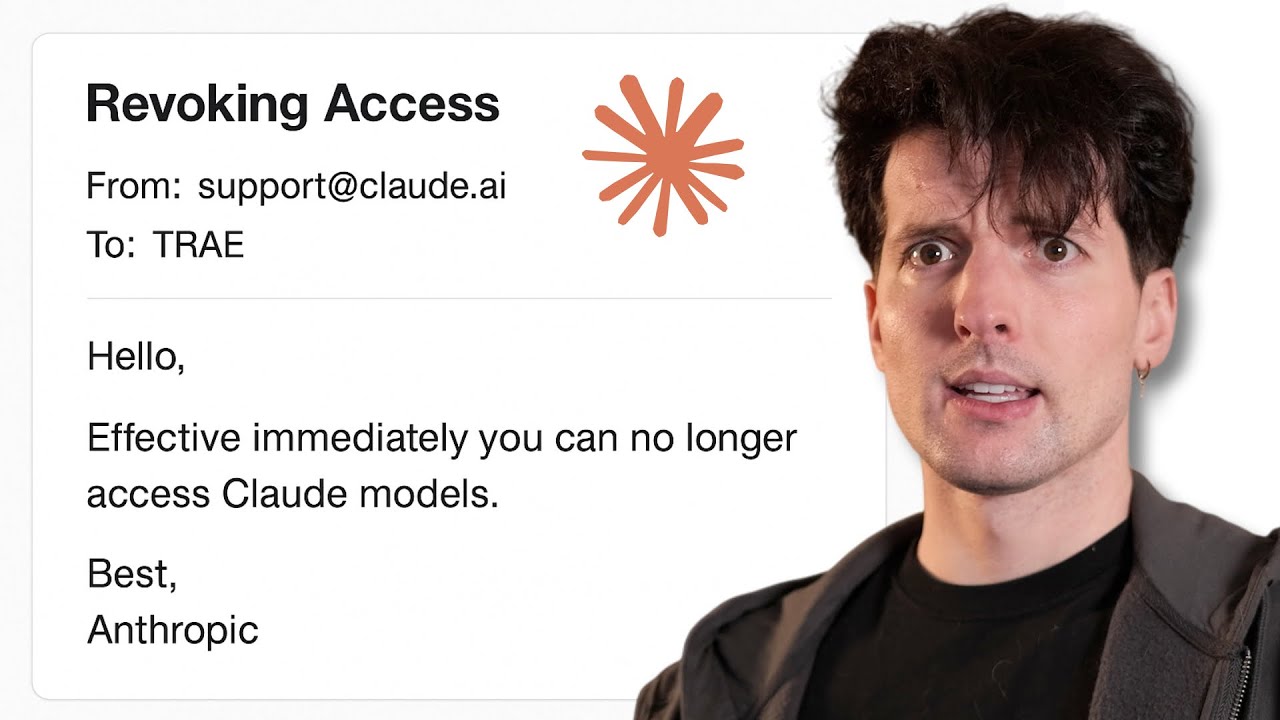

The video discusses Anthropic’s recent decision to cut off Trey’s access to its Claude models. Trey (officially Trae) is an AI-powered IDE developed by ByteDance, TikTok’s parent company. It is similar to Cursor and Windsurf but more affordable. The video’s creator has worked directly with the Trey team and praises their dedication and the product’s steady improvement. The video presents the cutoff as part of a broader pattern: Anthropic has also cut off Windsurf and OpenAI from Claude, citing concerns over competition and data security. This has created tension in the AI developer community, particularly because, according to the video, Anthropic is the only major AI lab to enforce access restrictions this strict.

The video attributes Anthropic’s sensitivity to fear that companies, especially those with ties to China such as ByteDance, could use its models to advance competing AI systems through techniques like model distillation, in which one model is trained to imitate another model’s outputs. Anthropic recently updated its terms of service to restrict access for companies from certain regions, citing legal, regulatory, and national security risks. The change affects US-based subsidiaries of Chinese companies, such as Trey, even though their teams are in the US. Anthropic’s stated concern is that such entities could use Claude to build AI that serves adversarial military or intelligence objectives, or that competes globally with US technology companies. According to the video, this fear is heightened by the rapid progress of competitors like Windsurf and Cursor, which are training their own models on data derived from user interactions with Claude.

The video argues that Windsurf’s latest model, SWE-1.5, shows clear signs of having been trained on data shaped by Claude’s behavior. The result is a model that is fast and effective at code generation but still retains a “Claudy” (Claude-like) style. Cursor, similarly, has built models using large datasets of user feedback to improve performance. These developments threaten Anthropic’s competitive edge, particularly since Anthropic has not released any open-weight models, unlike other major labs such as Google and OpenAI. Its reluctance to open-source its code and its selective publication of research benchmarks reinforce the perception that it guards its technology aggressively, sometimes at the expense of the broader AI community.

The video also critiques Anthropic’s business practices, suggesting that the company times its restrictions strategically to minimize backlash while maximizing competitive advantage. The creator is frustrated by Anthropic’s positioning as a safety-focused, developer-friendly company and argues that its actions contradict this image. Cutting off Trey, a well-funded and promising competitor, is framed as a move driven by fear rather than principle. The video also places these restrictions in a wider context, comparing them to other cases in the tech industry where companies limit access to their services to stifle competition, a practice it views negatively.

In conclusion, the video calls for greater accountability from Anthropic and urges the AI community not to overlook these questionable practices simply because Anthropic’s models perform well on certain tasks. The creator argues that Anthropic should be held to a higher standard if it wants to be seen as a positive force in AI development. While acknowledging Anthropic’s technical strengths, especially in agentic tasks, the video warns that its restrictive and secretive approach could hinder progress and innovation across the industry. The creator closes by inviting viewers to share whether they think Anthropic deserves the positive reputation it currently holds.