Ashesh Rambachan asks whether foundation models (large-scale AI systems trained on diverse data) truly capture underlying “world models” such as Newtonian mechanics or merely replicate surface-level patterns, and he uses tools such as inductive bias probes to evaluate how they generalize. He concludes that current models often fail to learn these fundamental structures, which points to the need for new training approaches, and he highlights how discrepancies between model behavior and established theories could still aid scientific discovery.

In this presentation, Ashesh Rambachan, an assistant professor of economics at MIT, discusses foundation models, focusing on how they are applied and evaluated in economics. Foundation models are large-scale AI models pre-trained on massive, diverse datasets with unsupervised or self-supervised learning. Unlike traditional supervised models trained for one specific task, a foundation model serves as a common base that can be fine-tuned for many downstream tasks using much smaller datasets. Rambachan stresses the importance of understanding which “world models”, if any, these models encode: the deeper structure that would allow them to generalize across tasks.

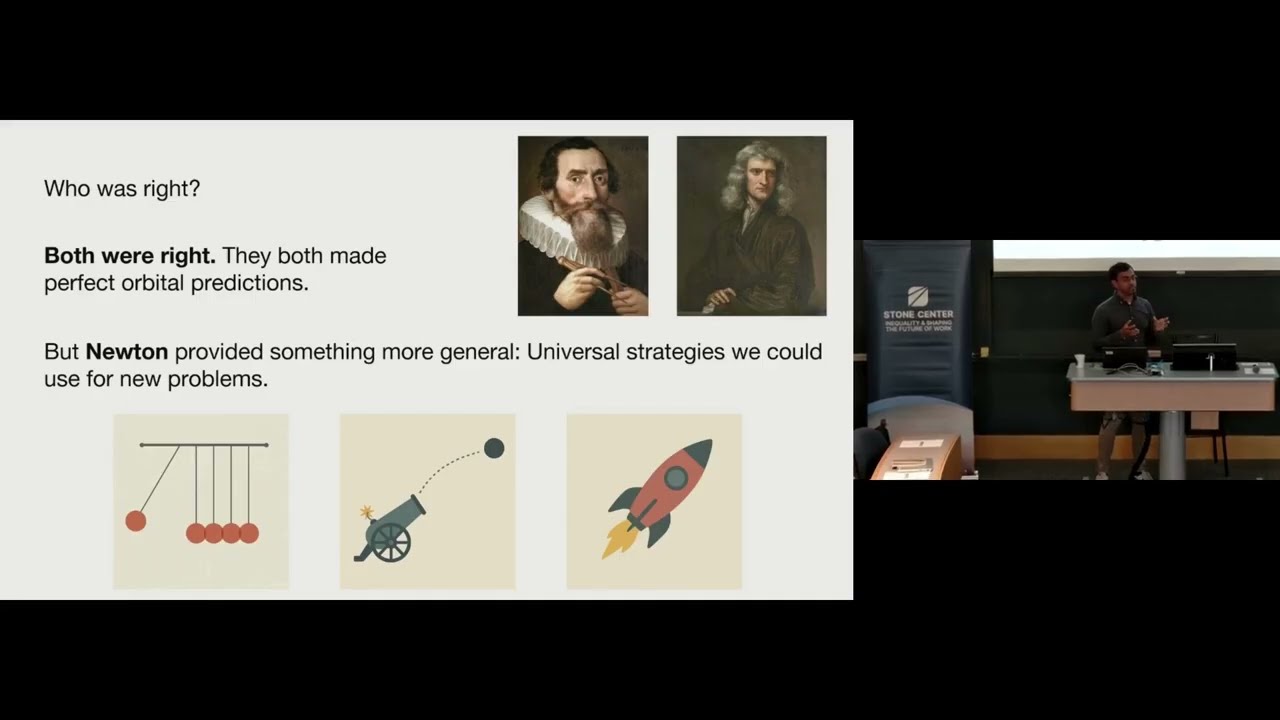

Rambachan illustrates the idea of a world model with planetary orbit prediction. He contrasts Kepler, whose laws accurately predicted orbits without explaining the forces behind them, with Newton, whose mechanics provided a more general and explanatory model. The question is whether a foundation model trained to predict planetary orbits captures the deeper Newtonian mechanics or merely reproduces surface-level, Kepler-style predictions. The analogy extends to economics, where foundation models may or may not capture fundamental economic structures such as human capital or market beta.
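To make the Kepler-versus-Newton contrast concrete, the sketch below assumes a single body on a circular orbit around a fixed central mass, with the gravitational parameter `GM` set to 1 in arbitrary units. Integrating nothing but Newton's inverse-square law reproduces Kepler's third law (T² proportional to r³) as a consequence rather than as a separately fitted regularity. The names `acceleration` and `orbital_period`, the step size, and the choice of a velocity Verlet integrator are illustrative assumptions, not details from the talk.

```python
import math

GM = 1.0  # assumed gravitational parameter of the central body (arbitrary units)


def acceleration(x, y):
    """Newton's inverse-square law: acceleration toward a mass fixed at the origin."""
    r3 = (x * x + y * y) ** 1.5
    return -GM * x / r3, -GM * y / r3


def orbital_period(r, dt=1e-3):
    """Integrate a circular orbit of radius r with velocity Verlet; return one revolution's duration."""
    x, y = r, 0.0
    vx, vy = 0.0, math.sqrt(GM / r)  # speed of a circular orbit at radius r
    ax, ay = acceleration(x, y)
    t = 0.0
    while True:
        # Velocity Verlet: advance position, then velocity with the averaged acceleration.
        x_new = x + vx * dt + 0.5 * ax * dt * dt
        y_new = y + vy * dt + 0.5 * ay * dt * dt
        ax_new, ay_new = acceleration(x_new, y_new)
        vx += 0.5 * (ax + ax_new) * dt
        vy += 0.5 * (ay + ay_new) * dt
        # The body starts on the +x axis moving in +y, so one revolution ends
        # when y crosses zero from below; interpolate the crossing time linearly.
        if y < 0.0 <= y_new:
            return t + dt * (-y) / (y_new - y)
        x, y, ax, ay = x_new, y_new, ax_new, ay_new
        t += dt


if __name__ == "__main__":
    for r in (1.0, 2.0, 4.0):
        T = orbital_period(r)
        # Kepler's third law: T^2 / r^3 = 4*pi^2 / GM, independent of the radius.
        print(f"r={r}: T={T:.4f}, T^2/r^3={T * T / r**3:.4f}, 4*pi^2={4 * math.pi**2:.4f}")
```

Each ratio T²/r³ should come out close to 4π² (about 39.48) for every radius. Kepler obtained this regularity empirically from observations, whereas here it follows from the single force law the simulation encodes.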

To evaluate whether foundation models capture these underlying world models, Rambachan introduces a method called inductive bias probes. The approach has three steps: generate synthetic datasets from a hypothesized true world model, fine-tune the foundation model on these datasets, and compare how the fine-tuned model extrapolates with how the true world model would. The key insight is that a foundation model’s inductive bias, meaning the kinds of extrapolations it prefers, is revealed by how it generalizes from limited data. Applying this method to a transformer trained on simulated orbital data, Rambachan finds that although the model predicts orbits well, it shows no inductive bias toward Newtonian mechanics; instead, it learns more complex and less generalizable heuristics.
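The three steps of an inductive bias probe can be illustrated with a deliberately tiny toy. This is a sketch under strong assumptions, not Rambachan's implementation: the hypothesized world model says an input's state is the input itself, each synthetic task is a random affine function of that state, and the "foundation model" is stood in for by a frozen feature map whose linear head is fit on five points (the fine-tuning step). Its extrapolations outside the training range are then compared with the world model's. All names (`world_state`, `aligned_features`, `heuristic_features`, `fine_tune`, `probe`) and all numeric settings are hypothetical.

```python
import math
import random


# Hypothetical world model: the latent state of input x is x itself.
def world_state(x):
    return x


def make_task(rng):
    """Synthetic task consistent with the world model: a random affine function of the state."""
    a, b = rng.uniform(-2.0, 2.0), rng.uniform(-2.0, 2.0)
    return lambda x: a * world_state(x) + b


# Toy stand-ins for a pre-trained model's representation (both hypothetical).
def aligned_features(x):
    return [1.0, x]  # contains the true state


def heuristic_features(x):
    return [1.0, math.sin(x)]  # matches the state only for small |x|


def fine_tune(features, xs, ys):
    """Fit a linear head on frozen 2-d features by least squares (2x2 normal equations)."""
    rows = [features(x) for x in xs]
    s00 = sum(f0 * f0 for f0, _ in rows)
    s01 = sum(f0 * f1 for f0, f1 in rows)
    s11 = sum(f1 * f1 for _, f1 in rows)
    t0 = sum(f0 * y for (f0, _), y in zip(rows, ys))
    t1 = sum(f1 * y for (_, f1), y in zip(rows, ys))
    det = s00 * s11 - s01 * s01
    w0 = (s11 * t0 - s01 * t1) / det
    w1 = (s00 * t1 - s01 * t0) / det
    return lambda x: w0 * features(x)[0] + w1 * features(x)[1]


def probe(features, n_tasks=200, n_train=5, seed=0):
    """Mean out-of-range disagreement between the fine-tuned model and the world model."""
    rng = random.Random(seed)
    test_xs = [1.5 + 0.1 * i for i in range(6)]  # extrapolation points, outside [-0.5, 0.5]
    total = 0.0
    for _ in range(n_tasks):
        # 1. Generate a synthetic dataset from the world model.
        task = make_task(rng)
        train_xs = [rng.uniform(-0.5, 0.5) for _ in range(n_train)]
        # 2. Fine-tune on a handful of in-range points.
        head = fine_tune(features, train_xs, [task(x) for x in train_xs])
        # 3. Compare extrapolations with the world model's answers.
        total += sum(abs(head(x) - task(x)) for x in test_xs) / len(test_xs)
    return total / n_tasks


if __name__ == "__main__":
    print("aligned   :", probe(aligned_features))
    print("heuristic :", probe(heuristic_features))
```

With these settings the aligned representation's extrapolation error is essentially zero (floating-point noise), while the heuristic representation fits the five training points closely yet drifts away from the world model once inputs leave the training range. That out-of-range gap, rather than in-sample accuracy, is what the probe is meant to expose.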

Further experiments with large language models that have reasoning capabilities show similar difficulty in systematically recovering the true physical laws governing orbits. This suggests that current foundation models may primarily optimize for their pre-training objectives (such as next-token prediction) rather than uncover fundamental world structure. Rambachan suggests that alternative training objectives, or architectures explicitly designed to track state representations, might improve foundation models’ ability to learn meaningful world models, opening avenues for research on models that better capture underlying structure in both physical and economic domains.
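One way to ask whether a model has recovered the law, rather than just the orbits, is to read off the force law its predictions imply. As a hedged illustration (not the procedure described in the talk), suppose we have pairs of orbital radius r and predicted acceleration magnitude |a|. Fitting log|a| = c + k·log r by least squares should give k ≈ −2 if the predictions follow Newton's inverse-square law. The "predictions" here are generated from the true law with about 1% multiplicative noise purely for the demo, and `fit_power_law` is a hypothetical helper.

```python
import math
import random


def fit_power_law(rs, accs):
    """Least-squares fit of log|a| = c + k * log(r); returns the exponent k and intercept c."""
    xs = [math.log(r) for r in rs]
    ys = [math.log(a) for a in accs]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    k = sxy / sxx
    return k, my - k * mx


if __name__ == "__main__":
    rng = random.Random(1)
    GM = 1.0  # assumed gravitational parameter (arbitrary units)
    rs = [rng.uniform(0.5, 5.0) for _ in range(200)]
    # Stand-in for a model's predicted acceleration magnitudes: Newtonian values
    # GM / r^2 perturbed by roughly 1% multiplicative noise (purely illustrative).
    accs = [GM / r**2 * math.exp(rng.gauss(0.0, 0.01)) for r in rs]
    k, c = fit_power_law(rs, accs)
    print(f"fitted exponent k = {k:.3f}, intercept c = {c:.3f}")  # k should be near -2
```

A model that had learned some other heuristic might instead show an exponent well away from −2, large residuals, or an exponent that shifts across regions of the data.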

In conclusion, Rambachan highlights the broader implications of this work for economics and science. Even where current foundation models fail to recover known world models, discrepancies between their predictions and established theories can serve as opportunities for scientific discovery and hypothesis generation. He encourages economists and other researchers to use precise evaluation metrics to understand foundation models’ capabilities and limitations, and to explore new training methods that might enable these models to uncover deeper, more generalizable structure in complex data.