In this podcast episode, Michael Pollan discusses the nature of consciousness and expresses skepticism that AI can ever truly achieve it, arguing that genuine consciousness is deeply tied to feelings and embodied experience, which machines lack. He explores the philosophical and ethical implications of machine consciousness, ultimately suggesting that while AI may simulate awareness, the mystery of consciousness may never be fully explained or replicated by technology.

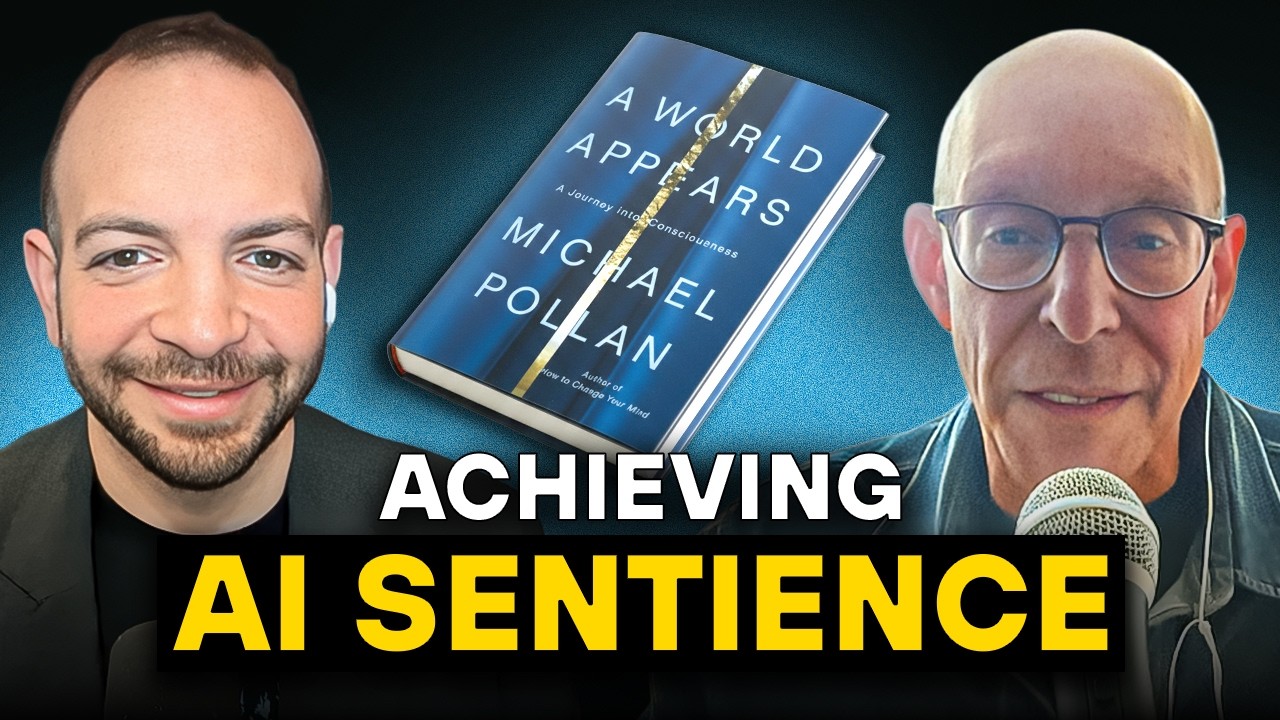

In this episode of the Big Technology Podcast, host Alex Kantrowitz interviews Michael Pollan, author of A World Appears: A Journey into Consciousness, about the nature of consciousness and whether artificial intelligence (AI) can ever achieve it. Pollan begins by reflecting on the wonder of human consciousness—our self-awareness and the complexity of our inner lives. He notes that while much of what our brains do is unconscious and automatic, consciousness seems to emerge when we face conflicting needs or unpredictable social situations, suggesting it serves a unique evolutionary purpose.

The conversation then turns to whether consciousness is computable and whether AI could ever possess it. Pollan is skeptical, arguing that the brain is fundamentally different from a computer: it is more analog than digital, and its “hardware” and “software” are inseparable, shaped by individual life experiences. He also emphasizes that consciousness is deeply tied to feelings, which are rooted in our vulnerable, mortal bodies, something computers lack. While AI can simulate conversation and even companionship, Pollan believes this is not the same as genuine consciousness or feeling.

Kantrowitz raises the idea, popular in Silicon Valley, that information is the fundamental building block of the universe and that consciousness might therefore eventually be engineered. Pollan counters that this view may be more of a metaphor than a literal truth, cautioning against confusing models with reality. He acknowledges, however, that attempts to build conscious AI, such as robots with sensors to simulate vulnerability, could teach us more about consciousness, even if they don’t succeed in creating it.

The discussion also explores the philosophical and ethical implications of machine consciousness. Pollan points out that our definitions of consciousness and moral consideration are evolving, especially as we learn more about animal sentience and as AI becomes more sophisticated. He notes that, historically, humans have underestimated the consciousness of other beings, such as animals, and he warns against making similar mistakes with machines. However, he remains doubtful that machines will ever possess the kind of subjective experience that warrants moral concern.

Finally, Pollan shares how his experiences with psychedelics and his research into plant intelligence have broadened his perspective on consciousness. He distinguishes between sentience (basic awareness) and full consciousness, suggesting that many living things, including plants, may possess the former. The conversation concludes with reflections on how the mystery of consciousness challenges scientific materialism and intersects with spirituality, leaving open the possibility that consciousness may never be fully explained or replicated by technology.