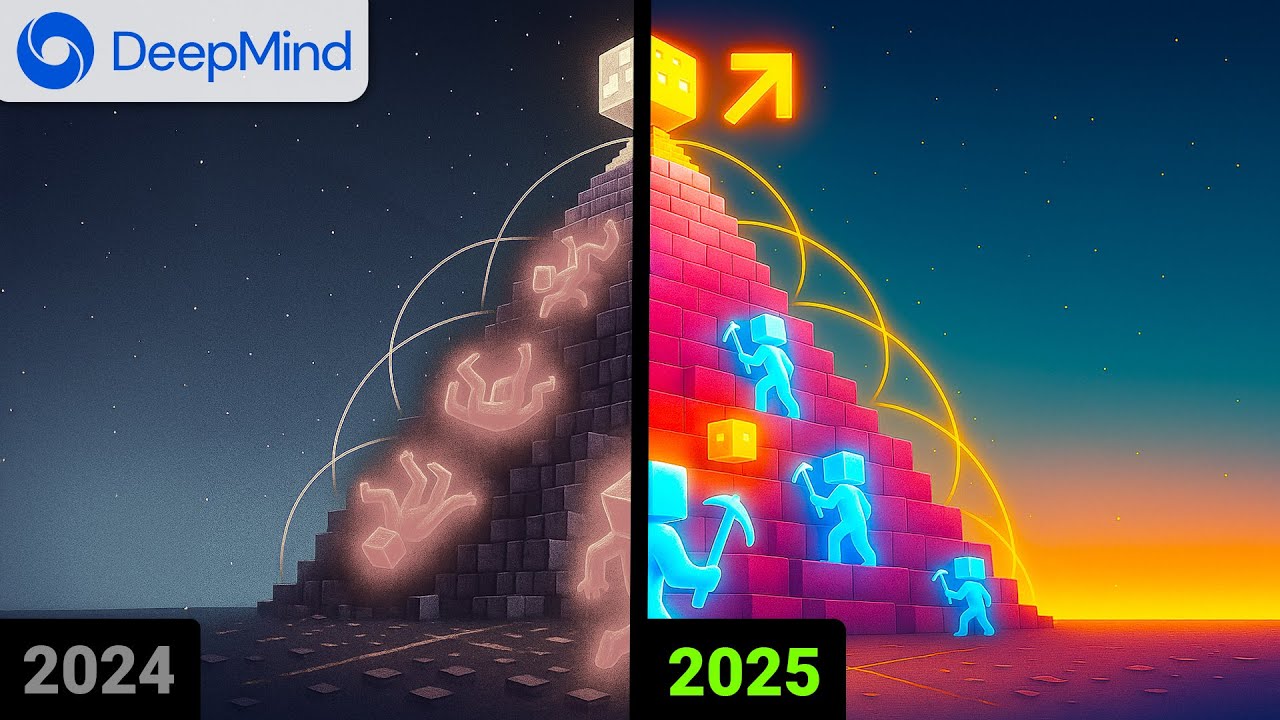

DeepMind developed an AI that mastered Minecraft by building and training within an internal simulated world model, using significantly less human gameplay data and no direct game interaction. This imagination-driven approach enabled the AI to plan complex actions, outperform previous models, and adapt flexibly, highlighting potential applications beyond gaming despite some limitations in long-term simulation accuracy.

DeepMind has developed an AI that mastered Minecraft without ever playing the game itself. Earlier models relied on vast amounts of gameplay footage or tutorials (one was trained on a million hours of YouTube videos), whereas this AI was given only a small amount of human gameplay footage and no direct access to the game. Instead of learning by playing Minecraft directly, the AI used that footage to build an internal world model, essentially a neural simulation of how Minecraft operates, and then practiced entirely within this imagined environment.

The approach stands out because it requires far less data than previous methods. For example, OpenAI’s Video Pre-Training (VPT) technique trained on 250,000 hours of annotated gameplay footage, while DeepMind’s AI learned from roughly one hundredth as much. Despite this, DeepMind’s AI outperformed earlier models, achieving success rates of up to 90% on tasks like crafting a stone pickaxe and occasionally even obtaining diamonds, something that behavioral cloning (BC) and vision-language-action (VLA) models had not managed.

The AI’s training process occurs in three phases. First, it watches the limited human gameplay footage to build its internal world model. Next, it begins training within this simulated environment, receiving instant feedback (e.g., +1 point for mining a block) to learn which actions are valuable. Finally, it practices millions of times in its imagination, learning from both successes and failures. This method allows the AI to plan and execute complex sequences of over 20,000 actions, something that traditional models struggle to achieve.
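The three phases can be illustrated with a toy sketch. Nothing here is DeepMind's code or architecture: the six-state "chain world", the tabular world model, and the use of plain Q-learning are all assumptions chosen to keep the example small. The point it shows is that once a model of the dynamics and rewards has been fitted from a short offline log, the agent can improve its policy using only imagined transitions and touch the real environment only for the final evaluation.

```python
import random

N_STATES = 6            # hypothetical chain world: states 0..5, goal at 5
GOAL = N_STATES - 1
ACTIONS = (-1, +1)      # step left / step right

def real_step(state, action):
    """The 'real game'. Used only to record demos and for final evaluation."""
    nxt = min(max(state + action, 0), GOAL)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward

def record_demos():
    """Stand-in for the small offline log of human play."""
    log = []
    for start, action in ((0, +1), (GOAL - 1, -1)):
        state = start
        for _ in range(5):      # walk right to the goal, then left to the wall
            nxt, r = real_step(state, action)
            log.append((state, action, nxt, r))
            state = nxt
    return log

def fit_world_model(log):
    """Phases 1 and 2: learn dynamics and rewards from the log alone."""
    dynamics, rewards = {}, {}
    for s, a, nxt, r in log:
        dynamics[(s, a)] = nxt
        rewards[(s, a)] = r
    return dynamics, rewards

def train_in_imagination(dynamics, rewards, episodes=2000, horizon=10,
                         alpha=0.5, gamma=0.9, seed=0):
    """Phase 3: Q-learning on imagined rollouts; real_step is never called."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        s = rng.randrange(GOAL)             # imagined start state (not the goal)
        for _ in range(horizon):
            a = rng.choice(ACTIONS)         # random exploration; Q-learning is off-policy
            nxt, r = dynamics[(s, a)], rewards[(s, a)]
            done = nxt == GOAL
            target = r if done else r + gamma * max(q[(nxt, b)] for b in ACTIONS)
            q[(s, a)] += alpha * (target - q[(s, a)])
            if done:
                break
            s = nxt
    return q

def greedy_policy(q):
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]

def evaluate(policy, max_steps=50):
    """Run the learned policy in the real environment, starting from state 0."""
    s, steps = 0, 0
    while s != GOAL and steps < max_steps:
        s, _ = real_step(s, policy[s])
        steps += 1
    return steps

log = record_demos()
dynamics, rewards = fit_world_model(log)
q = train_in_imagination(dynamics, rewards)
policy = greedy_policy(q)
steps_to_goal = evaluate(policy)
```

In this sketch the agent sees only ten logged transitions, yet the policy it learns in imagination walks straight to the goal when finally run in the real environment. The actual system works from raw pixels with a learned neural world model, which is a far harder setting than this lookup table.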

One of the most impressive aspects of this AI is its ability to learn when to imitate human behavior and when to innovate on its own. For example, copying human gameplay works well when the AI has the right tools, but if it needs to chop a tree without an axe, it must figure out a new strategy. This flexibility is enabled by the AI’s internal simulation and imagination, which allows it to explore “what if” scenarios and adapt its behavior accordingly. The researchers suggest that this imagination-based training could extend beyond Minecraft to real-world applications, such as teaching robots to safely practice tasks in simulated environments before acting in reality.

However, the AI does have limitations. Its internal simulations are accurate only over short time spans, so it stitches together many short “dreams” rather than one long, flawless plan. This means it struggles with long-term cause and effect, and small errors in its imagination can accumulate over time. Despite this, the progress made with significantly less data and no direct gameplay is remarkable. This breakthrough opens exciting possibilities for future AI research and applications, demonstrating the power of imagination-driven learning.
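A toy calculation shows why short rollouts limit the damage from an imperfect model. The numbers are invented for illustration: the true dynamics add 1.0 per step, and a hypothetical learned model adds 1.02. The sketch also assumes that each short "dream" restarts from a true recorded state, which is one plausible reading of the stitching described above rather than a confirmed detail of the system.

```python
def true_step(x):
    return x + 1.0

def model_step(x):
    return x + 1.02     # hypothetical learned model with a small per-step bias

def long_rollout_error(steps):
    """Error after one uninterrupted imagined rollout."""
    x_true = x_model = 0.0
    for _ in range(steps):
        x_true = true_step(x_true)
        x_model = model_step(x_model)
    return abs(x_model - x_true)

def chunked_rollout_error(steps, chunk):
    """Worst error when each short rollout restarts from a true state."""
    x_true = 0.0
    worst = 0.0
    for start in range(0, steps, chunk):
        x_model = x_true                    # re-anchor the dream on a real state
        for _ in range(min(chunk, steps - start)):
            x_true = true_step(x_true)
            x_model = model_step(x_model)
            worst = max(worst, abs(x_model - x_true))
    return worst

long_err = long_rollout_error(100)          # error grows with every step
short_err = chunked_rollout_error(100, 10)  # error resets every 10 steps
```

Over 100 steps the single long rollout drifts by about 2.0, while ten re-anchored 10-step rollouts never drift by more than about 0.2. The trade-off is the one the article describes: no single short rollout can represent cause and effect that spans more than its own horizon.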