The video explains how short AI-generated videos, typically around 8 seconds long because of technical limitations, are designed to hijack viewers' emotions quickly and spread misinformation by exploiting social media algorithms and emotional triggers. It highlights the dangers of such content, offers tips for identifying AI videos, and emphasizes the need for viewer vigilance, platform accountability, and regulation to curb the spread of misleading synthetic media.

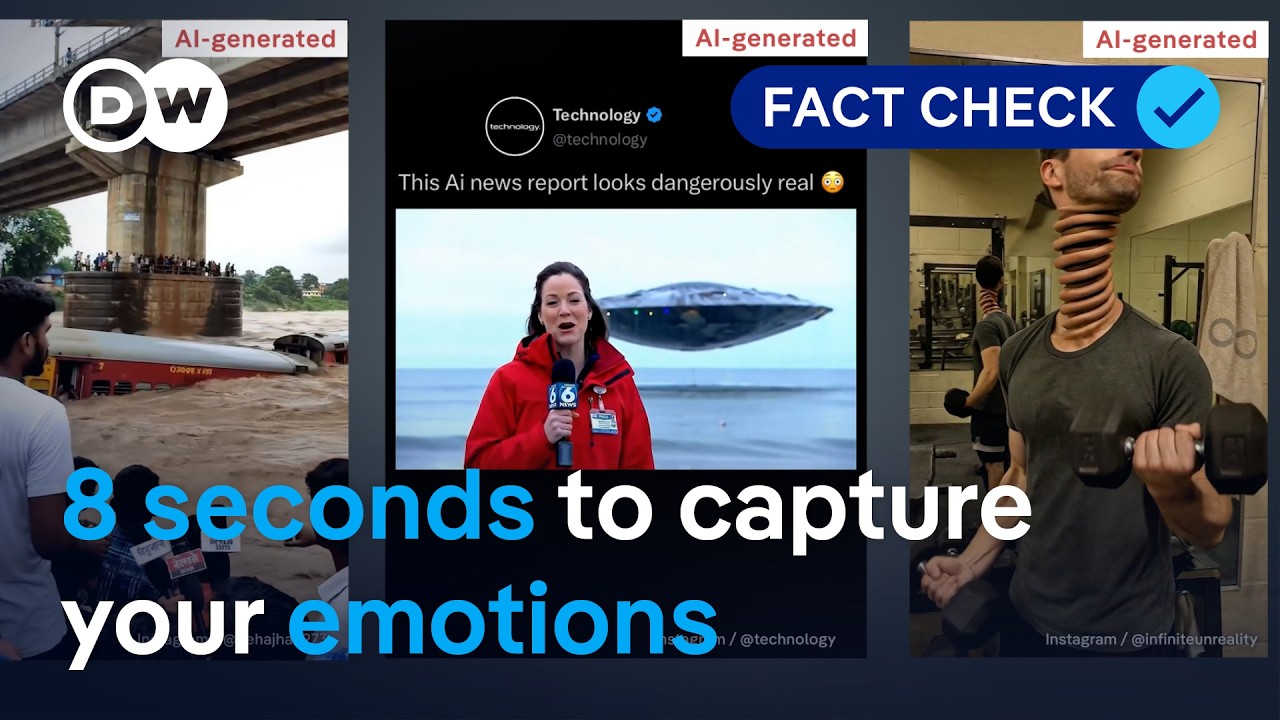

The video explores the phenomenon of AI-generated videos, particularly those that are around 8 seconds long, and how they are designed to hijack viewers’ emotions quickly. These short clips, often referred to as “AI slop,” are produced en masse using cheap tools that require minimal effort and expertise. While some AI videos can be humorous, many serve as tools for misinformation and disinformation, polluting online spaces and overshadowing authentic content. The video aims to answer why these clips are so short, how to identify them, and why they can be dangerous.

One key reason AI videos tend to be about 8 seconds long is technical. Generating longer videos requires massive computing power, and current diffusion models for video generation typically produce clips of at most 10 seconds, or 240 frames. Attempts to extend these clips often result in unnatural scene changes or motion. Despite these constraints, models such as Google's Veo 3 have advanced significantly, generating highly realistic videos with synchronized audio in just minutes. The short length of these videos leaves little time for critical thinking, which makes them highly shareable and effective at spreading misinformation.

The video highlights several telltale signs of AI-generated content. These include a lack of scene changes, no camera movement such as panning or zooming, gibberish or nonsensical text, typical AI errors like merging bodies or disproportionate body parts, and the frequent use of high-angle shots resembling drone footage in crisis or disaster scenes. Examples include AI-generated clips depicting mass funerals or floods, which contain subtle but identifiable mistakes. Such videos exploit emotional triggers and social media algorithms that curate content based on users’ interests, reinforcing a sense of belonging and making viewers more likely to engage and share.

The dangers of AI-generated videos extend beyond harmless entertainment. They can be used to spread false news, manipulate public opinion, and fuel harmful narratives such as racism and misogyny. The video cites instances in which political figures shared fake clips and in which AI-generated reporters delivered sexist content. Repeated exposure on social media platforms normalizes such content and deepens these societal problems. As synthetic videos become hyperrealistic, distinguishing fact from fiction grows increasingly difficult, posing a significant challenge to information integrity.

To combat the spread of misleading AI videos, the video suggests several solutions. Social media platforms should label AI-generated content, and generated videos should carry invisible watermarks, as Google has done with its Veo 3 model. However, current AI detection tools remain unreliable, so much of the responsibility falls on viewers. The video advises viewers to be cautious with short, single-scene clips, to verify information through credible sources, and to be mindful of their own emotional reactions. Ultimately, staying informed, sharing responsibly, and advocating for regulation are crucial steps in mitigating the risks posed by AI-generated misinformation.