The video critiques a flawed political science study that claims discussing AI existential risks doesn’t distract from immediate harms, highlighting its vague definitions, limited scope, and misleading conclusions. It emphasizes the importance of addressing real, current AI harms alongside existential risks and calls for meaningful regulation as AI technologies rapidly advance.

This follow-up video addresses a doomer talking point about AI risks that was raised in the comments on the creator’s previous video, “No, AI Will Not Doom Us All, But We Need to Be Worrying About Much More Important AI Risk.” The creator highlights a recent article from the Journal of Medical Ethics, presented in July 2024, that contradicts the doomer argument. The main issue discussed is the misinterpretation and sensationalizing of a poorly conducted study from a political science department whose reputation is questionable because of past unethical research involving Reddit. The creator emphasizes that the problem lies not with the comment itself but with the misleading articles about the study and the study’s hyperbolic headline.

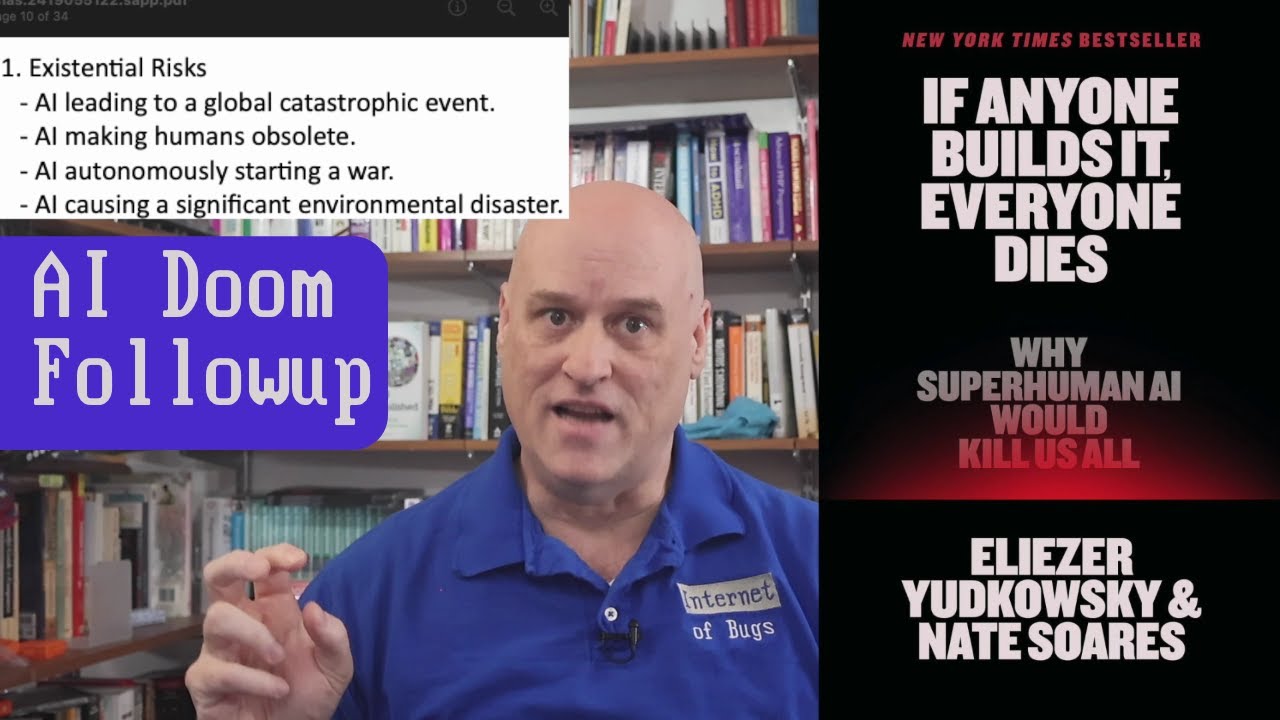

The study in question claims that “Existential risk narratives about AI do not distract from its immediate harms,” but the creator points out that the authors’ definitions of “existential,” “immediate,” and “distract” are unclear and problematic. The study’s methodology was a paid, voluntary online survey in which participants rated the likelihood and impact of randomly assigned AI risks. The survey asked about only four existential risks and four immediate risks, which limits its scope. The creator also criticizes the study for treating ratings of risks on a scale as a measure of distraction: a rating records how likely or severe a participant thinks a risk is, not whether attention has been drawn away from other risks.

Breaking down the risks, the study’s existential risks are AI causing global catastrophic events, making humans obsolete, autonomously starting wars, and causing significant environmental disasters. The creator argues that these categories are vague and poorly defined, especially “making humans obsolete,” which was left open to individual interpretation. The creator also notes that global catastrophes and wars, while serious, have never resulted in the extinction of humanity, so these categories fall short of the more extreme doomer scenarios in which AI wipes out all humans.

The immediate risks listed in the study are job losses in certain sectors, mass surveillance, misinformation, and biases in decision-making. The creator disagrees with this narrow definition of immediate harms, citing real-world examples of AI causing direct and serious harm right now, such as a teenager harmed by a chatbot, wrongful imprisonment due to faulty facial recognition, and dangerous medical advice from AI. These examples illustrate immediate, tangible harms that the study’s definition overlooks, reinforcing the creator’s argument that focusing on extreme existential risks distracts from addressing pressing current issues.

Finally, the creator reiterates that the study does not disprove concerns about existential AI risks; it shows only that discussing those risks does not reduce concern about immediate harms in a survey context. The video concludes by referencing the ethics paper “AI and the Falling Sky: Interrogating X-Risk,” which aligns more closely with the creator’s perspective on existential risks. The creator urges viewers to focus on meaningful regulation and on preventing AI’s real harms, especially as companies like OpenAI loosen restrictions, potentially increasing risks in the near future.