The video reviews ChatGPT 5.4, noting significant improvements in quantitative modeling and tool use but inconsistent performance relative to competitors like Claude Opus 4.6, especially between its “thinking mode” and default “auto mode.” The reviewer concludes that while 5.4 excels at complex, agentic tasks, it still lags in writing quality and nuanced decision-making, and advises users to choose models based on their specific needs.

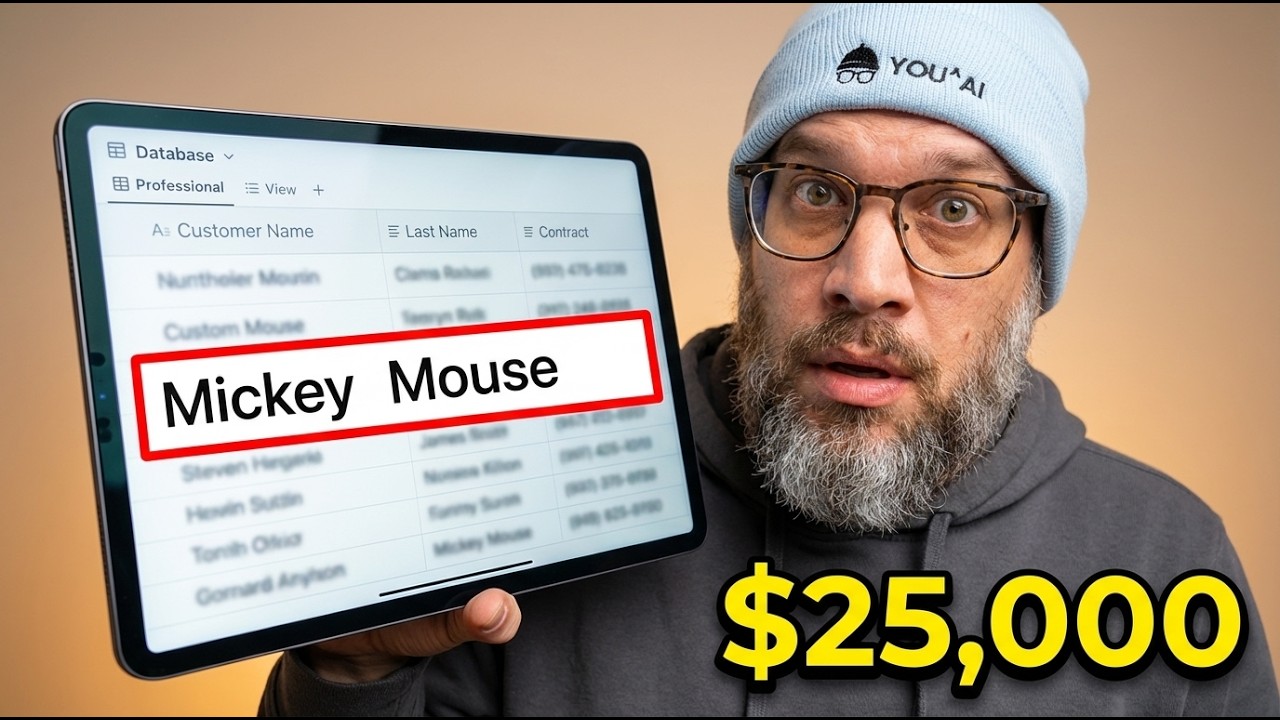

GPT-5.4 Let Mickey Mouse Into a Production Database. Nobody Noticed. (What This Means For Your Work)

The video provides a comprehensive review of ChatGPT 5.4, comparing it to leading AI models like Claude Opus 4.6 and Gemini 3.1. The creator begins with a simple, real-world test, asking whether to walk or drive to a nearby car wash, and highlights that ChatGPT 5.4’s “thinking mode” gave a well-structured but incorrect answer, while its competitors answered correctly and concisely. This sets the stage for a broader discussion of ChatGPT 5.4’s strengths and weaknesses, especially in practical, everyday scenarios. The video emphasizes that while OpenAI markets 5.4 as its most capable model, it sometimes fails basic reasoning tasks that other models handle with ease.

The reviewer conducted a series of blind evaluations, comparing the models on business writing, creative writing, verbal creativity, complex data migration, factual accuracy, and self-awareness. ChatGPT 5.4 showed significant improvements over previous versions, particularly in quantitative modeling and file processing, but still lagged behind Opus 4.6 in writing quality and nuanced decision-making. In creative and business writing, 5.4 struggled with tone and clarity, and in product management scenarios, it made logical but ultimately incorrect choices. However, it excelled in tasks requiring the integration and processing of diverse file types, demonstrating superior tool use and data discovery capabilities.

A major finding was the stark difference between ChatGPT 5.4’s “thinking mode” and its default “auto mode.” In thinking mode, the model performed at or near the top in factual accuracy and complex reasoning, but in auto mode, it often fell to last place, making basic factual errors. This inconsistency poses a challenge for users, especially in professional settings, as it requires constant vigilance to ensure the correct mode is enabled. The reviewer stresses the importance of training teams to use thinking mode for critical tasks, as relying on auto mode can lead to subpar results.

The video also explores the strategic direction of OpenAI, noting that ChatGPT 5.4 is optimized for agentic tasks: long-running, tool-driven workflows that resemble autonomous agents more than traditional chatbots. The model’s strengths in quantitative modeling, tool discovery, and computer use point toward a future where AI systems manage complex, sustained workflows rather than just generating text or answering questions. The reviewer connects this to recent hires at OpenAI and the company’s public narrative, suggesting that 5.4 is a foundational step toward more advanced agentic systems.

In conclusion, ChatGPT 5.4 is described as the most interesting, though not always the best, model currently available. Its strengths make it well suited to agentic infrastructure and complex data tasks, but it remains weaker than competitors like Opus 4.6 in writing and nuanced judgment. The reviewer advises users to match the model to their specific needs: 5.4 for quantitative, tool-heavy tasks and Opus for writing and decision-making. The video encourages viewers to look beyond benchmark scores and focus on practical capabilities, especially as AI models continue to evolve rapidly toward more autonomous, agentic roles in the workplace.
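
The reviewer's advice amounts to a simple routing rule, which a team could write down explicitly. The following is only a minimal sketch of that idea, not anything shown in the video: the task categories, the model labels (`chatgpt-5.4`, `claude-opus-4.6`), the `mode` field, and the `pick_model` helper are all hypothetical names chosen for illustration, and none of them are real API identifiers.

```python
from enum import Enum


class Task(Enum):
    """Task categories taken from the review's closing advice (names are illustrative)."""
    QUANTITATIVE_MODELING = "quantitative_modeling"
    TOOL_HEAVY_WORKFLOW = "tool_heavy_workflow"
    WRITING = "writing"
    DECISION_MAKING = "decision_making"


# Hypothetical labels for the two models; these are NOT real API model names.
GPT_5_4 = "chatgpt-5.4"
OPUS_4_6 = "claude-opus-4.6"

# Routing table mirroring the review: 5.4 for quantitative and tool-heavy work,
# Opus for writing and decision-making.
ROUTES = {
    Task.QUANTITATIVE_MODELING: GPT_5_4,
    Task.TOOL_HEAVY_WORKFLOW: GPT_5_4,
    Task.WRITING: OPUS_4_6,
    Task.DECISION_MAKING: OPUS_4_6,
}


def pick_model(task: Task, critical: bool = False) -> dict:
    """Return a suggested model and mode for a task.

    Assumption: when 5.4 is chosen for a critical task, the sketch asks for
    "thinking" mode instead of "auto", following the review's warning about
    auto mode. The "mode" field is a made-up setting, not a real parameter.
    """
    model = ROUTES[task]
    mode = None
    if model == GPT_5_4:
        mode = "thinking" if critical else "auto"
    return {"model": model, "mode": mode}


if __name__ == "__main__":
    print(pick_model(Task.WRITING))
    print(pick_model(Task.QUANTITATIVE_MODELING, critical=True))
```

Keeping the table explicit makes the choice easy to audit and to revise as models change, which fits the video's point that practical fit matters more than benchmark scores.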