The video discusses the paper “GSM-Symbolic,” which investigates the limitations of mathematical reasoning in large language models (LLMs) and introduces a new dataset to better evaluate their performance, revealing significant discrepancies in LLM capabilities across different question variants. It critiques the paper’s conclusions about LLM reasoning, suggesting that the expectations placed on these models may be unfair, as both LLMs and humans exhibit similar challenges in problem-solving.

The paper, “GSM-Symbolic,” comes from researchers at Apple and asks whether LLMs genuinely reason in mathematical contexts or merely perform pattern matching. It also raises concerns about the benchmarks used to evaluate these models, specifically GSM8K, suggesting that some models may have already seen the questions because of training set contamination. The researchers propose a new dataset, GSM-Symbolic, designed to mitigate these issues and better assess LLM performance.

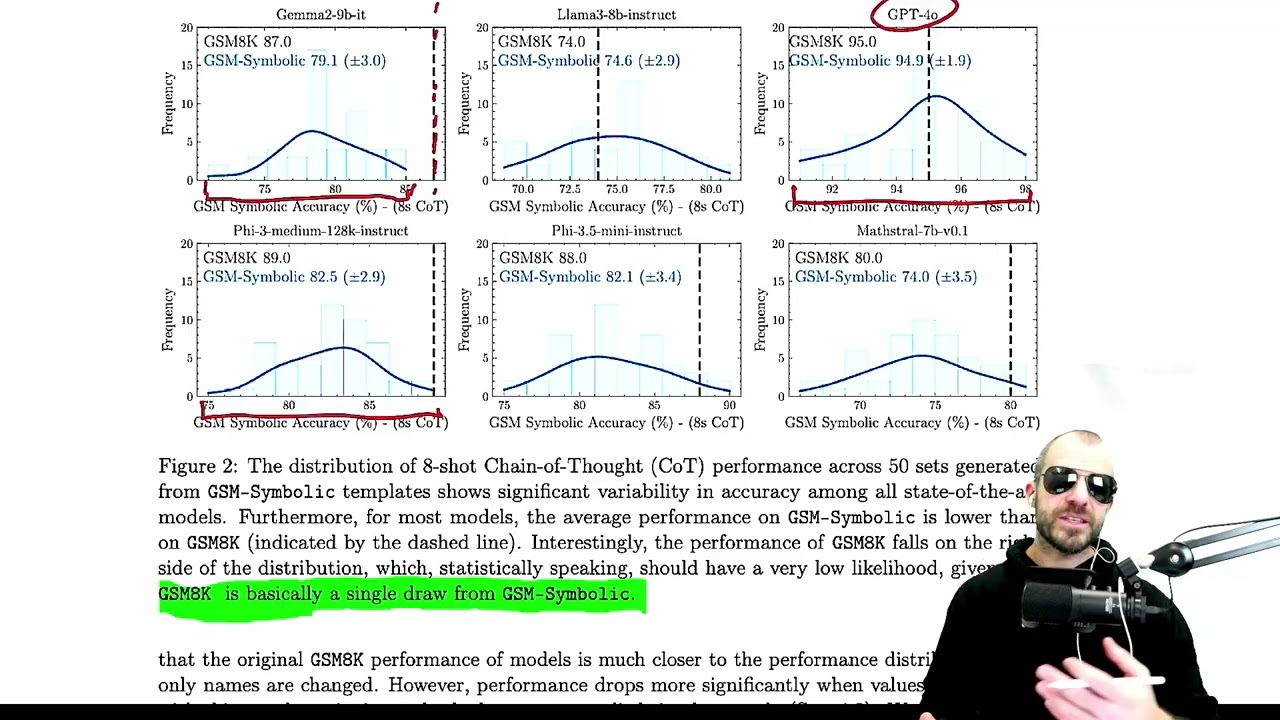

The GSM-Symbolic dataset is constructed by turning existing GSM8K questions into templates, which allows many variants to be generated while the underlying mathematical task stays the same. This approach tests how robust LLMs are to changes in names and numerical values. The video highlights that the dataset includes annotated solutions and processes, making it easier to evaluate the models’ performance on mathematical tasks. The researchers conducted extensive evaluations, revealing significant variance in LLM performance across different dataset variants, with larger models generally performing better than smaller ones.
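The templating idea can be sketched in a few lines of Python. This is only an illustration of the general technique, not the authors’ code: the template text, the name list, the value ranges, and the function names `make_variant` and `make_variants` are all hypothetical.

```python
import random

# Hypothetical template: the wording and slots are illustrative, not taken from the paper.
TEMPLATE = (
    "{name} has {x} apples and buys {y} more. "
    "{name} then gives away {z} apples. How many apples does {name} have left?"
)
NAMES = ["Ava", "Liam", "Noor", "Kenji"]  # assumed pool of substitutable names


def make_variant(rng):
    """Sample one question variant together with its ground-truth answer."""
    name = rng.choice(NAMES)
    x = rng.randint(5, 50)
    y = rng.randint(1, 20)
    # Constraint on the sampled values: cannot give away more apples than are held.
    z = rng.randint(1, x + y)
    question = TEMPLATE.format(name=name, x=x, y=y, z=z)
    answer = x + y - z  # the answer is computed from the same sampled values
    return question, answer


def make_variants(n, seed=0):
    """Generate n variants reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [make_variant(rng) for _ in range(n)]
```

Calling `make_variants(50)` yields fifty questions that differ only in names and numbers, so any change in a model’s accuracy across them cannot be attributed to a change in the underlying task.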

One of the key findings of the paper is that LLMs tend to perform better on the original GSM8K questions than on the generated variants, suggesting that they may have memorized or been trained on the original questions. The video emphasizes that this discrepancy raises questions about the validity of current benchmarks and the need for new evaluation methods. The researchers also examined how LLMs respond to changes in question difficulty, noting that performance drops significantly when additional conditions are introduced, which challenges the notion that LLMs can reason effectively.
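The comparison described here, accuracy on the original questions versus accuracy across generated variant sets, amounts to a mean, a spread, and a gap. The sketch below assumes exact-match scoring and uses hypothetical helper names (`accuracy`, `summarize`); the paper’s actual evaluation protocol may differ.

```python
from statistics import mean, pstdev


def accuracy(predictions, answers):
    """Fraction of exact matches between model predictions and ground-truth answers."""
    if not answers or len(predictions) != len(answers):
        raise ValueError("predictions and answers must be non-empty and of equal length")
    return sum(p == a for p, a in zip(predictions, answers)) / len(answers)


def summarize(per_set_accuracies, original_accuracy):
    """Summarize accuracy over several variant sets against the original benchmark.

    per_set_accuracies: one accuracy value per generated variant set.
    original_accuracy: accuracy on the unmodified benchmark questions.
    """
    avg = mean(per_set_accuracies)
    return {
        "mean": avg,
        "std": pstdev(per_set_accuracies),  # spread across variant sets
        "gap_vs_original": original_accuracy - avg,  # positive if the original is easier
    }
```

A large `std` corresponds to the variance across variants that the paper reports, and a positive `gap_vs_original` corresponds to the pattern the authors read as a sign of possible contamination.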

The video critiques the paper’s conclusions, arguing that the authors fail to adequately define “reasoning” and that their interpretation of LLM performance may not accurately reflect the models’ capabilities. The presenter suggests that humans also struggle with similar mathematical tasks, raising the question of whether the expectations placed on LLMs are fair. The discussion highlights the complexity of reasoning and the potential for both LLMs and humans to exhibit similar limitations in problem-solving.

In conclusion, the video underscores the importance of reevaluating how we assess LLMs and their reasoning abilities. While the GSM-Symbolic paper provides valuable insights into the limitations of LLMs in mathematical contexts, the presenter argues that the conclusions drawn may not fully capture the nuances of reasoning in both machines and humans. The discussion encourages a broader perspective on the capabilities of LLMs and the need for more rigorous evaluation methods that account for the intricacies of reasoning and problem-solving.