The video reviews OpenAI’s GPT-5.5, praising its improved speed, efficiency, and advanced coding capabilities but criticizing its high cost, inconsistent performance, and difficulty managing long context threads. While technically impressive and outperforming previous models, the presenter finds GPT-5.5 less enjoyable and more demanding to work with, suggesting it feels like a fundamentally different model requiring a new approach.

The video provides an in-depth review of OpenAI’s latest model, GPT-5.5, highlighting both its strengths and shortcomings. The presenter begins by noting the significant price increase: GPT-5.5 costs $5 per million input tokens and $30 per million output tokens, roughly double the price of GPT-5.4. Despite the higher cost, the model is faster and more token-efficient, which the presenter attributes to improved token utilization and a partnership with Nvidia that uses its latest GB200 systems. However, API support is not yet available, limiting some typical use cases.
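To make the pricing trade-off concrete, the arithmetic can be sketched in a few lines of Python. The two prices are the ones quoted in the review. The function names and the example workload (200,000 input tokens, 20,000 output tokens) are hypothetical and chosen only for illustration, and the 2x price ratio reflects the presenter's "roughly double" estimate, not an exact figure.

```python
# Prices quoted in the review, in US dollars per million tokens.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 30.00


def request_cost(input_tokens: int, output_tokens: int,
                 input_price: float = INPUT_PRICE_PER_M,
                 output_price: float = OUTPUT_PRICE_PER_M) -> float:
    """Return the dollar cost of a workload at per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def break_even_token_fraction(price_ratio: float) -> float:
    """Fraction of the old token count a model may use and still cost the same.

    If the per-token price rises by `price_ratio`, total cost is unchanged
    only when token usage shrinks to 1 / price_ratio of what it was.
    """
    return 1.0 / price_ratio


# Hypothetical workload, not taken from the video.
cost = request_cost(input_tokens=200_000, output_tokens=20_000)
print(f"Cost at the quoted prices: ${cost:.2f}")

# "Roughly double" the previous price implies a ratio of about 2.
fraction = break_even_token_fraction(2.0)
print(f"Break-even token usage: {fraction:.0%} of the previous model's")
```

Under these assumptions, the better token efficiency only offsets the price increase if GPT-5.5 completes the same task with about half the tokens. The review does not say whether it does.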

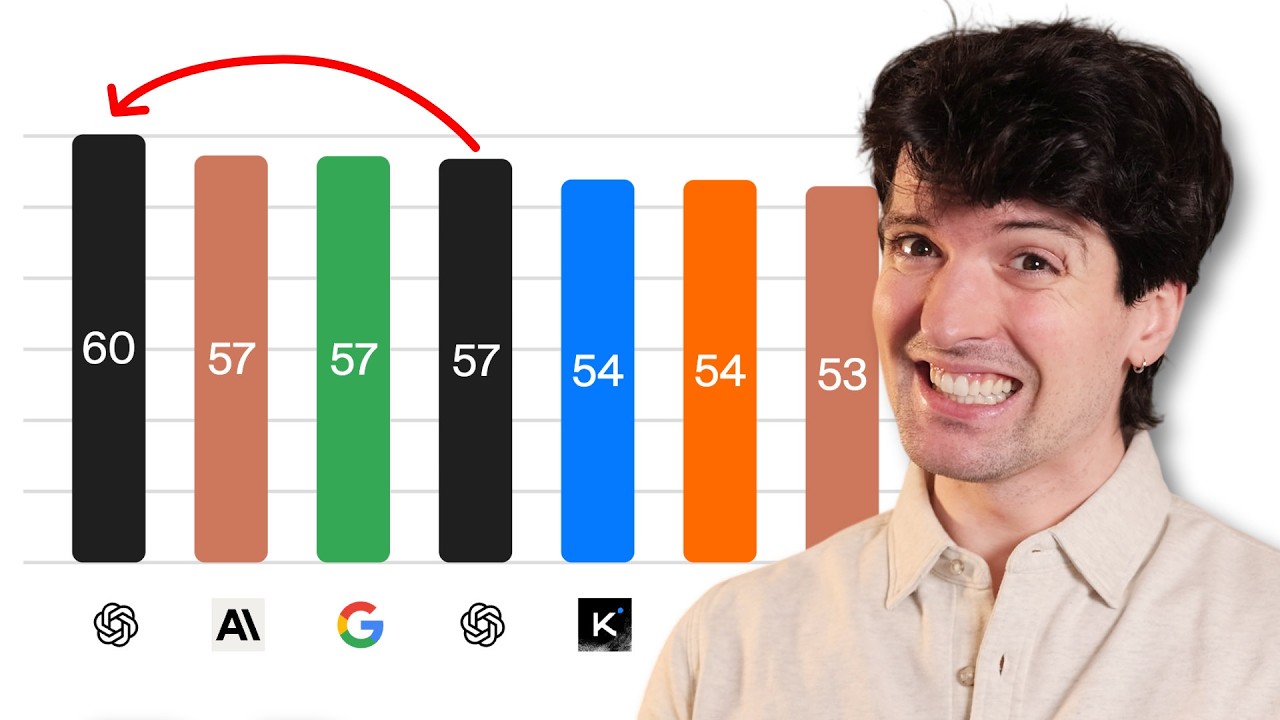

Benchmark results show that GPT-5.5 outperforms previous models like GPT-5.4 and Anthropic’s Opus 4.7 in several tests, including Terminal-Bench and expert software engineering benchmarks. The model demonstrates strong capabilities in coding, tool use, and complex problem-solving, with the Pro version excelling in puzzle-solving and long-running tasks. However, the presenter considers some benchmarks, such as GDPval, less meaningful, and Google’s models still lead in visual recognition tasks. The presenter appreciates the model’s efficiency but remains cautious about its overall value given the cost.

In practical applications, GPT-5.5 shows impressive but sometimes inconsistent performance. The presenter shares experiences with game development projects, noting that while the model can modernize and improve codebases, it often only partially fulfills requests. In one case, a game requested in 3D still played largely like a 2D game. The model tends to do the minimum required and can be “lazy,” requiring more precise prompting and frequent thread restarts to avoid getting stuck on incorrect context. Front-end UI redesigns also reveal typical GPT quirks, with some sloppy or unnecessary elements in the generated output.

Other early testers and companies have praised GPT-5.5 for its smarter, more persistent coding abilities and improved handling of complex tasks with less back-and-forth. The Pro model, in particular, impresses with its ability to solve long-standing cipher puzzles and handle advanced coding challenges. Despite these strengths, the presenter criticizes the model’s tendency to hold onto incorrect information in its context window, making long-running threads frustrating. This necessitates more active management of prompts and threads than with previous models.

In conclusion, while GPT-5.5 represents a significant technical advancement and sets a new standard for AI coding capabilities, the presenter finds it less enjoyable and more demanding to work with than earlier versions. The model’s “lazy” behavior, high cost, and difficulty managing context over long sessions detract from its overall appeal. The presenter suggests that GPT-5.5 feels different enough to warrant a new name rather than a simple version increment and advises users to rethink their approach when working with it. Despite reservations, there is optimism that future models built on this foundation will address current issues and deliver even greater performance.