The video introduces the basics of AI systems built on large language models (LLMs), focusing on practical implementation using Ollama and Python, and emphasizes demystifying AI by treating it as a standard component in the tech stack. Through hands-on demonstrations, the instructor shows how to run LLMs locally and integrate them into web applications, and highlights the importance of understanding their limitations, using them responsibly, and continuing to learn within a supportive tech community.

Summary of the video “Intro to AI LLM Systems with Ollama and Python (Asheville 26.03.17)”:

The session, led by an experienced tech educator, introduces participants to the fundamentals of artificial intelligence (AI) systems, focusing on large language models (LLMs) and practical implementation using Ollama and Python. The instructor begins by sharing his background in technology and education, emphasizing the importance of hands-on, accessible tech learning environments like Silicon Dojo. He highlights the shift from traditional, often impractical academic learning to skills that are directly applicable in the tech industry, and stresses the value of community, networking, and continuous, incremental learning.

A central theme is demystifying AI by presenting it as just another component in a technology stack, rather than a mythical or magical entity. The instructor explains the architecture of AI systems, breaking down the stack into web servers, frameworks, programming languages, LLMs, databases, and APIs. He discusses how LLMs, such as those managed by Ollama, fit into this stack and how they can be integrated into applications using Python. The importance of understanding the limitations and biases of LLMs, which are shaped by their training data, is also addressed, along with the need for thorough testing and validation.
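To make the "just another component" idea concrete, here is a minimal sketch of Python calling a local LLM the same way it would call any other HTTP service. It assumes Ollama is listening on its default local address and uses Ollama's `/api/generate` endpoint; the model name `llama3.2` and the function names are placeholders chosen for illustration, not taken from the class.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if your setup differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_payload(model, prompt):
    """Build the JSON body for a single, non-streaming completion request."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask_llm(prompt, model="llama3.2", url=OLLAMA_URL, timeout=120):
    """Send one prompt to a locally running Ollama server and return its text.

    The model name is a placeholder; use whichever model you have pulled.
    """
    body = json.dumps(build_generate_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        # With "stream": False the server returns one JSON object whose
        # "response" field holds the generated text.
        return json.loads(reply.read().decode("utf-8"))["response"]
```

Calling `ask_llm("Why is the sky blue?")` only works when an Ollama server is running and the chosen model has already been pulled (for example with `ollama pull llama3.2`). From the application's point of view, the LLM is one more service behind a URL, much like a database or a third-party API.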

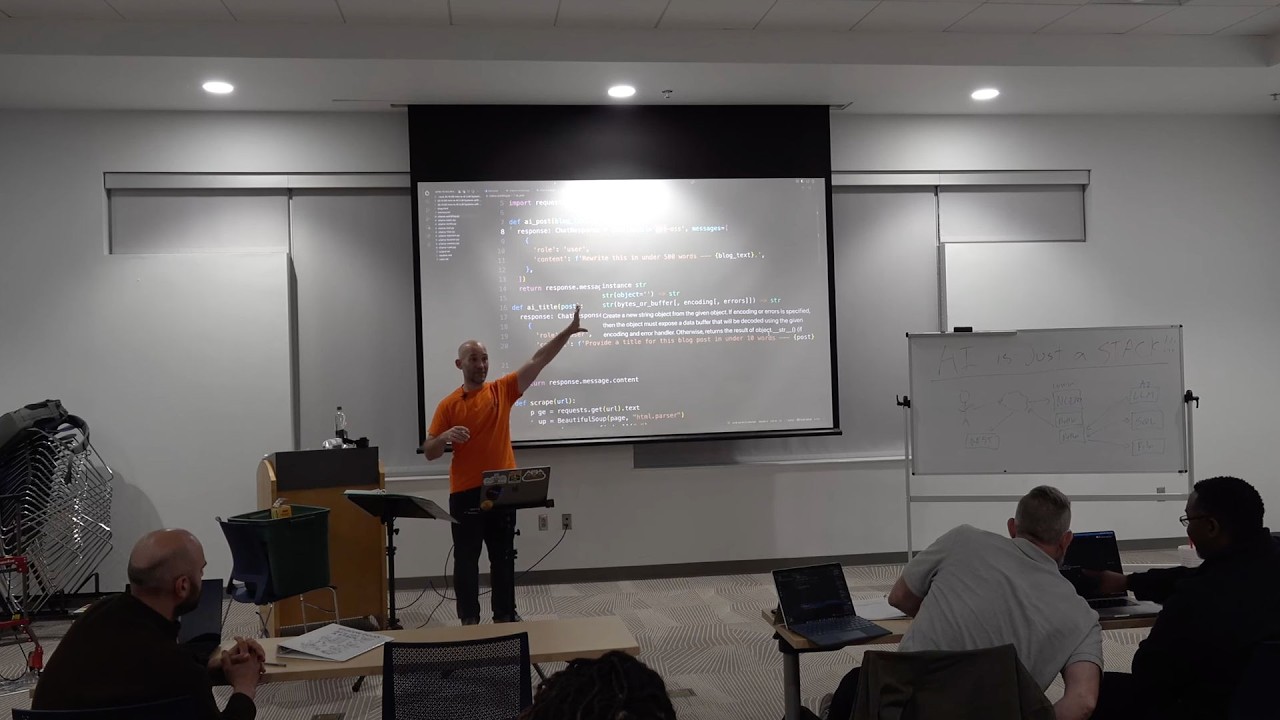

The practical portion of the class covers installing and using Ollama to run LLMs locally, demonstrating how different models (varying in size and capability) can be pulled, run, and interacted with via the command line and Python scripts. The instructor shows how to use Python to send queries to LLMs, handle responses, and implement features such as injecting extra instructions into prompts, rule-based behavior, and dynamic context pulled from REST APIs. He also covers memory management for conversational context and the importance of cleaning up data before feeding it to LLMs (e.g., extracting plain text from scraped web pages) so that processing stays efficient and relevant.
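The ideas in this paragraph (rules, injected context, conversational memory, and cleaned-up input) can be sketched with the standard library alone. This is an illustrative outline, not the instructor's code: `clean_html`, `Conversation`, and `build_chat_payload` are hypothetical names, the standard library's `html.parser` stands in for whatever scraping tools the class used, and the payload shape assumes Ollama's `/api/chat` message format.

```python
import re
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of script and style tags."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def clean_html(html):
    """Reduce a scraped page to plain text with collapsed whitespace."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return re.sub(r"\s+", " ", " ".join(parser.parts)).strip()


class Conversation:
    """Keep fixed rules plus a bounded window of recent messages."""

    def __init__(self, rules, max_messages=8):
        self.rules = rules
        self.max_messages = max(1, max_messages)
        self.history = []

    def add(self, role, content):
        self.history.append({"role": role, "content": content})
        # Drop the oldest messages so the prompt does not grow without bound.
        self.history = self.history[-self.max_messages:]

    def messages(self, context=""):
        """Return the rules (plus optional injected context) and the history."""
        system = self.rules
        if context:
            system += "\n\nContext:\n" + context
        return [{"role": "system", "content": system}] + self.history


def build_chat_payload(model, conversation, context=""):
    """Build a non-streaming request body in Ollama's /api/chat format."""
    return {
        "model": model,
        "messages": conversation.messages(context),
        "stream": False,
    }
```

A typical flow would be to run `clean_html` over a fetched page, pass the result as `context`, and call `add` after each user question and model reply. Trimming by message count is the simplest possible memory policy; trimming by token count or summarizing older turns are alternatives this sketch does not attempt.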

To illustrate real-world applications, the class builds up to creating a simple web application using the Bottle framework, integrating LLM-powered responses into a user-facing interface. The instructor demonstrates how to automate content generation, such as creating blog posts from scraped web articles, and discusses the implications of such automation for the future of online content and misinformation. Throughout, he emphasizes the need for careful architecture, resource management, and awareness of the rapidly evolving AI landscape.
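A rough sketch of the web-facing piece follows. The class used the Bottle framework; to keep this example runnable without installing anything, it is written against the plain WSGI interface that frameworks like Bottle build on, so treat it as a stand-in rather than the instructor's code. `make_app` and `answer_fn` are hypothetical names: `answer_fn` is any function that takes a question string and returns the model's reply.

```python
from html import escape
from urllib.parse import parse_qs

# A deliberately bare form; a real page would use a template.
FORM = (
    "<form method='post'>"
    "<input name='question'>"
    "<button>Ask</button>"
    "</form>"
)


def make_app(answer_fn):
    """Return a WSGI app that answers form questions via answer_fn(question)."""

    def app(environ, start_response):
        page = FORM
        if environ.get("REQUEST_METHOD") == "POST":
            size = int(environ.get("CONTENT_LENGTH") or 0)
            raw = environ["wsgi.input"].read(size).decode("utf-8")
            question = parse_qs(raw).get("question", [""])[0].strip()
            if question:
                # Escape both sides: model output is untrusted text too.
                page += "<p><b>You:</b> " + escape(question) + "</p>"
                page += "<p><b>LLM:</b> " + escape(answer_fn(question)) + "</p>"
        body = page.encode("utf-8")
        start_response("200 OK", [
            ("Content-Type", "text/html; charset=utf-8"),
            ("Content-Length", str(len(body))),
        ])
        return [body]

    return app
```

To try it locally, the app can be served with the standard library's `wsgiref.simple_server.make_server("localhost", 8080, make_app(answer_fn)).serve_forever()`. Passing the LLM call in as a parameter keeps the web layer testable with a stub function, and makes it easy to swap models or backends without touching the request handling.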

The session concludes with a Q&A, local tech community updates, and encouragement for ongoing learning and experimentation. The instructor reiterates that while the individual components of AI systems are relatively straightforward, complexity arises from integrating them effectively. He invites participants to future classes on related topics, such as using the OpenAI API and more advanced web frameworks, and underscores the importance of bridging the gap between technical skills and real-world job requirements through community engagement and practical projects.