The video presents a system that uses multiple AI personas to iteratively evaluate and creatively improve text generated by large language models (LLMs). The personas provide balanced feedback, and the rewriting step is controlled so that the original meaning and length do not change significantly. This approach addresses common grading biases in LLMs and shows consistent quality improvements over successive iterations. The code is available on Patreon for further exploration and customization.

The video introduces a novel creative rating and iterative text improvement system designed to enhance creative writing using large language models (LLMs). The system takes an original piece of text and evaluates it through five distinct AI personas, each offering contextual feedback based on different perspectives. This multi-persona approach aims to provide a more nuanced and balanced assessment of creativity, addressing the common issue where LLMs tend to give flat or overly positive ratings when grading text. The system then uses this feedback to rewrite the text creatively while controlling for length and meaning, iterating through multiple versions to progressively improve the content.

The process begins with either loading predefined personas from a JSON file or dynamically generating them based on the original text’s context. For example, if the text relates to AI research or software architecture, the personas might represent experts in those fields. These personas are intentionally designed to have conflicting viewpoints to prevent uniform scoring and encourage a more critical and diverse evaluation. Each persona generates rubrics and scores the text, and their feedback is aggregated to guide the rewriting phase, which attempts to improve the text without altering its core meaning or significantly changing its length.
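
The persona setup and feedback aggregation described above can be sketched roughly as follows. This is a minimal illustration, not the creator's code: the `Persona` and `Review` classes, the `name`/`viewpoint` JSON fields, the `generate` callback (standing in for an LLM call that invents personas from the text), and the use of a plain mean are all assumptions.

```python
import json
from dataclasses import dataclass
from pathlib import Path
from statistics import mean
from typing import Callable, List, Tuple


@dataclass
class Persona:
    name: str        # hypothetical field, e.g. "AI researcher"
    viewpoint: str   # hypothetical field describing the persona's stance


@dataclass
class Review:
    persona: str
    score: float
    feedback: str


def load_personas(path: str, generate: Callable[[], List[Persona]]) -> List[Persona]:
    """Load personas from a JSON file if it exists, otherwise generate them."""
    file = Path(path)
    if file.exists():
        raw = json.loads(file.read_text(encoding="utf-8"))
        return [Persona(**item) for item in raw]
    # Assumption: `generate` wraps an LLM call that derives personas from the text.
    return generate()


def aggregate(reviews: List[Review]) -> Tuple[float, str]:
    """Combine per-persona reviews into an average score and a feedback digest."""
    average = mean(r.score for r in reviews)
    digest = "\n".join(f"- {r.persona}: {r.feedback}" for r in reviews)
    return average, digest
```

The digest string is what a rewrite prompt could receive, and the average is the number that a stopping rule would compare against a threshold.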

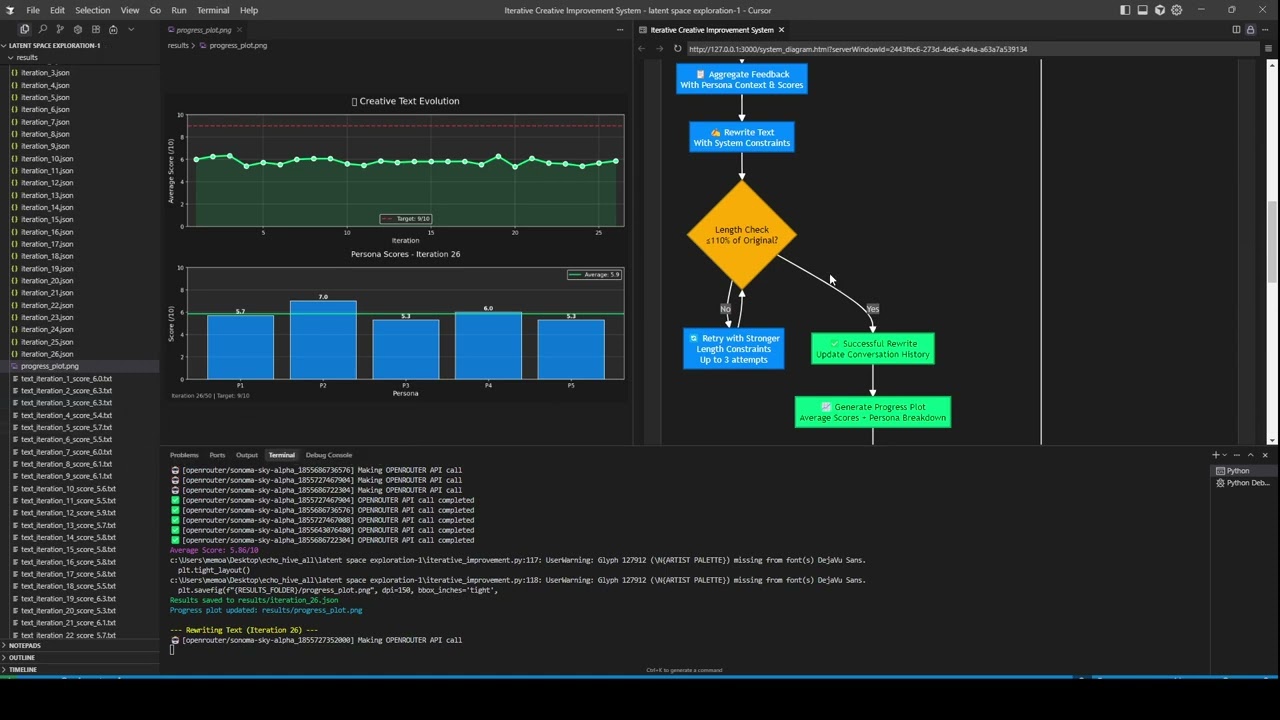

The iterative loop evaluates the rewritten text and checks that it meets the length constraint (within 10% of the original), retrying the rewrite up to three times if necessary. The system tracks progress by saving each iteration and plotting the scores over time. It stops when either a maximum number of iterations is reached or the average score surpasses a predefined threshold, and it preserves the best version of the text. Although the grading still shows some variability and occasional flat ratings, the overall trend is consistent improvement, with later iterations scoring higher than the initial versions.
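
A rough sketch of such a loop is shown below, under several assumptions that the video summary does not confirm: length is measured in characters, the loop gives up if all three rewrite attempts violate the length limit, and `evaluate` and `rewrite` are hypothetical callbacks standing in for the persona scoring and the LLM rewrite. The default iteration count and threshold are placeholders.

```python
from typing import Callable, List, Tuple


def within_length(original: str, candidate: str, tolerance: float = 0.10) -> bool:
    """True if the candidate's length is within `tolerance` of the original's."""
    return abs(len(candidate) - len(original)) <= tolerance * len(original)


def improve(
    text: str,
    evaluate: Callable[[str], Tuple[float, str]],  # returns (average score, feedback)
    rewrite: Callable[[str, str], str],            # takes (current text, feedback)
    max_iterations: int = 5,                       # placeholder default
    threshold: float = 8.0,                        # placeholder default
    max_retries: int = 3,
) -> Tuple[str, float, List[float]]:
    """Iteratively rewrite `text`, keeping the best-scoring version seen so far."""
    score, feedback = evaluate(text)
    best_text, best_score = text, score
    history = [score]  # one score per evaluated version, suitable for plotting
    current = text

    for _ in range(max_iterations):
        if best_score > threshold:
            break
        candidate = None
        for _ in range(max_retries):
            attempt = rewrite(current, feedback)
            if within_length(text, attempt):
                candidate = attempt
                break
        if candidate is None:
            break  # assumption: stop when no attempt satisfies the length limit
        score, feedback = evaluate(candidate)
        history.append(score)
        current = candidate
        if score > best_score:
            best_text, best_score = candidate, score

    return best_text, best_score, history
```

Returning the best version rather than the last one means that a noisy or flat grade in a late iteration cannot discard an earlier, higher-scoring rewrite.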

The creator discusses some challenges encountered, such as the difficulty of achieving highly differentiated grading and the need for better prompt engineering to make the system more effective. Several LLMs were tested, including Sonoma Sky Alpha and GPT-5 mini, with mixed results. The system shows promise but requires further refinement, especially in how the personas are prompted and how their feedback is synthesized. The video also notes that the code and system files will be made available on Patreon, inviting viewers to explore and potentially customize the system for their own creative writing projects.

In conclusion, this iterative LLM creative writing improvement system represents an innovative approach to enhancing text quality by leveraging multiple AI personas for evaluation and controlled rewriting. It balances creativity with constraints on meaning and length, aiming to produce progressively better versions of a given text. The system’s design encourages diverse perspectives and critical feedback, which helps overcome the limitations of single-perspective grading by LLMs. Viewers interested in AI-driven creative writing tools and iterative improvement methods are encouraged to check out the Patreon for access to the code and additional support options.