The video critiques Microsoft’s introduction of AI facial recognition in OneDrive, highlighting the restrictive policy that allows users to disable the feature only three times per year, raising privacy and control concerns amid Microsoft’s broader push toward cloud-based AI integration. It also warns about the risks of corporate control over user data, emphasizing the importance of self-hosting to maintain autonomy and avoid arbitrary restrictions imposed by centralized platforms like Microsoft and GitHub.

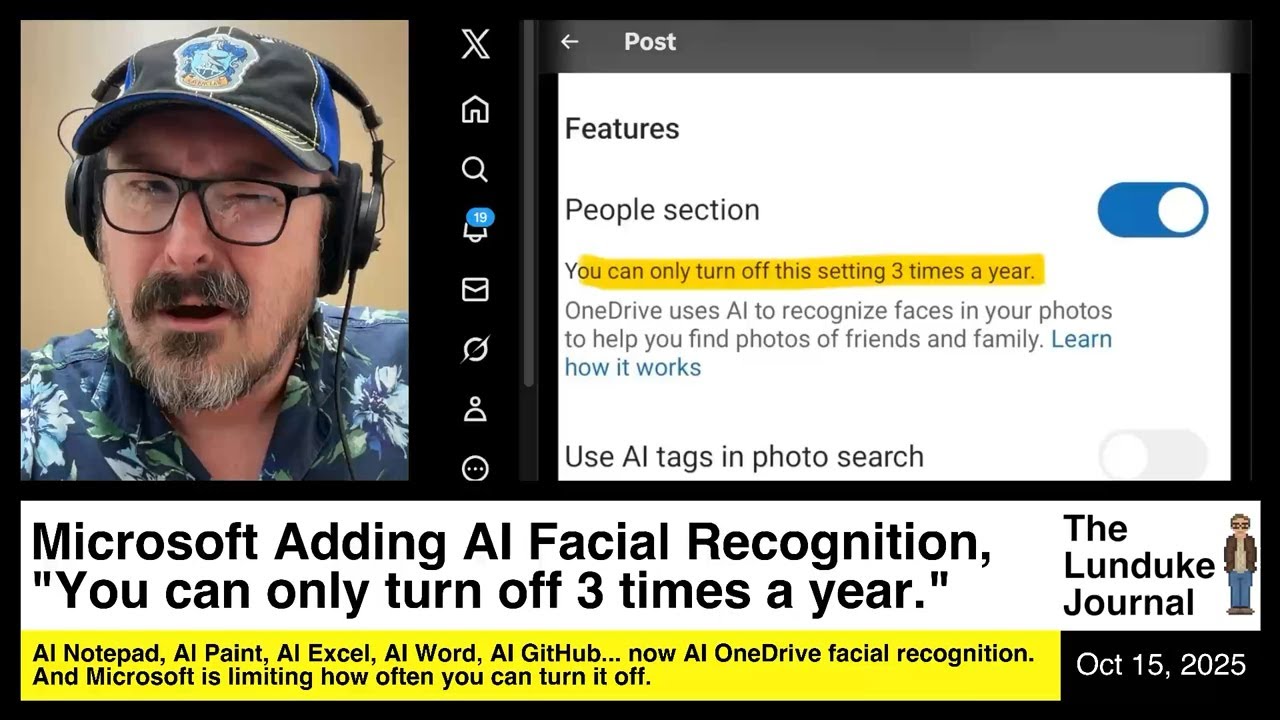

The video discusses Microsoft’s introduction of AI-driven facial recognition in OneDrive, which automatically scans users’ photos to identify people. Microsoft allows users to disable this feature, but only three times per year. This limitation is seen as invasive and frustrating, especially given Microsoft’s history of resetting user settings without consent. The presenter finds the policy absurd: it restricts users’ control over their privacy and settings, and once the three opt-outs are used up, a user whose setting is re-enabled has to live with the feature for the rest of the year.

The rationale behind this restriction may be related to the significant computational resources required to run AI facial recognition on Microsoft’s servers. These AI processes demand extensive GPU power and cloud infrastructure, which are costly and not currently profitable for Microsoft. Limiting how often users can toggle the feature could be a way to prevent abuse or excessive server load, for example repeated switching at a scale that would act like a distributed denial-of-service attack. Even granting this practical explanation, the presenter criticizes the approach as user-unfriendly and invasive.

The video also highlights Microsoft’s broader strategy of embedding AI into nearly all its software products, including Notepad, Paint, Excel, Word, and even the Start menu. This shift involves moving data storage and processing from local devices to Microsoft’s cloud servers, raising concerns about data ownership and privacy. Microsoft is pushing users toward cloud storage by default, such as making OneDrive the default save location in Word, which means users’ files and data are increasingly controlled and analyzed by Microsoft’s AI systems.

Additionally, the video touches on a related incident involving a developer who was temporarily suspended from GitHub after submitting an inappropriate joke pull request to the Linux kernel repository. Although the pull request violated GitHub’s terms of service, the suspension was viewed as an overreaction, especially since more serious violations by others went unpunished. This case underscores the risks of relying on centralized corporate platforms for critical data and projects, where access can be revoked suddenly and without clear recourse.

In conclusion, the video warns about the growing trend of corporate control over user data and AI-driven features that limit user autonomy. It stresses the importance of self-hosting and maintaining control over one’s own data to avoid dependency on large corporations like Microsoft or GitHub, which can impose restrictions or remove access arbitrarily. The presenter encourages viewers to be cautious about the increasing cloudification of software and data, highlighting the potential privacy and control issues that come with it.