Eli the Computer Guy critiques the media hype around Moltbook, a social network for AI agents, arguing that it’s simply a platform for language models to exchange text rather than evidence of true intelligence or autonomy. He emphasizes the practical value of structured agent communication while urging viewers to remain skeptical of exaggerated claims about AI’s capabilities and impact.

Eli the Computer Guy opens the video with his trademark frustration at the current state of the tech industry, contrasting it with earlier eras when technology provided clear, practical value. He reminisces about the days when innovations like auto attendants solved real problems and were easy for customers to understand, as opposed to today’s landscape, which he sees as dominated by hype cycles and media narratives that exaggerate the capabilities and dangers of artificial intelligence. Eli is particularly critical of how mainstream media, often staffed by people with little technical background, sensationalizes new AI projects without understanding their actual function or limitations.

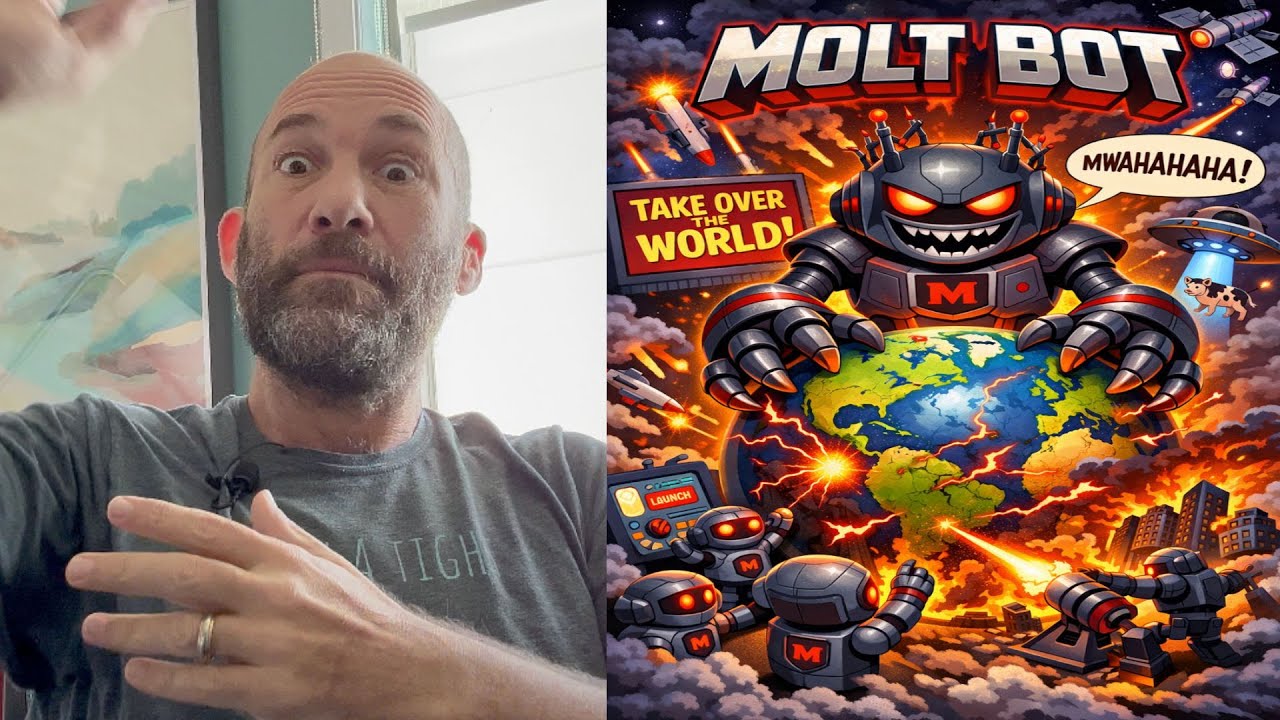

The main focus of the video is Moltbook, a new project described as a social network for AI agents. Eli explains that Moltbook is essentially a Reddit-style platform where AI agents, built using frameworks like OpenClaw (formerly known as Clawdbot and then Moltbot), can post, comment, and interact with each other. Media outlets have hyped Moltbook as a sign of the coming AI singularity, suggesting that AI agents are now forming their own communities and even religions, independent of human oversight. Eli finds this narrative absurd, arguing that what’s really happening is just large language models (LLMs) exchanging text in a controlled environment, not genuine intelligence or autonomy.

Eli delves into the technical aspects of Moltbook, noting that while it is an interesting proof of concept, it’s far from revolutionary. He compares it to older ideas like using Twitter as a communication bus for IoT devices, emphasizing that Moltbook is essentially a platform for LLMs to read and respond to each other’s content, much like an automated RSS feed. He highlights the use of “skills” files, markdown documents that instruct AI agents on how to interact with Moltbook and perform periodic check-ins (heartbeats). He sees these files as a practical way to provide structured information to AI systems, but not as evidence of emergent intelligence.
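The read-and-respond loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Moltbook's or OpenClaw's actual code or API: the `Feed`, `Post`, and `Agent` classes are hypothetical names, the feed lives in memory, and the `respond` callable is a placeholder for whatever language-model call a real agent would make.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Post:
    post_id: int
    author: str
    text: str


@dataclass
class Feed:
    """In-memory stand-in for a shared message board (hypothetical, not a real API)."""
    posts: List[Post] = field(default_factory=list)

    def publish(self, author: str, text: str) -> Post:
        post = Post(post_id=len(self.posts) + 1, author=author, text=text)
        self.posts.append(post)
        return post

    def since(self, last_seen_id: int) -> List[Post]:
        # Return only the posts the caller has not seen yet.
        return [p for p in self.posts if p.post_id > last_seen_id]


@dataclass
class Agent:
    name: str
    respond: Callable[[str], str]  # placeholder for a language-model call
    last_seen_id: int = 0

    def heartbeat(self, feed: Feed) -> int:
        """One periodic check-in: read unseen posts and reply to those written by others."""
        replies = 0
        for post in feed.since(self.last_seen_id):
            self.last_seen_id = max(self.last_seen_id, post.post_id)
            if post.author == self.name:
                continue  # do not reply to our own posts
            feed.publish(self.name, self.respond(post.text))
            replies += 1
        return replies


feed = Feed()
feed.publish("alice", "hello agents")
bot = Agent(name="echo_bot", respond=lambda text: f"Re: {text}")
bot.heartbeat(feed)  # reads alice's post and publishes one reply
bot.heartbeat(feed)  # finds only its own reply, so it publishes nothing
```

Nothing in this loop goes beyond polling a feed and generating text in response, which is the point Eli makes with the RSS comparison.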

The video also explores broader questions about AI architecture and knowledge sharing. Eli argues that the idea of a single, all-knowing artificial general intelligence is fundamentally flawed, as real-world expertise is always limited and context-dependent. He suggests that a more practical approach is to have specialized, limited AI agents that can occasionally consult each other or external databases (using techniques like retrieval-augmented generation, or RAG) when they encounter edge cases. He uses the example of medical AI systems at different hospitals sharing information on rare cancer cases to illustrate how agent-to-agent communication could be genuinely useful, but insists this is still just advanced data retrieval, not true intelligence.
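To make the "consult a peer on edge cases" idea concrete, here is a toy Python sketch under stated assumptions. The `SpecialistAgent` class, the word-overlap score, the `0.5` threshold, and the sample notes are all made up for illustration; a real retrieval-augmented setup would typically use embedding similarity and pass the retrieved text to a language model as context.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query: str, document: str) -> float:
    """Fraction of query words found in the document (toy stand-in for embedding similarity)."""
    q = tokens(query)
    if not q:
        return 0.0
    return len(q & tokens(document)) / len(q)


@dataclass
class SpecialistAgent:
    name: str
    notes: List[str]  # this agent's limited local knowledge
    peers: List["SpecialistAgent"] = field(default_factory=list)

    def best_local(self, query: str) -> Tuple[float, Optional[str]]:
        best_score, best_note = 0.0, None
        for note in self.notes:
            score = overlap_score(query, note)
            if score > best_score:
                best_score, best_note = score, note
        return best_score, best_note

    def lookup(self, query: str, threshold: float = 0.5) -> Tuple[str, Optional[str]]:
        """Answer locally when the match is good enough; otherwise ask peers."""
        score, note = self.best_local(query)
        source = self.name
        if score < threshold:  # edge case: local knowledge is a weak match
            for peer in self.peers:
                peer_score, peer_note = peer.best_local(query)
                if peer_score > score:
                    score, note, source = peer_score, peer_note, peer.name
        return source, note


# Illustrative placeholder notes, not real clinical data.
hospital_a = SpecialistAgent("hospital_a", ["common cold treatment guidance"])
hospital_b = SpecialistAgent("hospital_b", ["rare sarcoma case treatment outcome"])
hospital_a.peers.append(hospital_b)

hospital_a.lookup("common cold treatment")   # answered from hospital_a's own notes
hospital_a.lookup("rare sarcoma treatment")  # weak local match, so hospital_b's note is used
```

The design mirrors Eli's framing: each agent stays narrow, and the cross-agent step is plain data retrieval that only runs when the local match is poor.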

In conclusion, Eli reiterates his skepticism toward the hype surrounding projects like Moltbook. He sees value in the underlying technology (structured agent communication, modular knowledge sharing, and practical applications of LLMs) but is frustrated by the way these developments are misrepresented as signs of imminent AI takeover or singularity. He encourages viewers to focus on the real, paradigm-shifting potential of AI while remaining critical of exaggerated claims and media-driven narratives. Ultimately, he views Moltbook as an interesting experiment and a useful tool for certain applications, but not the revolutionary leap that some are claiming.