DeepSeek OCR introduces a method of compressing the text in an image into a small number of vision tokens. At compression ratios of up to about 10 times, it decodes that text with roughly 97% precision. Because a page can be represented with about one-tenth as many tokens, large language models could in principle fit far more content into their context windows without a proportional increase in computational cost. The approach chains an encoder built from SAM and CLIP with a DeepSeek 3B decoder, and it has prompted speculation that image-based text representation could eventually replace traditional tokenization as the input format for language models.

DeepSeek has released a new paper and model called DeepSeek OCR, which focuses on optical character recognition (OCR), that is, recognizing text in images. OCR itself is not new; what DeepSeek OCR adds is a way to use images as a compressed representation of text for language models. The key result is that text rendered as an image can be encoded in roughly one-tenth as many tokens while still being decoded with around 97% precision. If this holds up, it could substantially increase the amount of text that fits within the limited context window of a large language model.

Currently, one of the biggest limitations of large language models such as Gemini or ChatGPT is the size of the context window: the number of tokens the model can consider at once. Expanding this window is computationally expensive because the cost of self-attention grows quadratically with the number of tokens, so doubling the context roughly quadruples that cost. DeepSeek OCR proposes converting text into images, where each vision token can carry the content of several text tokens. At a 10x compression ratio, a model could in principle handle 10 times more text within the same token budget, expanding its effective context without a proportional increase in computational cost.
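The arithmetic behind this argument can be checked with a few lines of Python. This is a minimal sketch under idealized assumptions: it models only the quadratic self-attention term (one score per pair of tokens), ignores the vision encoder and every layer whose cost grows linearly, and uses a hypothetical 100,000-token document; the function names are illustrative, not from DeepSeek's code.

```python
import math


def attention_cost(num_tokens: int) -> int:
    """Idealized relative cost of full self-attention: one score per token pair, so n * n."""
    return num_tokens * num_tokens


def vision_tokens_needed(text_tokens: int, compression_ratio: float) -> int:
    """Vision tokens needed to carry `text_tokens` at the given compression ratio, rounded up."""
    return math.ceil(text_tokens / compression_ratio)


# Hypothetical document length, chosen only for illustration.
text_tokens = 100_000
vision_tokens = vision_tokens_needed(text_tokens, compression_ratio=10)

# How much smaller the attention term becomes when the same content is
# fed as vision tokens instead of text tokens.
attention_savings = attention_cost(text_tokens) / attention_cost(vision_tokens)

print(f"{text_tokens} text tokens -> {vision_tokens} vision tokens")
print(f"attention cost reduced by a factor of {attention_savings:.0f}")
```

Under these assumptions, 10x compression shrinks the attention term by 100x; equivalently, with the token budget held fixed, the same number of vision tokens carries 10 times as much text. Real end-to-end savings are smaller, because the encoder and the linearly scaling parts of the model still cost something.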

The DeepSeek OCR system takes an image of text, such as a rendered PDF page, and divides it into small patches. The encoder then processes these patches in stages. First, SAM, an 80-million-parameter model, captures local details such as letter shapes. Next, a downsampling step sharply reduces the number of tokens. Then CLIP, a 300-million-parameter model, relates the remaining tokens to one another to capture the page's global context. Finally, DeepSeek 3B, a 3-billion-parameter mixture-of-experts model, decodes these compressed vision tokens back into text. This pipeline lets a page be stored as a compact set of vision tokens and recovered as text, which could enable much larger effective context windows for language models with only a slight increase in latency.
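To make the token flow concrete, here is a minimal Python sketch that tracks only how many tokens each stage produces. The 1024 x 1024 input, 16-pixel patches, and 16x downsampling factor are assumptions chosen for illustration (this article does not state them), and `EncoderConfig` and `trace_token_counts` are hypothetical names. No SAM, CLIP, or DeepSeek weights are involved.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class EncoderConfig:
    # All defaults are assumptions for illustration, not values taken from this article.
    image_size: int = 1024       # side length of the square input page image, in pixels
    patch_size: int = 16         # side length of each square patch, in pixels
    downsample_factor: int = 16  # token reduction applied between the SAM and CLIP stages


def trace_token_counts(cfg: EncoderConfig) -> Dict[str, int]:
    """Follow the number of tokens through the pipeline stages; no model is run."""
    patches_per_side = cfg.image_size // cfg.patch_size
    sam_tokens = patches_per_side ** 2  # SAM stage: one token per patch (local detail)
    clip_tokens = sam_tokens // cfg.downsample_factor  # downsampling before the CLIP stage
    # The DeepSeek 3B decoder receives the CLIP output as its visual input.
    return {"patches": sam_tokens, "after_downsampling": clip_tokens, "decoder_input": clip_tokens}


counts = trace_token_counts(EncoderConfig())
print(counts)

# A hypothetical page holding 2,560 text tokens would then be compressed 10x.
page_text_tokens = 2_560
print(page_text_tokens / counts["decoder_input"])
```

With these assumed numbers, 4,096 patches become 256 tokens before the CLIP stage. Placing the downsampling step before CLIP means the stage that relates tokens to one another globally, and the decoder after it, work on 16 times fewer tokens than the per-patch SAM stage.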

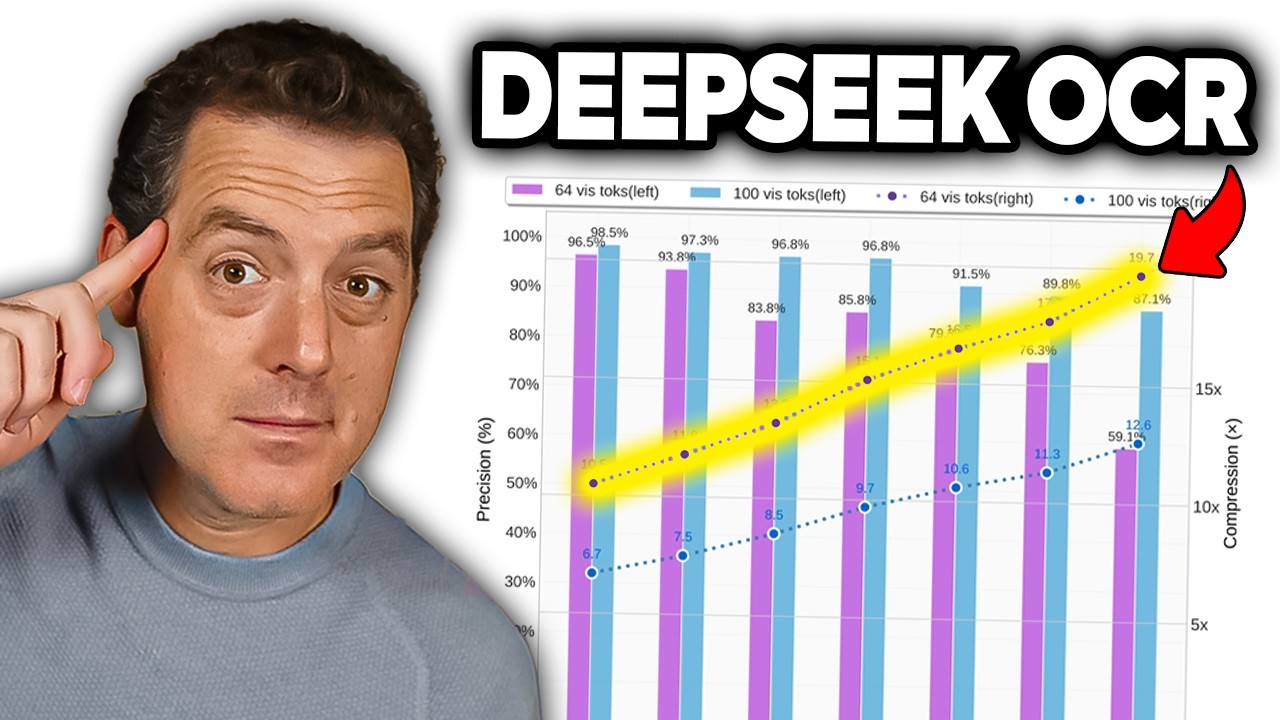

The paper reports strong results: over 96% OCR decoding precision at 9 to 10 times compression, about 90% precision at 10 to 12 times compression, and about 60% precision at 20 times compression. The research has drawn attention from notable figures such as Andrej Karpathy, who has suggested that feeding images rather than text tokens into language models could be both more efficient and more expressive. In his view, this could eliminate the need for tokenizers, allow richer input (such as colored or bold text), and let the model process its input with bidirectional attention rather than the strictly left-to-right attention applied to text tokens, which would change how language models take in information.
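The reported figures can be read as a rough lookup from compression ratio (text tokens divided by vision tokens) to expected decoding precision. The Python sketch below encodes them as a step function. The band edges and percentages come from the numbers above. The function names, the 256-vision-token page, and two lookup rules are assumptions of this illustration, not part of the paper: ratios between 12x and 20x get the pessimistic 20x figure, and ratios below 9x simply reuse the 96% figure.

```python
from typing import List, Optional, Tuple

# (upper edge of compression-ratio band, approximate reported precision),
# transcribed from the figures quoted above.
REPORTED_PRECISION: List[Tuple[float, float]] = [
    (10.0, 0.96),  # 9-10x compression: over 96%
    (12.0, 0.90),  # 10-12x compression: about 90%
    (20.0, 0.60),  # 20x compression: about 60%
]


def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Text tokens represented per vision token."""
    if vision_tokens <= 0:
        raise ValueError("vision_tokens must be positive")
    return text_tokens / vision_tokens


def expected_precision(ratio: float) -> Optional[float]:
    """Coarse lookup: the figure for the first band whose upper edge is >= ratio."""
    for upper_edge, precision in REPORTED_PRECISION:
        if ratio <= upper_edge:
            return precision
    return None  # beyond 20x nothing is reported, so make no estimate


# Hypothetical pages of different lengths, each encoded with 256 vision tokens.
for text_tokens in (2_560, 3_000, 5_120, 8_000):
    ratio = compression_ratio(text_tokens, vision_tokens=256)
    print(f"{ratio:5.1f}x -> {expected_precision(ratio)}")
```

Returning `None` past 20x, rather than extrapolating, keeps the sketch from inventing numbers the paper does not report.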

DeepSeek trained the model on about 30 million pages drawn from diverse PDFs in roughly 100 languages, with a focus on Chinese and English. This large training corpus, combined with the compression approach, makes DeepSeek OCR a promising step toward easing the context-window bottleneck. If the technique generalizes, it could unlock new use cases and increase the effective capacity of existing language models.