NVIDIA has developed an AI technique called PPISP that detects and corrects inconsistencies between photos caused by camera settings like exposure and white balance, resulting in more accurate 3D reconstructions. It models the camera parameters alongside the scene to remove these inconsistencies, though it struggles with modern cameras’ local adjustments. NVIDIA is making the technology freely available.

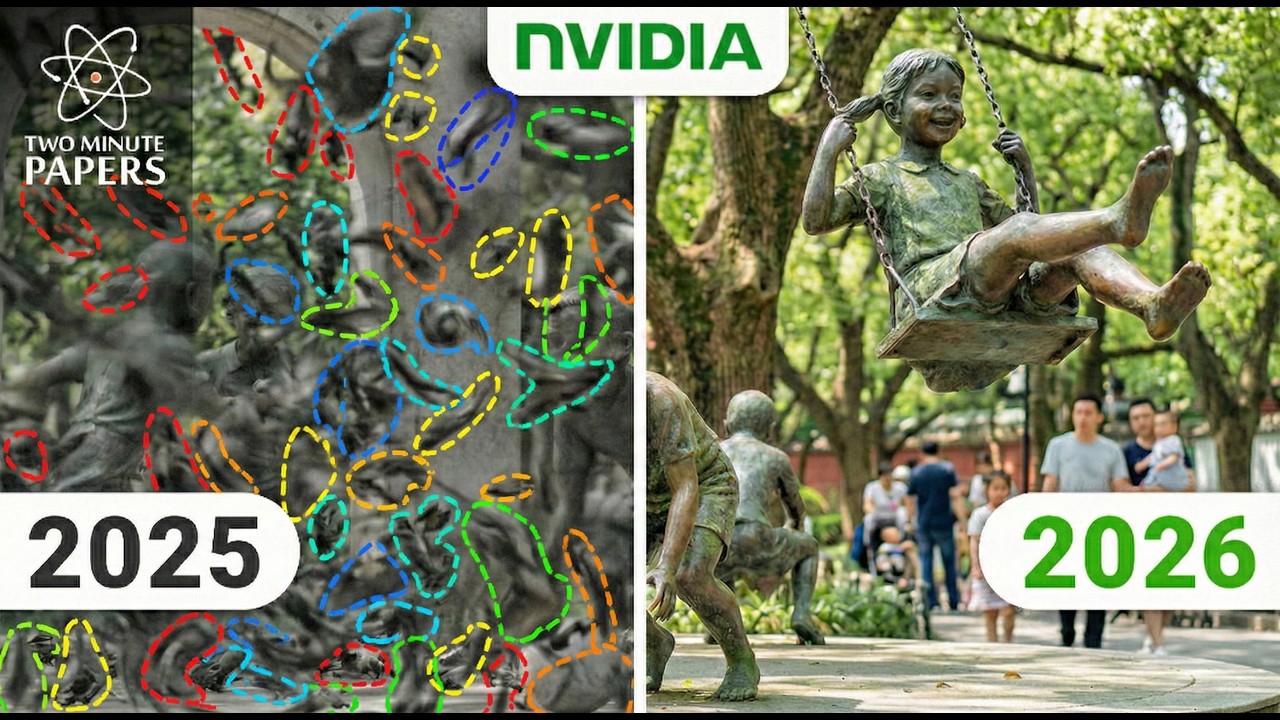

The video discusses NVIDIA’s new AI technique for detecting and correcting misleading information in photos, particularly in the context of 3D reconstruction. Traditionally, when multiple photos of a scene are taken under different lighting conditions or with varying camera settings, reconstruction algorithms can be tricked into thinking objects change color or appearance. This results in ghostly, blurry, or “floater” artifacts in the generated 3D models, making them unreliable for applications like virtual worlds, self-driving car simulations, and video games.

The core issue arises because cameras automatically adjust parameters like exposure and white balance, causing inconsistencies between photos. When these photos are used to reconstruct a scene, the reconstruction algorithm mistakes changes in camera settings for actual changes in the objects themselves. The video humorously compares this to viewing a house through different colored sunglasses each day, leading to confusion about the house’s true color.

NVIDIA’s new technique, called PPISP, acts like a “master detective” by analyzing not just the scene, but also the camera’s settings—essentially “looking at the sunglasses” instead of just the house. The AI identifies and corrects for exposure, white balance, vignetting (darkening at the edges of photos), and the camera’s non-linear response to light. By mathematically reversing these distortions, the AI reconstructs a more accurate and consistent representation of reality, free from the previous ghostly artifacts.
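To make the idea of "mathematically reversing these distortions" concrete, here is a minimal sketch in Python with NumPy. It is not PPISP's actual formulation: the functions `vignette_map`, `apply_camera`, and `undo_camera`, the quadratic vignetting falloff, and the simple gamma curve standing in for the camera's non-linear response are all assumptions chosen for illustration. The sketch also assumes the camera parameters are already known, whereas the summary describes the technique as identifying them.

```python
import numpy as np

def vignette_map(height, width, strength):
    """Radial falloff: 1.0 at the image center, (1 - strength) at the corners."""
    ys, xs = np.mgrid[0:height, 0:width]
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    r2 = ((ys - cy) ** 2 + (xs - cx) ** 2) / (cy ** 2 + cx ** 2)
    return 1.0 - strength * r2

def apply_camera(radiance, exposure, wb_gains, vignette_strength, gamma):
    """Toy forward model: exposure, white balance, vignetting, then a gamma curve."""
    h, w, _ = radiance.shape
    falloff = vignette_map(h, w, vignette_strength)[..., None]
    linear = radiance * exposure * np.asarray(wb_gains) * falloff
    return np.clip(linear, 0.0, 1.0) ** (1.0 / gamma)

def undo_camera(image, exposure, wb_gains, vignette_strength, gamma):
    """Invert the toy model step by step, in reverse order (valid if nothing clipped)."""
    h, w, _ = image.shape
    falloff = vignette_map(h, w, vignette_strength)[..., None]
    linear = image ** gamma
    return linear / (exposure * np.asarray(wb_gains) * falloff)

# Hypothetical scene radiance, kept dark enough that no pixel clips.
scene = np.random.default_rng(0).uniform(0.1, 0.4, size=(4, 6, 3))

# Two "photos" of the same scene taken with different (made-up) settings.
settings_a = dict(exposure=1.0, wb_gains=(1.0, 1.0, 1.0), vignette_strength=0.2, gamma=2.2)
settings_b = dict(exposure=1.5, wb_gains=(1.2, 1.0, 0.8), vignette_strength=0.2, gamma=2.2)
photo_a = apply_camera(scene, **settings_a)
photo_b = apply_camera(scene, **settings_b)

# After undoing each camera's settings, both photos agree on the scene again.
recovered_a = undo_camera(photo_a, **settings_a)
recovered_b = undo_camera(photo_b, **settings_b)
```

In this toy setup the two photos disagree with each other, but the two recovered images agree, which is the "looking at the sunglasses" idea in miniature. Clipped (overexposed) pixels would lose information and could not be recovered this way.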

The video highlights how this approach is similar to how smartphone cameras automatically adjust exposure, but NVIDIA’s method embeds this logic within a neural network. The narrator draws a parallel to life advice, suggesting that just as the AI separates the object’s true color from the camera’s bias, people should strive to separate facts from feelings and recognize their own biases to see the world more clearly.

Despite its impressive capabilities, the technique has limitations. It assumes that the camera applies global adjustments to the entire image, but modern cameras often use local tone mapping, brightening faces or darkening windows independently. Because these local changes don’t fit the global correction model, they can confuse the AI. The video concludes by praising the NVIDIA team for making this technology freely available and encourages viewers to appreciate and share such advancements in human ingenuity.
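The global-adjustment limitation can be illustrated with a small sketch, again in Python with NumPy. It is a simplified stand-in, not the method from the video: the flat gray scene, the half-image brightening used to mimic local tone mapping, and the helpers `best_global_gain` and `residual` are all hypothetical.

```python
import numpy as np

# Hypothetical flat gray scene in linear values.
scene = np.full((4, 6), 0.2)

# Global adjustment: one exposure gain applied to every pixel.
global_photo = scene * 1.5

# Simplified local tone mapping: only the left half is brightened,
# the way a phone might lift a face that sits in shadow.
local_gain = np.ones_like(scene)
local_gain[:, :3] = 2.0
local_photo = scene * local_gain

def best_global_gain(photo, reference):
    """Least-squares single gain g minimizing ||g * reference - photo||."""
    return float((photo * reference).sum() / (reference * reference).sum())

def residual(photo, reference):
    """Largest per-pixel error left after the best single global gain is applied."""
    g = best_global_gain(photo, reference)
    return float(np.abs(g * reference - photo).max())

global_residual = residual(global_photo, scene)  # essentially zero
local_residual = residual(local_photo, scene)    # clearly non-zero
```

A single gain explains the globally adjusted photo perfectly, but no single gain can explain the locally adjusted one, so the leftover error has to be absorbed somewhere, for instance as a spurious change in the scene.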