The Pentagon and AI company Anthropic are in a standoff over the military’s demand for unrestricted access to Anthropic’s technology. Anthropic refuses to grant it without safeguards against misuse, such as mass surveillance and autonomous weapons operating without human oversight. As a deadline nears, the dispute highlights broader concerns about government control versus corporate responsibility in the rapidly advancing field of artificial intelligence.

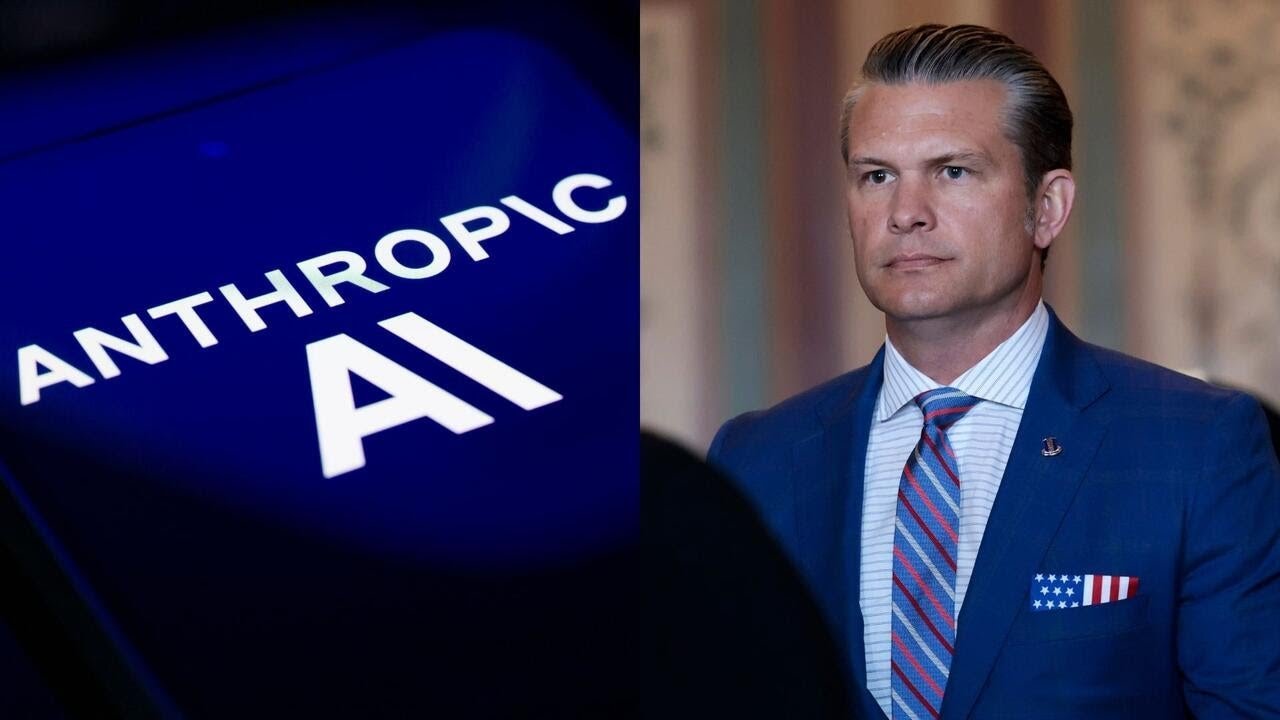

The Department of Defense and Anthropic, a leading artificial intelligence company known for its AI assistant Claude, are in a tense standoff over access to Anthropic’s technology. The Pentagon, under Defense Secretary Pete Hegseth, has set a strict deadline for Anthropic to comply with its demands or risk losing valuable government contracts. The core of the dispute is the Pentagon’s desire for unrestricted access to Anthropic’s AI, which the company refuses to grant without safeguards to prevent potential misuse.

Anthropic’s CEO, Dario Amodei, has made it clear that the company will not allow access to its technology unless there are explicit protections against abuse, particularly regarding mass surveillance of Americans and the use of AI-enabled autonomous weapons without human oversight. Despite pressure from the Pentagon, including threats to revoke contracts or seize the technology under the Defense Production Act, Amodei remains firm in his stance, stating that these threats do not alter the company’s position.

The Pentagon argues that it cannot allow a private company to dictate the rules and policies governing military technology. In response to Anthropic’s concerns, Under Secretary of Defense Emil Michael claims the military has offered written concessions acknowledging existing privacy laws and Pentagon policies on autonomous weapons. However, Amodei disputes that any meaningful concessions have been made, maintaining that the company’s ethical concerns have not been addressed.

The conflict intensified following a recent military operation involving the capture of Venezuelan President Nicolás Maduro, in which Anthropic’s Claude played a significant role. This event highlighted the power and potential risks of advanced AI in military applications. Anthropic insists on maintaining restrictions to prevent its technology from being used in ways that could threaten civil liberties or enable unchecked military power.

Observers note that the standoff raises broader questions about the balance of power between private tech companies and government agencies, especially as AI becomes increasingly influential. Former Anthropic employee Jeffrey Ladish points out that while it is reasonable for the government to be wary of private companies controlling critical technology, it is equally reasonable for companies to be cautious about government intentions. As the deadline approaches, negotiations continue, with Anthropic urging the Pentagon to accept its proposed safeguards if it truly does not intend to misuse the technology.