The video explains how the Red-Green-Refactor cycle from Test-Driven Development (TDD) is especially effective when working with AI coding agents like Claude Code, as it ensures each feature is properly tested, implemented, and refactored for quality. The speaker recommends writing and implementing one test at a time to maintain focus and code quality, emphasizing that strong feedback loops are crucial for reliable AI-assisted software development.

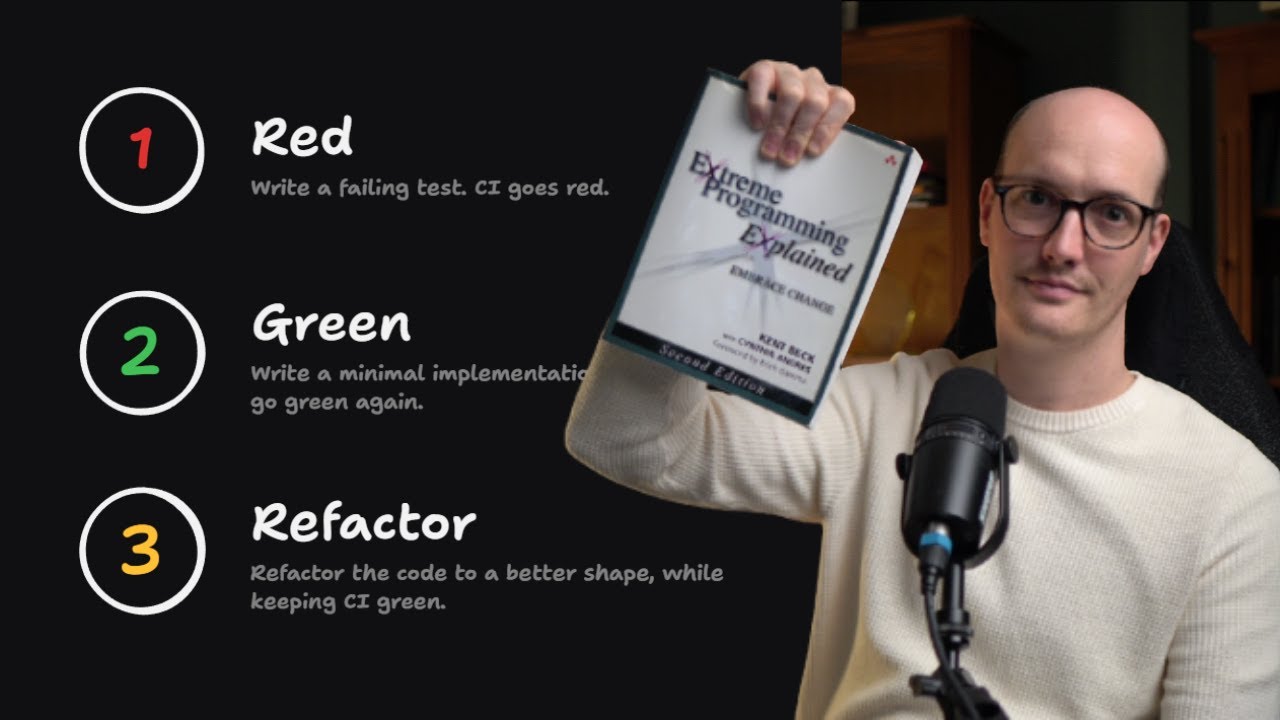

The video discusses how the classic software development practice known as “Red-Green-Refactor,” a core part of Test-Driven Development (TDD), is especially effective when working with coding agents like Claude Code. TDD, popularized by Kent Beck in the context of Extreme Programming (XP), revolves around writing automated tests before implementing features. The process begins by writing a failing test (red), then writing just enough code to make the test pass (green), and finally refactoring the code for clarity and maintainability. This disciplined approach ensures that every piece of functionality is both tested and thoughtfully implemented.

In the “red” phase, the developer intentionally writes a test that fails, so the continuous integration (CI) system shows a red status. This step is crucial because it verifies that the test is meaningful and that the feature or behavior being tested does not yet exist. Only after confirming that the test fails does the developer (or coding agent) proceed to the “green” phase, writing the minimal code necessary to make the test pass. This ensures that the implementation is directly guided by the requirements expressed in the test.
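As a minimal sketch of the red and green phases, the Python example below runs a single test twice: first against a stub (red), then against the smallest implementation that satisfies it (green). The `slugify` function, its expected behavior, and the helper names `make_suite` and `passes` are hypothetical and chosen only for illustration; the video does not prescribe a particular language or test framework.

```python
import unittest


def make_suite(slugify):
    """Build a one-test suite bound to a given `slugify` implementation."""

    class SlugifyTest(unittest.TestCase):
        def test_replaces_spaces_with_hyphens(self):
            self.assertEqual(slugify("Hello World"), "hello-world")

    return unittest.defaultTestLoader.loadTestsFromTestCase(SlugifyTest)


def passes(slugify):
    """Run the suite and report whether every test succeeded."""
    result = unittest.TestResult()
    make_suite(slugify).run(result)
    return result.wasSuccessful()


# Red: the feature does not exist yet, so the test must fail.
def slugify_stub(text):
    raise NotImplementedError("slugify is not implemented yet")


# Green: just enough code to satisfy the one test, nothing more.
def slugify_minimal(text):
    return text.lower().replace(" ", "-")


red = passes(slugify_stub)       # expected to be False (red)
green = passes(slugify_minimal)  # expected to be True (green)
print("red phase passed?", red)
print("green phase passed?", green)
```

Confirming the failure first is the point of the exercise: if `passes(slugify_stub)` had returned `True`, the test would not actually be checking the new behavior.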

Once the code passes the test and the CI turns green, the “refactor” phase begins. Here, the developer can safely improve the structure and readability of the code, knowing that the tests will catch any accidental breakages. This cycle of red, green, and refactor creates a tight feedback loop, which is particularly valuable when using AI coding agents. The speaker emphasizes that seeing an agent follow this process builds confidence in the quality of the generated code, as it demonstrates that the agent is not simply generating code to pass arbitrary tests but is genuinely implementing the required functionality.
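The refactor step can be sketched in the same spirit. In the illustrative Python example below, a working but clumsy "green" implementation is rewritten for readability, and the same checks are run against both versions to confirm that behavior did not change. The function names and the `(price, quantity)` data shape are assumptions made for this example only.

```python
def total_green(items):
    """First passing version: correct, but index-heavy and hard to read."""
    total = 0
    for i in range(len(items)):
        total = total + items[i][0] * items[i][1]
    return total


def total_refactored(items):
    """Refactored version: same behavior, clearer intent."""
    return sum(price * quantity for price, quantity in items)


def check(total):
    """The tests that guard the refactor; each item is (price, quantity)."""
    assert total([]) == 0
    assert total([(2, 3), (5, 1)]) == 11


# The same tests run before and after the refactor. If the rewrite had
# changed behavior, the second call would raise an AssertionError.
check(total_green)
check(total_refactored)
print("refactor preserved behavior")
```

Only the structure of the code changes here; the tests stay untouched, which is what makes the rewrite safe.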

A key recommendation from the video is to have the agent write and implement one test at a time, rather than generating a large batch of tests and then attempting to implement all features at once. Large batches can lead to low-quality or redundant tests and make it harder to maintain focus on the specific behavior being developed. By iterating test by test, the agent produces more meaningful tests that directly guide the implementation, resulting in higher-quality code and a more maintainable codebase.

Finally, the speaker highlights the importance of strong feedback loops and code quality in the age of AI-assisted development. Since AI models tend to replicate the patterns they see, maintaining a clean and well-tested codebase is more critical than ever. The video concludes with an invitation to check out the speaker’s newsletter and upcoming Claude Code course, emphasizing that mastering practices like Red-Green-Refactor is essential for anyone working with AI coding agents to ensure robust and reliable software.