The video discusses a research paper on optimizing test-time compute for large language models (LLMs) to improve performance on tasks such as high school math questions. It emphasizes strategies like sampling multiple answers and scoring them with a verifier model. The findings suggest that optimizing test-time compute can be more effective than scaling model parameters on easier problems, whereas investing in pre-training is generally better for harder ones. The best approach therefore adapts to problem difficulty and compute budget.

The paper, titled “Scaling LLM Test-Time Compute Optimally Can Be More Effective than Scaling Model Parameters,” is a collaboration between Google DeepMind and UC Berkeley. It investigates how to allocate computational resources at test time to improve LLM performance on specific tasks, focusing on a benchmark of high school math questions. The central question is how additional compute during inference can improve the accuracy of the model’s outputs, especially as problems become harder.

The authors explore several strategies for optimizing test-time compute, such as sampling multiple answers to the same prompt and selecting the most frequent or highest-scoring response. They also examine chain-of-thought prompting, in which the model is guided to develop a plan before generating an answer. The paper emphasizes the importance of a verifier model that assesses the correctness of the model’s outputs, since it is often easier for a model to evaluate an answer than to generate a correct one. This verifier is trained specifically on the math dataset used in the experiments.
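The two selection rules described above, picking the most frequent answer or the highest-scoring one, can be sketched in Python as follows. This is a minimal illustration, not the paper’s implementation: `sample` and `verify` are hypothetical stand-ins for an LLM and a trained verifier, and answers are assumed to be already-extracted final-answer strings.

```python
import itertools
from collections import Counter
from typing import Callable

# Hypothetical interfaces: a real system would call an LLM and a trained
# verifier here. Both are modeled as plain callables for illustration.
Sampler = Callable[[str], str]          # prompt -> one sampled final answer
Verifier = Callable[[str, str], float]  # (prompt, answer) -> correctness score


def majority_vote(prompt: str, sample: Sampler, n: int) -> str:
    """Draw n answers for the same prompt and return the most frequent one."""
    answers = [sample(prompt) for _ in range(n)]
    return Counter(answers).most_common(1)[0][0]


def best_of_n(prompt: str, sample: Sampler, verify: Verifier, n: int) -> str:
    """Draw n answers and return the one the verifier scores highest."""
    answers = [sample(prompt) for _ in range(n)]
    return max(answers, key=lambda answer: verify(prompt, answer))


if __name__ == "__main__":
    # Toy sampler that cycles through fixed answers instead of calling a model.
    fake_answers = itertools.cycle(["12", "12", "15", "12", "15"])

    def sample(prompt: str) -> str:
        return next(fake_answers)

    # Toy verifier that happens to prefer "15".
    def verify(prompt: str, answer: str) -> float:
        return 0.9 if answer == "15" else 0.4

    print(majority_vote("What is x?", sample, n=5))      # 12
    print(best_of_n("What is x?", sample, verify, n=5))  # 15
```

The two rules can disagree: majority voting rewards agreement across samples, while best-of-N trusts the verifier, so a less frequent answer can win if the verifier rates it highly.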

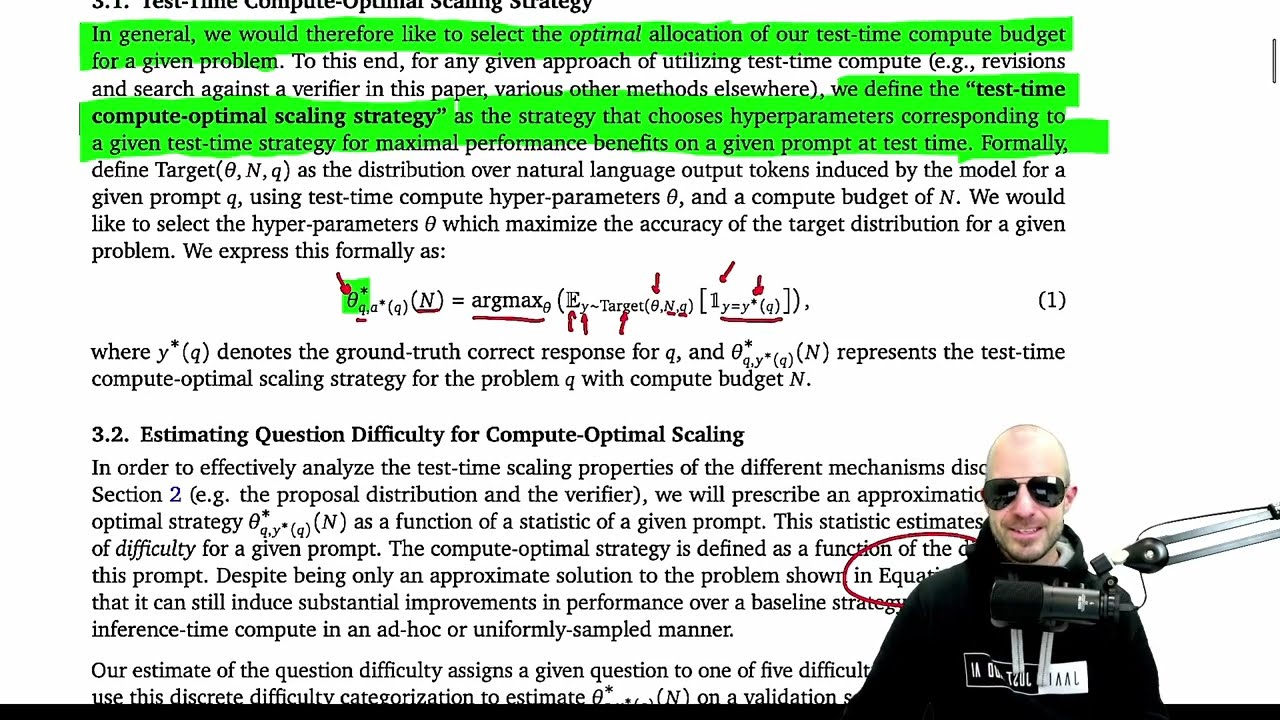

The research introduces a taxonomy for modifying an LLM’s output distribution at test time, distinguishing input-level modifications (changing the prompt) from output-level modifications (sampling multiple candidates). The authors run extensive experiments comparing methods such as best-of-N sampling, beam search, and look-ahead search to determine which strategies perform best under different compute budgets. Some methods, such as best-of-N weighted by the verifier’s scores, outperform others, while the more complex look-ahead search tends to underperform.
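Weighted best-of-N and verifier-guided beam search can be sketched as below. The sketch assumes a verifier that can score partial solutions step by step; `propose` and `score` are hypothetical callables, the search runs for a fixed number of steps with no early stopping for finished solutions, and look-ahead search (which, as usually described, rolls each candidate step further forward before scoring it) is not shown.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Sequence

Prefix = List[str]  # a partial solution, represented as a list of reasoning steps


def weighted_best_of_n(answers: Sequence[str], scores: Sequence[float]) -> str:
    """Sum verifier scores over samples that reach the same final answer and
    return the answer with the largest total score."""
    totals: Dict[str, float] = defaultdict(float)
    for answer, score in zip(answers, scores):
        totals[answer] += score
    return max(totals, key=lambda answer: totals[answer])


def beam_search(
    propose: Callable[[Prefix, int], List[str]],  # hypothetical: (prefix, k) -> k next steps
    score: Callable[[Prefix], float],             # hypothetical step-level verifier
    beam_width: int,
    expansions: int,
    max_steps: int,
) -> Prefix:
    """Grow solutions one step at a time, keeping only the highest-scoring
    partial solutions after each step."""
    beams: List[Prefix] = [[]]
    for _ in range(max_steps):
        # Expand every surviving prefix with several candidate next steps.
        candidates = [beam + [step] for beam in beams for step in propose(beam, expansions)]
        # Keep the beam_width prefixes the verifier rates highest.
        candidates.sort(key=score, reverse=True)
        beams = candidates[:beam_width]
    return beams[0]


if __name__ == "__main__":
    # Plain best-of-N would pick "15" (highest single score); the weighted
    # version picks "12" because two samples agree on it.
    print(weighted_best_of_n(["12", "15", "12"], [0.3, 0.5, 0.3]))  # 12
    # Toy proposer and verifier: every prefix can be extended with "a" or "b",
    # and the verifier simply counts the "b" steps.
    best = beam_search(
        propose=lambda prefix, k: ["a", "b"][:k],
        score=lambda prefix: prefix.count("b"),
        beam_width=2,
        expansions=2,
        max_steps=3,
    )
    print(best)  # ['b', 'b', 'b']
```

Weighting pools evidence across samples that agree, whereas beam search spends compute on pruning weak partial solutions early, which is why the two behave differently as the budget changes.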

The paper also examines how the difficulty of math problems affects which compute strategy works best. It finds that the model’s perception of difficulty can differ from human assessments, so difficulty is measured relative to the model itself (from how often its sampled answers are correct), and the best-performing strategy changes from one difficulty level to another. The authors conclude that there is no one-size-fits-all approach: the optimal strategy depends on the specific problem, the compute budget, and the difficulty level. They suggest that choosing a strategy dynamically based on these factors can improve performance.
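One way to make the strategy choice difficulty-aware is sketched below, under two assumptions: each question’s difficulty is estimated from the fraction of the model’s sampled answers that are correct, and questions are split into five equal-sized bins (an illustrative choice). The per-bin accuracy table is something you would measure yourself on held-out questions; the strategy names and numbers in the demo are placeholders, not results from the paper.

```python
from typing import Dict, List, Sequence


def difficulty_bins(pass_rates: Sequence[float], num_bins: int = 5) -> List[int]:
    """Assign each question to a bin by how often the model solves it.

    Bin 0 holds the questions with the highest pass rates (easiest for the
    model); bin num_bins - 1 holds the lowest (hardest for the model).
    """
    order = sorted(range(len(pass_rates)), key=lambda i: pass_rates[i], reverse=True)
    bins = [0] * len(pass_rates)
    for rank, question in enumerate(order):
        bins[question] = rank * num_bins // len(pass_rates)
    return bins


def best_strategy_per_bin(accuracy: Dict[str, Dict[int, float]]) -> Dict[int, str]:
    """Given accuracy[strategy][bin] measured at one fixed compute budget,
    pick the strategy with the highest accuracy in each bin."""
    all_bins = sorted({b for per_bin in accuracy.values() for b in per_bin})
    return {
        b: max(accuracy, key=lambda s: accuracy[s].get(b, 0.0))
        for b in all_bins
    }


if __name__ == "__main__":
    # Fraction of sampled answers that were correct, per question (toy values).
    print(difficulty_bins([0.9, 0.1, 0.5, 0.7, 0.3]))  # [0, 4, 2, 1, 3]
    # Illustrative accuracies only; these are not results from the paper.
    table = {
        "strategy_a": {0: 0.9, 4: 0.1},
        "strategy_b": {0: 0.8, 4: 0.2},
    }
    print(best_strategy_per_bin(table))  # {0: 'strategy_a', 4: 'strategy_b'}
```

At deployment time the true pass rate of a new question is unknown, so its difficulty would have to be predicted (for example, from verifier scores) before looking up a strategy; that step is not shown here.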

Finally, the video highlights the paper’s findings on the trade-off between spending compute on pre-training and spending it at test time. The authors argue that when the inference load is low (relatively few inference tokens compared with pre-training tokens), optimizing test-time compute on easier questions can yield better results than scaling model parameters. For higher inference loads or more difficult questions, however, investing in pre-training is generally more effective. The video concludes by noting the paper’s transparency and thoroughness in reporting results, while cautioning that the findings may not generalize beyond the specific dataset and methods used in the research.
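The inference-load argument can be made concrete with a back-of-the-envelope FLOPs calculation. The sketch below assumes the widely used approximations of about 6·N·D FLOPs to pre-train a model with N parameters on D tokens and about 2·N·D FLOPs to run inference over D tokens; the function name and example values are hypothetical.

```python
def matched_test_time_multiplier(m: float, pretrain_tokens: float, inference_tokens: float) -> float:
    """Factor k by which a smaller model can scale its inference tokens so that
    its total FLOPs match those of a model with m times as many parameters.

    Uses common approximations (not exact costs):
      pre-training FLOPs ~ 6 * N * D_pretrain
      inference FLOPs    ~ 2 * N * D_inference
    Setting m * (6*N*D_p + 2*N*D_i) == 6*N*D_p + 2*N*k*D_i and solving for k
    gives k = m + 3 * (m - 1) * D_p / D_i. The parameter count N cancels out.
    """
    return m + 3.0 * (m - 1.0) * pretrain_tokens / inference_tokens


if __name__ == "__main__":
    # Example: compare against a model 14x larger, with 1e12 pre-training tokens.
    print(matched_test_time_multiplier(14, 1e12, 1e12))  # 53.0  (high inference load)
    print(matched_test_time_multiplier(14, 1e12, 1e11))  # 404.0 (low inference load)
```

The smaller the inference load relative to pre-training, the larger k becomes, so the smaller model can spend many more tokens per query at test time for the same total compute. This is one way to see why test-time compute looks more attractive when inference load is low.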