The lecture reviews key transformer concepts including self-attention, position embeddings (sinusoidal and rotary), and normalization techniques (pre-layer and RMS normalization), alongside efficiency improvements like local attention and multi-query attention. It also explores transformer-based model families such as BERT, highlighting their architectures, training objectives, and subsequent advancements for improved performance and efficiency in NLP tasks.

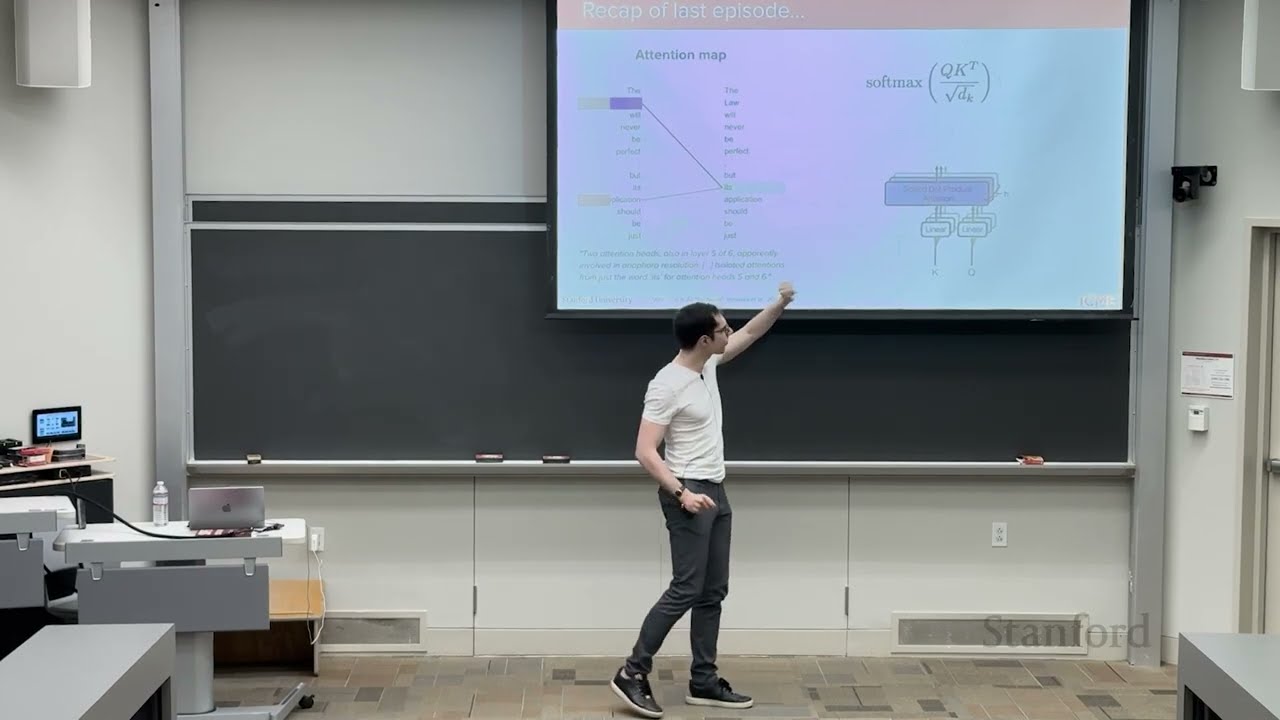

The lecture begins with a brief recap of the foundational concepts introduced in the first lecture, focusing on the self-attention mechanism in transformers. Self-attention allows each token in a sequence to attend to all other tokens, using queries, keys, and values to compute attention weights through optimized matrix multiplications. The transformer architecture consists of an encoder and a decoder, originally designed for machine translation tasks. The multi-head attention mechanism enables the model to learn different projections of the input, with each head capturing distinct relationships between tokens. Attention maps help visualize which tokens are most relevant to others, illustrating how the model associates related words in context.
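The query/key/value computation described above can be sketched for a single head as follows. This is a readability-first illustration written with plain Python loops, not the optimized matrix-multiplication form the lecture refers to; the function names `softmax` and `attention` are chosen here for illustration.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention for one head.

    Q, K, V are lists of row vectors (one row per token).
    Returns the output rows and the attention weights.
    """
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value rows.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, V)) for c in range(len(V[0]))])
    return outputs, weights
```

Each row of `weights` sums to 1 and corresponds to one row of an attention map: it shows how strongly that token attends to every token in the sequence.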

A significant portion of the lecture is dedicated to position embeddings, which compensate for the fact that self-attention on its own is insensitive to token order. One option is learned position embeddings added to token embeddings, but this approach has limitations, such as a fixed maximum sequence length and potential overfitting to the positions seen in training. The original transformer paper considered this option but used fixed sinusoidal position embeddings, built from sine and cosine functions of different frequencies, which make relative offsets easy to express and may generalize better to longer sequences. Modern models often use rotary position embeddings (RoPE), which rotate query and key vectors by angles that depend on token position, so that the query-key dot product depends on the relative distance between tokens directly inside the attention mechanism. This method has become popular due to its mathematical properties and practical performance.
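A minimal sketch of both schemes, assuming an even embedding dimension, the commonly used base of 10000, and the interleaved pairing of dimensions (real implementations differ in how they pair dimensions). The helper names are hypothetical.

```python
import math

def sinusoidal_embedding(pos, d_model, base=10000.0):
    """Fixed sinusoidal embedding for one position (d_model assumed even)."""
    pe = []
    for i in range(0, d_model, 2):
        angle = pos / base ** (i / d_model)
        pe.extend([math.sin(angle), math.cos(angle)])
    return pe

def rope(vec, pos, base=10000.0):
    """Rotate consecutive pairs of vec by position-dependent angles."""
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = pos / base ** (i / d)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        # 2D rotation of the pair (x, y) by angle theta.
        out.extend([x * c - y * s, x * s + y * c])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]
# Both pairs of positions are 2 apart, so the two scores should match.
score_near = dot(rope(q, 5), rope(k, 3))
score_far = dot(rope(q, 12), rope(k, 10))
```

Because each pair is rotated rather than shifted, the score between a rotated query and a rotated key depends only on the difference between their positions, which is the relative-distance property described above.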

The lecture also covers changes in normalization techniques within transformer architectures. The original transformer used post-layer normalization, where normalization is applied after the sub-layer output has been added to its input. Contemporary models prefer pre-layer normalization, which normalizes the input to each sub-layer and leaves the residual path untouched, improving training stability and convergence. Additionally, RMS normalization, a variant that rescales activations by their root mean square without subtracting the mean and therefore learns fewer parameters, has gained traction for its efficiency. These normalization strategies help mitigate issues like internal covariate shift, ensuring more stable and effective training of deep transformer models.
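The difference between the two placements, and between layer normalization and RMS normalization, can be sketched on a single activation vector. The `sublayer` argument stands in for attention or a feed-forward network, and the `eps` value is an assumption.

```python
import math

def layer_norm(x, gain, bias, eps=1e-6):
    """Subtract the mean, divide by the standard deviation, then scale and shift."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [g * (v - mean) / math.sqrt(var + eps) + b for v, g, b in zip(x, gain, bias)]

def rms_norm(x, gain, eps=1e-6):
    """Divide by the root mean square; only a gain is learned (no mean, no bias)."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [g * v / rms for v, g in zip(x, gain)]

def post_ln_block(x, sublayer, norm):
    # Post-LN: add the residual first, then normalize the sum.
    return norm([a + b for a, b in zip(x, sublayer(x))])

def pre_ln_block(x, sublayer, norm):
    # Pre-LN: normalize the sub-layer input; the residual path stays unnormalized.
    return [a + b for a, b in zip(x, sublayer(norm(x)))]
```

In the pre-LN form the input `x` passes to the output unchanged through the residual connection, which is the property usually credited for more stable training of deep stacks.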

Attention mechanisms have also evolved to address the quadratic complexity of full self-attention, especially for long sequences. Techniques like sliding window or local attention restrict each token’s attention to a neighborhood of nearby tokens, reducing computational load while maintaining performance. Some models interleave local and global attention layers to balance efficiency and context capture. Another optimization shares the key and value projections across attention heads while keeping separate query projections, reducing memory usage without significantly impacting performance. Multi-query attention (MQA) shares a single set of keys and values across all query heads, while grouped-query attention (GQA) shares one set per group of query heads, trading off speed and resource consumption against quality.
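A minimal sketch of both ideas. The mask below uses a symmetric window, which is an assumption (decoder models would additionally block future positions), and the cache-size helper assumes that the memory saving shows up mainly in the keys and values cached during generation. All function names are hypothetical.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when token i may attend to token j (|i - j| <= window)."""
    return [[abs(i - j) <= window for j in range(seq_len)] for i in range(seq_len)]

def kv_head_for_query_head(q_head, n_q_heads, n_kv_heads):
    """Index of the shared key/value head used by a given query head.

    n_kv_heads == n_q_heads is standard multi-head attention,
    n_kv_heads == 1 is MQA, anything in between is GQA.
    """
    group_size = n_q_heads // n_kv_heads
    return q_head // group_size

def kv_cache_entries(seq_len, n_kv_heads, head_dim, n_layers):
    """Number of cached values: keys and values, per layer, per KV head, per token."""
    return 2 * seq_len * n_kv_heads * head_dim * n_layers

mask = sliding_window_mask(seq_len=6, window=1)
allowed_pairs = sum(sum(row) for row in mask)  # grows linearly with seq_len for a fixed window
gqa_mapping = [kv_head_for_query_head(h, 8, 2) for h in range(8)]
```

With 8 query heads and 2 key/value heads, query heads 0 to 3 read from key/value head 0 and heads 4 to 7 read from head 1, so the cache is a quarter of the size it would be with 8 key/value heads.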

The latter part of the lecture shifts focus to transformer-based model families, particularly encoder-only models like BERT. BERT uses a bidirectional encoder architecture without a decoder, enabling each token to attend to all others, which is beneficial for classification tasks. It introduces special tokens such as [CLS] for classification and [SEP] for sentence separation, and is pre-trained with masked language modeling (MLM) and next sentence prediction (NSP) objectives before being fine-tuned on downstream tasks. While BERT excels at producing contextual embeddings for various NLP tasks, it has limitations such as a fixed context length and relatively high latency. Subsequent models build on it: DistilBERT uses distillation to reduce model size, and RoBERTa removes NSP to simplify training. Overall, the lecture provides a comprehensive overview of transformer-based models, their architectural innovations, and practical considerations in modern NLP.
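The input format and the MLM objective can be sketched as follows. The 15% selection rate and the 80/10/10 replacement split come from the BERT paper rather than from this summary; the tokens are plain strings here, and the function names and the toy vocabulary are hypothetical.

```python
import random

def build_pair_input(tokens_a, tokens_b):
    """Wrap two sentences as [CLS] A [SEP] B [SEP] with segment ids 0 and 1."""
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"] + tokens_b + ["[SEP]"]
    segment_ids = [0] * (len(tokens_a) + 2) + [1] * (len(tokens_b) + 1)
    return tokens, segment_ids

def mask_for_mlm(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (corrupted tokens, labels); labels are None where nothing is predicted."""
    rng = rng or random.Random(0)
    corrupted, labels = [], []
    for tok in tokens:
        if tok in ("[CLS]", "[SEP]") or rng.random() >= mask_prob:
            corrupted.append(tok)
            labels.append(None)
            continue
        labels.append(tok)  # the model must recover the original token here
        r = rng.random()
        if r < 0.8:
            corrupted.append("[MASK]")         # 80%: replace with the mask token
        elif r < 0.9:
            corrupted.append(rng.choice(vocab))  # 10%: replace with a random token
        else:
            corrupted.append(tok)              # 10%: keep the token unchanged
    return corrupted, labels

tokens, segment_ids = build_pair_input(["the", "cat", "sat"], ["it", "purred"])
corrupted, labels = mask_for_mlm(tokens, vocab=["dog", "ran", "blue"])
```

For NSP, the final hidden state at the [CLS] position would feed a binary classifier that predicts whether the second sentence actually follows the first.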