The lecture covers advanced tuning techniques for large language models, moving from pre-training and supervised fine-tuning to preference tuning methods such as Reinforcement Learning from Human Feedback (RLHF) and Direct Preference Optimization (DPO), which align models with human preferences using comparative feedback. It highlights the trade-offs between complex, high-performance approaches like RLHF and simpler, more efficient methods like DPO, emphasizing their respective benefits and challenges in practical deployment.

The lecture begins with a recap of previous content on training large language models (LLMs), focusing on two main stages: pre-training and supervised fine-tuning (SFT). Pre-training involves teaching the model the structure of language and code using vast amounts of data, which is computationally intensive and requires techniques like data and model parallelism. After pre-training, the model can predict the next token but is not yet aligned with specific tasks or behaviors. The second stage, SFT, fine-tunes the model on smaller, high-quality datasets to make it behave according to desired use cases, such as becoming a helpful assistant. Techniques like LoRA (Low-Rank Adaptation) are introduced to efficiently fine-tune models by adjusting fewer parameters.
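
To make the "fewer parameters" point concrete, here is a minimal arithmetic sketch. It assumes the standard LoRA formulation, in which a frozen weight matrix of shape `d_out x d_in` receives a trainable low-rank update `B @ A`, with `B` of shape `d_out x rank` and `A` of shape `rank x d_in`. The layer size and rank are illustrative values, not figures from the lecture.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full, lora) trainable parameter counts for one weight matrix."""
    full = d_out * d_in            # every entry of W is trainable
    lora = rank * (d_out + d_in)   # only B (d_out x rank) and A (rank x d_in)
    return full, lora


# Illustrative values (assumptions): a 4096 x 4096 projection, rank 8.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank 8):    {lora:,} trainable parameters")
print(f"reduction factor: {full // lora}x")
```

Under these assumptions the adapter trains a small fraction of the parameters of the full matrix, which is where the memory and compute savings come from.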

The lecture then introduces the concept of preference tuning, a third stage aimed at aligning the model with human preferences beyond what SFT achieves. Preference tuning uses pairs of model outputs—one preferred and one less preferred—to teach the model which responses are better. This approach is easier and more practical than creating high-quality SFT datasets for every possible behavior, as it focuses on relative preferences rather than exact outputs. The data for preference tuning can be collected by generating multiple responses to the same prompt and having humans or automated systems rank them. This method allows injecting negative signals, teaching the model not only what to produce but also what to avoid.
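
The data collection step described above can be sketched as follows. This is a minimal illustration, assuming a human or automated ranker has already ordered the responses from best to worst; the function name and the `prompt`/`chosen`/`rejected` field names are hypothetical choices for this example, not something the lecture prescribes.

```python
from itertools import combinations


def pairs_from_ranking(prompt: str, ranked_responses: list[str]) -> list[dict]:
    """Turn a best-first ranking into (chosen, rejected) preference pairs."""
    # combinations() preserves input order, so the first element of each
    # pair is always ranked higher than the second.
    return [
        {"prompt": prompt, "chosen": better, "rejected": worse}
        for better, worse in combinations(ranked_responses, 2)
    ]


# Hypothetical ranking of three sampled responses, best first.
pairs = pairs_from_ranking(
    "How do I reset my password?",
    ["Step-by-step answer", "Vague answer", "Off-topic answer"],
)
for pair in pairs:
    print(pair["chosen"], ">", pair["rejected"])
```

Each `rejected` entry is the negative signal mentioned above: the model sees not only what to produce but also a concrete response it should rank lower.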

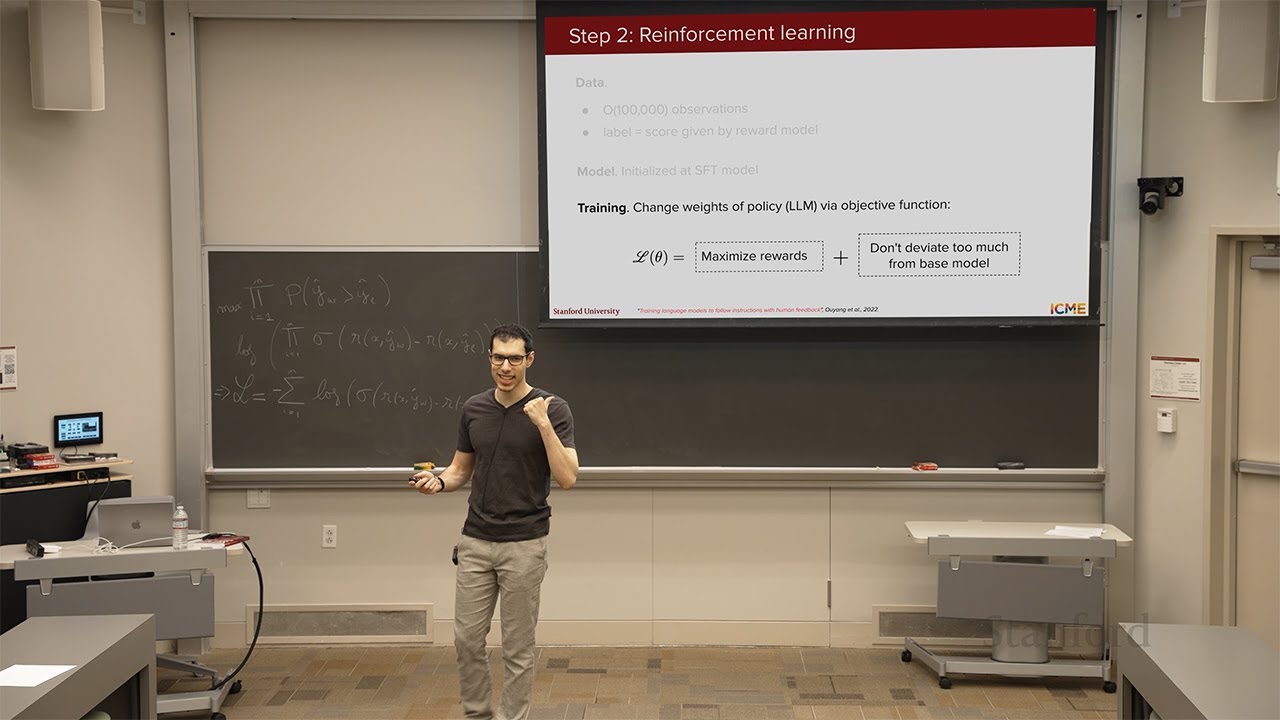

To implement preference tuning, the lecture discusses Reinforcement Learning from Human Feedback (RLHF), which frames the LLM as an agent in a reinforcement learning (RL) environment. The model generates outputs (actions) based on inputs (states) and receives rewards based on how well the outputs align with human preferences. RLHF involves two main steps: training a reward model to distinguish good from bad outputs using preference pairs, and then using this reward model to fine-tune the LLM via RL algorithms like Proximal Policy Optimization (PPO). PPO balances maximizing rewards with keeping the updated model close to the original to avoid issues like catastrophic forgetting and reward hacking.
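
The two RLHF steps can be illustrated with scalar arithmetic. This sketch makes two assumptions that the summary does not state explicitly: the reward model is trained with a pairwise logistic (Bradley-Terry style) loss on preference pairs, and the "stay close to the original" constraint is expressed as a KL-style penalty subtracted from the reward. Real PPO also involves a clipped policy objective and a value function, which are omitted here. The function names and the `beta` value are hypothetical.

```python
import math


def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    margin = r_chosen - r_rejected
    # softplus(-margin) == -log(sigmoid(margin))
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def kl_penalized_reward(
    reward: float, logp_policy: float, logp_ref: float, beta: float = 0.1
) -> float:
    """Reward minus a penalty for drifting away from the reference model."""
    return reward - beta * (logp_policy - logp_ref)


# The loss is small when the reward model already ranks the pair correctly...
print(pairwise_reward_loss(r_chosen=2.0, r_rejected=0.0))
# ...and large when it ranks the pair the wrong way round.
print(pairwise_reward_loss(r_chosen=0.0, r_rejected=2.0))
# A policy that assigns more log-probability than the reference pays a penalty.
print(kl_penalized_reward(reward=1.0, logp_policy=-1.0, logp_ref=-2.0, beta=0.5))
```

The penalty term is what discourages reward hacking in this formulation: an output that scores well with the reward model but is very unlikely under the original model has its effective reward reduced.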

The lecture also covers challenges with RLHF, including the complexity of managing multiple models (the policy, the reward model, the value function, and a frozen copy of the base model used as a reference), tuning numerous hyperparameters, and ensuring training stability. An alternative to RLHF is the “best of n” (BoN) approach, where multiple completions are generated, scored by the reward model, and the best one is selected at inference time. While simpler, BoN multiplies inference cost and latency by roughly the number of completions sampled, making it less practical for high-traffic applications. The lecture emphasizes the trade-offs between these methods in terms of computational cost, training complexity, and performance.
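
The BoN procedure is short enough to sketch directly. In this minimal example, `generate` and `score` are hypothetical callables standing in for the language model and the reward model; the toy versions at the bottom exist only so the snippet runs on its own.

```python
from typing import Callable


def best_of_n(
    prompt: str,
    generate: Callable[[str], str],
    score: Callable[[str, str], float],
    n: int = 4,
) -> str:
    """Sample n completions and return the one the reward model scores highest."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [generate(prompt) for _ in range(n)]  # n model calls per request
    return max(candidates, key=lambda completion: score(prompt, completion))


# Toy stand-ins (hypothetical): a canned "model" and a length-based "reward".
canned = iter(["ok", "a longer answer", "mid answer", "hi"])
best = best_of_n(
    "Explain BoN.",
    generate=lambda _prompt: next(canned),
    score=lambda _prompt, completion: float(len(completion)),
    n=4,
)
print(best)
```

The list comprehension makes the cost visible: every request triggers `n` generations plus `n` reward-model calls, which is the inference overhead the lecture warns about.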

Finally, the lecture introduces Direct Preference Optimization (DPO), a supervised learning approach that directly optimizes the model using preference pairs without requiring a separate reward model or RL training. DPO simplifies the preference tuning process by optimizing a single loss function derived from the Bradley-Terry model, which compares the probabilities of preferred versus less preferred outputs. While DPO is easier to implement and requires fewer models, it may not achieve the same performance level as RLHF. The choice between RLHF and DPO depends on factors like compute resources, desired performance, and training complexity, with DPO being a practical option for quicker preference tuning and RLHF offering higher performance for expert practitioners.
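
The DPO loss for a single preference pair can be sketched as below. It follows the commonly published form of the objective, in which a frozen reference model (typically the SFT checkpoint) supplies baseline log-probabilities; the argument names and the default `beta` are assumptions for illustration. Inputs are summed token log-probabilities of each full response.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """-log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    chosen_log_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_log_ratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_log_ratio - rejected_log_ratio)
    # Stable softplus(-logits), which equals -log(sigmoid(logits)).
    return max(-logits, 0.0) + math.log1p(math.exp(-abs(logits)))


# Policy identical to the reference: no preference learned yet.
print(dpo_loss(-5.0, -6.0, -5.0, -6.0))
# Policy has shifted probability toward the chosen response: lower loss.
print(dpo_loss(-4.0, -7.0, -5.0, -6.0))
```

No reward model or sampling loop appears anywhere in this computation, which is the simplification DPO offers: the preference pairs and two sets of log-probabilities are enough to compute a gradient.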