Lecture 7 of Stanford’s CME 295 course covers two ways to extend LLMs beyond their static training data: Retrieval-Augmented Generation (RAG), which supplies relevant, up-to-date information at inference time, and tool calling, which gives the model access to external functionality. It then turns to agentic LLMs, which autonomously plan and execute multi-step tasks through iterative tool use, with attention to scalability, safety, and practical deployment.

Lecture 7 of Stanford’s CME 295 course on Transformers and Large Language Models (LLMs) focuses on enabling LLMs to interact with external systems and to access information that is more recent than their training data. It opens with a recap of the previous lecture on reasoning models and reinforcement learning techniques such as GRPO, which improve reasoning by having the model generate an intermediate reasoning chain before its final answer. A key limitation remains, however: an LLM’s knowledge stops at its training data cutoff, so it cannot inherently access real-time or evolving information. This limitation motivates methods like Retrieval-Augmented Generation (RAG).

RAG is introduced as a practical approach to augment LLM prompts with relevant external information retrieved from a knowledge base. Instead of overwhelming the model with all new data, RAG selectively retrieves and incorporates only pertinent document chunks into the prompt. The process involves building a knowledge base by chunking documents, embedding these chunks into vector representations, and then performing a two-stage retrieval: candidate retrieval using semantic similarity (often cosine similarity on embeddings) and optional reranking using cross-encoders that jointly consider the query and document. The lecture also covers evaluation metrics such as NDCG, reciprocal rank, precision, and recall to assess retrieval quality.
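The pipeline described above can be sketched in a few lines of Python. This is a minimal illustration, not the lecture's implementation: `embed` is a toy bag-of-words stand-in for a learned embedding model, `overlap_score` is a placeholder for a cross-encoder, and all function names are hypothetical.

```python
import math
from collections import Counter


def chunk_document(text, size=8, overlap=2):
    """Split a document into overlapping windows of `size` words."""
    words = text.split()
    step = size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def embed(text):
    """ASSUMPTION: toy embedding (word counts) in place of a real embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve_candidates(query, chunks, k=3):
    """Stage 1: rank chunks by similarity between query and chunk embeddings."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def overlap_score(query, chunk):
    """ASSUMPTION: placeholder for a cross-encoder that scores (query, chunk) jointly."""
    q = set(query.lower().split())
    return len(q & set(chunk.lower().split())) / len(q) if q else 0.0


def rerank(query, candidates, score_pair=overlap_score):
    """Stage 2 (optional): reorder candidates with a joint query-chunk scorer."""
    return sorted(candidates, key=lambda c: score_pair(query, c), reverse=True)


def build_prompt(query, context_chunks):
    """Augment the prompt with only the retrieved chunks."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    knowledge_base = [
        "teddy bears are soft toys",
        "the transformer uses attention",
        "bears live in forests",
    ]
    query = "soft teddy bears"
    top = rerank(query, retrieve_candidates(query, knowledge_base, k=2))
    print(build_prompt(query, top))
```

In a real system the embeddings would typically be precomputed and stored in a vector index, so that only the query needs to be embedded at request time.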

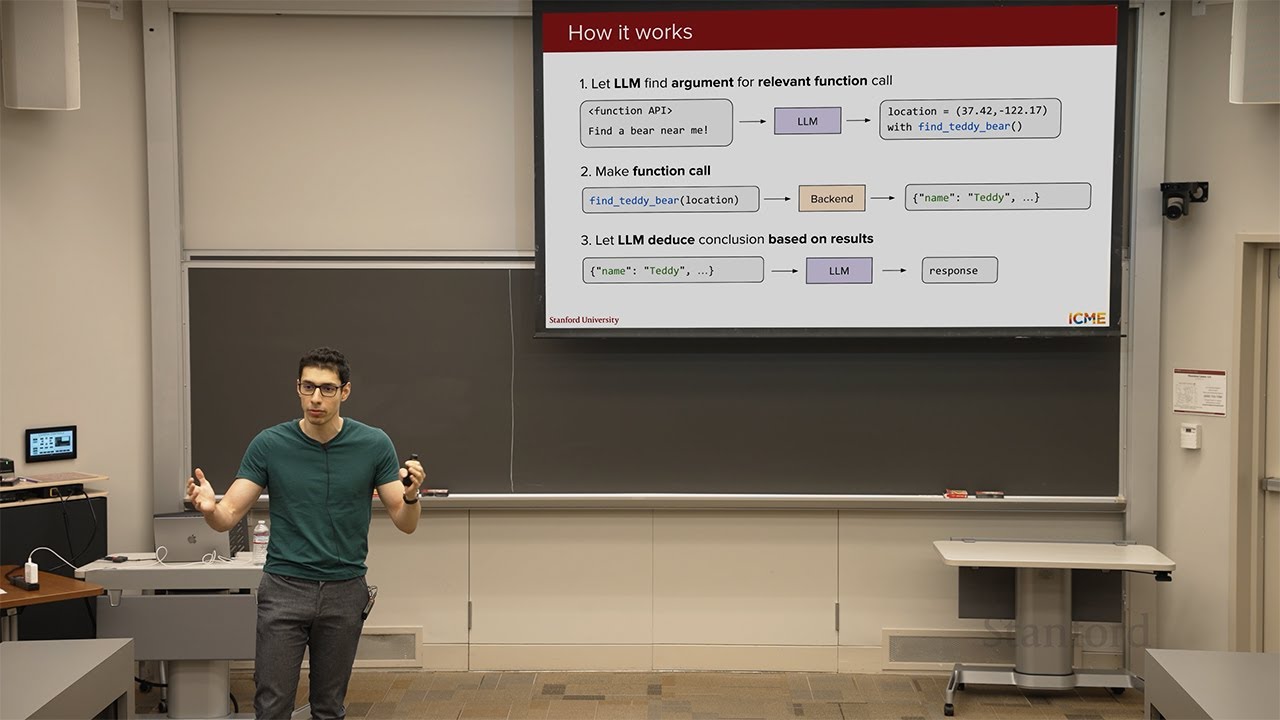
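The retrieval metrics named above can be written down compactly. The sketch below assumes a ranked list of document IDs and a set of relevant IDs; the function names and example IDs are illustrative. For NDCG it uses the linear-gain form (gain divided by log2 of rank + 1), which matches the exponential-gain variant when relevance is binary.

```python
import math


def precision_at_k(ranked, relevant, k):
    """Fraction of the top-k results that are relevant."""
    return sum(1 for d in ranked[:k] if d in relevant) / k


def recall_at_k(ranked, relevant, k):
    """Fraction of all relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for d in ranked[:k] if d in relevant) / len(relevant)


def reciprocal_rank(ranked, relevant):
    """1 / rank of the first relevant result, or 0 if none is retrieved."""
    for rank, d in enumerate(ranked, start=1):
        if d in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked, gains, k):
    """Discounted cumulative gain of the ranking, normalized by the ideal ordering."""
    dcg = sum(gains.get(d, 0.0) / math.log2(rank + 1)
              for rank, d in enumerate(ranked[:k], start=1))
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(rank + 1) for rank, g in enumerate(ideal, start=1))
    return dcg / idcg if idcg else 0.0


if __name__ == "__main__":
    ranked = ["d3", "d1", "d7", "d2"]   # system output, best first
    relevant = {"d1", "d2"}             # ground-truth relevant documents
    gains = {d: 1.0 for d in relevant}  # binary relevance grades
    print(precision_at_k(ranked, relevant, 2))
    print(recall_at_k(ranked, relevant, 2))
    print(reciprocal_rank(ranked, relevant))
    print(round(ndcg_at_k(ranked, gains, 4), 3))
```

Averaging `reciprocal_rank` over a set of queries gives the mean reciprocal rank (MRR) commonly reported for retrieval systems.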

The discussion then transitions to tool calling, where an LLM invokes structured external functions or APIs to perform tasks beyond text generation. Rather than answering from its parameters alone, the model generates a function call with appropriate arguments; for example, it can query a location-based API to find nearby teddy bears. The tool’s output is then returned to the model, which turns it into a natural language response. Training LLMs to use tools typically involves supervised fine-tuning on examples of both steps: mapping user queries to function calls, and mapping tool outputs back to natural language responses. Alternatively, prompt engineering and few-shot examples can teach a model to use tools without retraining, leveraging the model’s strong code understanding capabilities.
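A minimal sketch of the tool-calling round trip follows. Everything in it is hypothetical: `find_teddy_bears` returns canned data instead of calling a real API, `fake_model` stands in for the LLM that would generate the call, and the JSON shape (`name` plus `arguments`) is one common convention rather than a fixed standard.

```python
import json


def find_teddy_bears(location, radius_km=5.0):
    """Hypothetical tool: return teddy bears within radius_km of location (canned data)."""
    catalog = [
        {"name": "Classic Brown Bear", "location": "Stanford", "distance_km": 1.2},
        {"name": "Giant Plush Bear", "location": "Stanford", "distance_km": 4.0},
        {"name": "Mini Bear", "location": "Berkeley", "distance_km": 0.5},
    ]
    return [b for b in catalog
            if b["location"] == location and b["distance_km"] <= radius_km]


# Registry of callable tools, keyed by the name the model is allowed to emit.
TOOLS = {"find_teddy_bears": find_teddy_bears}


def fake_model(user_query):
    """ASSUMPTION: stand-in for the LLM; emits a function call as a JSON string."""
    return json.dumps({
        "name": "find_teddy_bears",
        "arguments": {"location": "Stanford", "radius_km": 2.0},
    })


def execute_tool_call(raw_call):
    """Parse the model's call, look up the tool, and run it with the given arguments."""
    call = json.loads(raw_call)
    tool = TOOLS.get(call["name"])
    if tool is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return tool(**call["arguments"])


def render_answer(results):
    """Stand-in for the second model pass that turns tool output into prose."""
    if not results:
        return "I could not find any teddy bears nearby."
    names = ", ".join(r["name"] for r in results)
    return f"I found these teddy bears nearby: {names}."


if __name__ == "__main__":
    raw = fake_model("Where can I find a teddy bear near Stanford?")
    print(render_answer(execute_tool_call(raw)))
```

Looking the tool up in an explicit registry, rather than evaluating model output directly, keeps the model restricted to functions the developer has chosen to expose.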

To manage scalability and complexity when many tools are available, the lecture introduces tool selection or routing, where an LLM first selects a subset of relevant tools based on the user query before invoking them. This approach helps mitigate context window limitations and reduces confusion from irrelevant tools. The lecture also highlights efforts to standardize tool interfaces through protocols like the Model Context Protocol (MCP), which defines a common vocabulary and structure for exposing tools to LLMs, facilitating interoperability and reuse across different systems.
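The routing step can be sketched as a filter that runs before the main model call. In the sketch below, the tool descriptors use `name`, `description`, and `inputSchema` fields, which is my understanding of how MCP describes tools; treat that layout, the example tools, and the keyword-overlap selector (a stand-in for the LLM-based selection the lecture describes) as assumptions.

```python
# Hypothetical tool descriptors; field names follow the MCP-style layout assumed above.
TOOL_REGISTRY = [
    {
        "name": "find_teddy_bears",
        "description": "find teddy bears for sale near a location",
        "inputSchema": {"type": "object",
                        "properties": {"location": {"type": "string"}},
                        "required": ["location"]},
    },
    {
        "name": "get_room_temperature",
        "description": "read the current room temperature from a sensor",
        "inputSchema": {"type": "object", "properties": {}},
    },
    {
        "name": "send_email",
        "description": "send an email message to a recipient",
        "inputSchema": {"type": "object",
                        "properties": {"to": {"type": "string"},
                                       "body": {"type": "string"}},
                        "required": ["to", "body"]},
    },
]


def relevance(query, tool):
    """ASSUMPTION: word overlap as a cheap proxy for an LLM's relevance judgment."""
    query_words = set(query.lower().split())
    return len(query_words & set(tool["description"].lower().split()))


def select_tools(query, registry, k=1):
    """Return the k tools whose descriptions best match the query."""
    return sorted(registry, key=lambda t: relevance(query, t), reverse=True)[:k]


def tools_prompt(tools):
    """Render only the selected tools into the context given to the main model."""
    return "\n".join(f"{t['name']}: {t['description']}" for t in tools)


if __name__ == "__main__":
    chosen = select_tools("where can I find teddy bears near me", TOOL_REGISTRY, k=1)
    print(tools_prompt(chosen))
```

Only the selected descriptors are placed in the prompt, so the context cost grows with `k` rather than with the size of the full registry.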

Finally, the lecture explores agentic LLMs, which autonomously pursue goals by iteratively reasoning, planning, and acting through multiple tool calls in a loop. Agents decompose complex tasks into substeps, observe the outcome of each action, and adjust their plans accordingly. This enables more sophisticated workflows, such as adjusting room temperature based on sensor data. The lecture also discusses multi-agent systems and communication protocols, safety concerns such as data exfiltration, and mitigation strategies applied at both training and inference time. The session concludes with practical advice to start small when building tool-enabled agents. It also highlights the growing importance of agents in applications like AI-assisted coding, where human oversight is still needed to evaluate generated outputs.
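The observe-plan-act loop can be illustrated with the room temperature example. This is a toy sketch under stated assumptions: `Room` simulates the sensor and the heating and cooling devices, `policy` is a hand-written rule standing in for the LLM's reasoning step, and the tool names are hypothetical. The `max_steps` cap is one simple inference-time safeguard against a loop that never terminates.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """Simulated environment: a room with a temperature in degrees Celsius."""
    temperature: float


def read_temperature(room):
    """Tool: observe the current temperature."""
    return room.temperature


def heat(room, degrees):
    """Tool: raise the temperature."""
    room.temperature += degrees


def cool(room, degrees):
    """Tool: lower the temperature."""
    room.temperature -= degrees


TOOLS = {"read_temperature": read_temperature, "heat": heat, "cool": cool}


def policy(observation, target, tolerance=0.5):
    """ASSUMPTION: rule-based stand-in for the LLM choosing the next action."""
    if abs(observation - target) < tolerance:
        return {"tool": "finish", "args": {}}
    if observation < target:
        return {"tool": "heat", "args": {"degrees": 1.0}}
    return {"tool": "cool", "args": {"degrees": 1.0}}


def run_agent(room, target, max_steps=10):
    """Loop: observe, decide, act; stop at the goal or after max_steps."""
    trace = []
    for _ in range(max_steps):
        observation = TOOLS["read_temperature"](room)
        action = policy(observation, target)
        trace.append((observation, action["tool"]))
        if action["tool"] == "finish":
            break
        TOOLS[action["tool"]](room, **action["args"])
    return trace


if __name__ == "__main__":
    room = Room(temperature=18.0)
    for observation, tool in run_agent(room, target=21.0):
        print(f"observed {observation:.1f} C -> {tool}")
```

Each iteration feeds a fresh observation back into the decision step, which is what distinguishes the agent loop from a single one-shot tool call.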