The speaker, an experienced AI researcher, reflects on how current AI technologies amplify longstanding societal issues like job displacement, misinformation, and loss of privacy, emphasizing that these challenges require collective, socially engaged responses rather than purely technical solutions. He argues that instead of focusing on extreme narratives or seeking to “solve” AI, society should prioritize ongoing, collaborative efforts to guide AI’s impact in ways that support human connection, meaning, and well-being.

The speaker, a veteran in the field of artificial intelligence (AI), opens with reflections on his long career, beginning at the MIT AI Lab and later at Stanford, and acknowledges the rapid evolution of AI technology. He admits that while the technical underpinnings of modern AI are beyond his expertise, the social and ethical questions remain strikingly similar to those faced in earlier eras. Rather than focusing on apocalyptic or utopian visions of AI, he emphasizes the importance of examining how AI is affecting society today, particularly in terms of its integration into daily life and its impact on longstanding social issues.

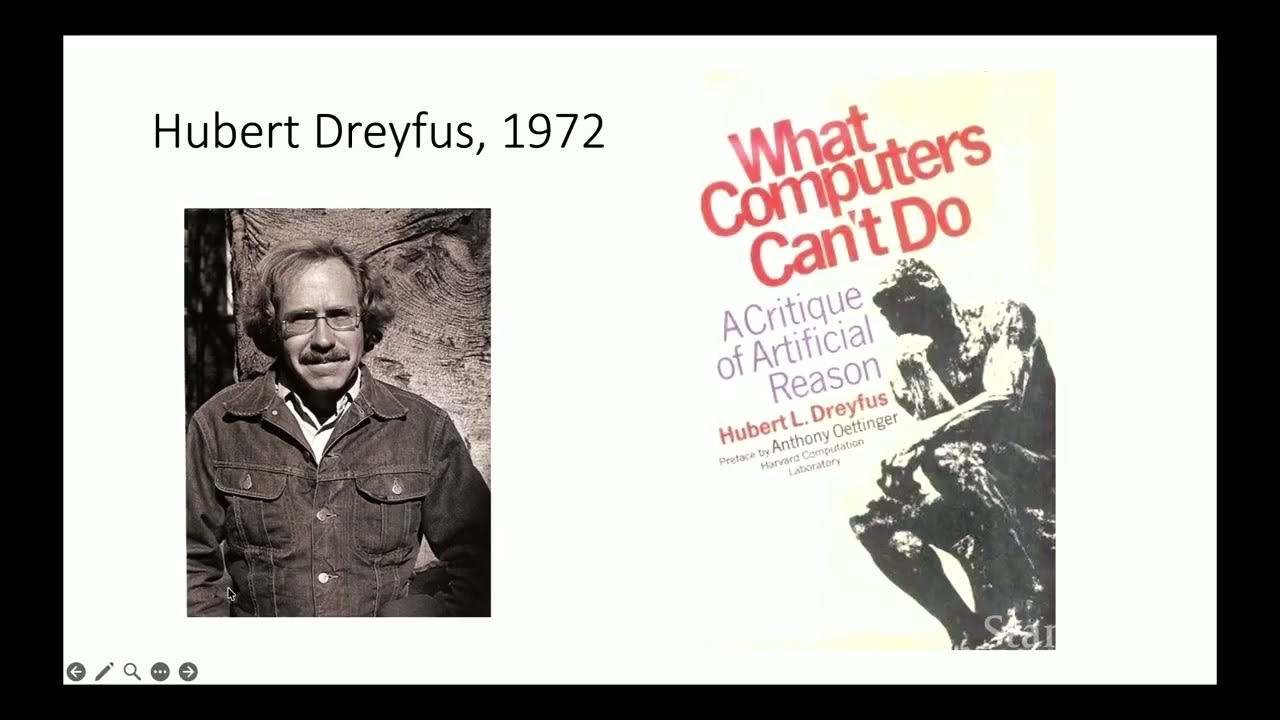

He draws historical parallels, noting that many concerns about AI, such as job displacement, misinformation, and loss of privacy, are not new but are being accelerated by current technologies. For example, he compares the optimism around early automobiles to today’s AI hype, highlighting how unforeseen negative consequences, such as pollution from cars, were initially ignored. Similarly, he points out that AI is intensifying existing problems rather than creating entirely new ones, citing resource consumption by data centers and the erosion of trust due to deepfakes and misinformation.

The talk delves into the societal impacts of AI, particularly on employment and human interaction. The speaker cites examples of AI replacing entry-level jobs, including in software engineering, and references experts such as Geoffrey Hinton, who warn that AI may increase unemployment and economic inequality. He also discusses how AI tools are changing the way people interact, from students using ChatGPT instead of collaborating with peers to the proliferation of chatbots that simulate human relationships. This shift, he argues, risks diminishing meaningful human connections and encourages a culture of optimization and efficiency at the expense of deeper values and relationships.

A significant portion of the lecture is devoted to the ethical and regulatory challenges posed by AI. The speaker critiques both the “doomer” and “booster” narratives, arguing that neither extreme is helpful. He reviews current regulatory efforts, such as California’s transparency requirements and the European Union’s AI Act, but notes their limitations in addressing the broader societal and cultural shifts driven by AI. He is skeptical of the idea that AI can be “aligned” with human values, pointing out that values are culturally and contextually defined, and that attempts to codify them risk oversimplification and exclusion.

In conclusion, the speaker advocates for a collective, patient, and socially engaged approach to steering AI’s development and integration. He stresses that technological solutions alone are insufficient; meaningful change requires shifts in social understanding, incentives, and collaboration. Drawing on metaphors of navigation and teamwork, he calls for society to focus on the underlying human capacity for care and responsibility, rather than relying solely on technical fixes or individual genius. Ultimately, he suggests that the challenge is not to “solve” AI, but to continually steer its trajectory in ways that preserve and enhance human meaning, connection, and well-being.