The video expresses deep concern over SUSE’s introduction of autonomous AI-powered Linux administration, warning that handing control of critical infrastructure to AI systems poses significant risks of errors and catastrophic failures. It critiques the broader industry trend, led by companies like Red Hat and Microsoft, of aggressively embedding AI into Linux and other technologies, a trend it sees as driven by corporate gain rather than genuine user demand, and it urges caution and resistance against unchecked AI adoption.

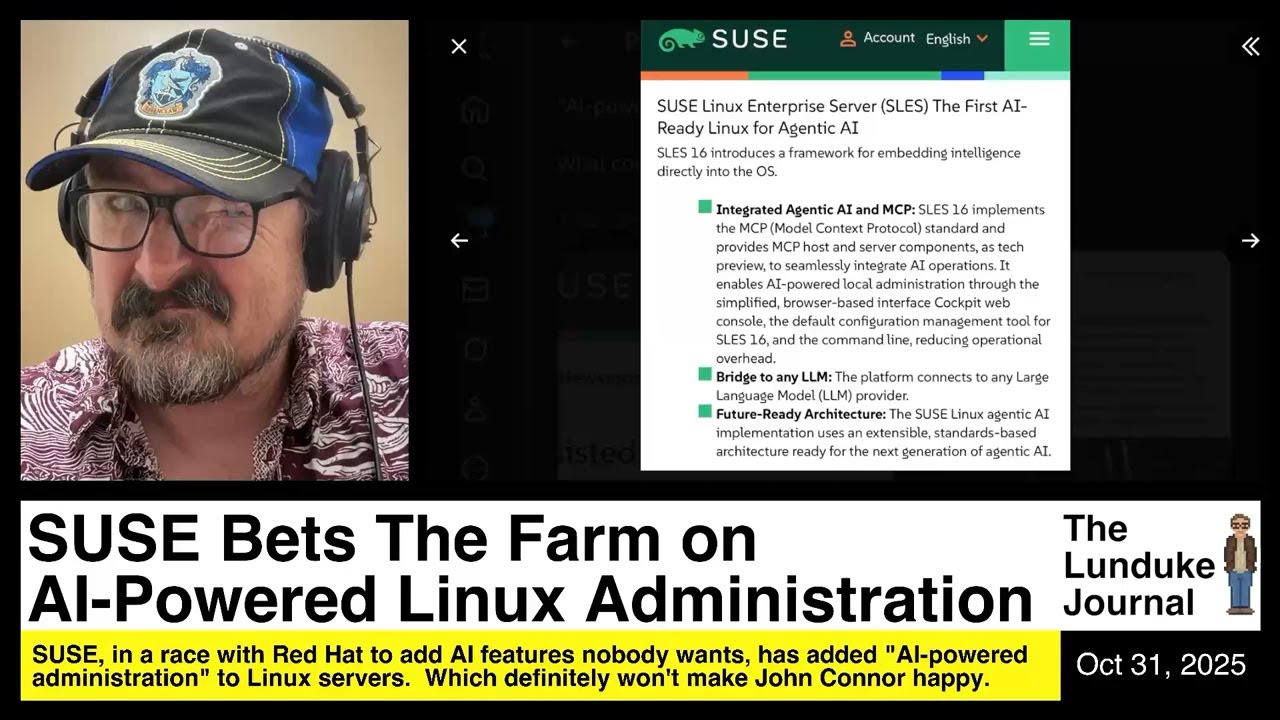

The video discusses the recent announcement by SUSE, the oldest Linux company, that it has integrated AI-powered Linux administration into SUSE Linux Enterprise Server. This new feature, called “Agentic AI administration,” allows an AI engine to autonomously perform administrative and management tasks on servers and networks without human intervention or requests. The speaker expresses deep concern and skepticism about this move, likening it to handing over control to a potentially dangerous AI system reminiscent of science fiction scenarios like Skynet or the Master Control Program from Tron. The speaker regards fully autonomous AI management of critical infrastructure as reckless and fraught with risk.

The speaker contextualizes SUSE’s decision within a broader industry trend, highlighting that Red Hat, a major competitor, has been aggressively pushing AI integration into their Linux offerings for nearly a year. Red Hat’s CEO, Matt Hicks, has publicly stated that “AI needs to be everywhere,” and the company has been acquiring AI firms and incentivizing employees financially to promote and embed AI features across their products. This push extends even to Fedora Linux, Red Hat’s community distribution, where AI-assisted contribution policies now allow AI tools to help write code. The speaker criticizes this as a corporate-driven initiative rather than a genuine community effort, emphasizing the pervasive and sometimes forced nature of AI adoption in Linux ecosystems.

The video raises serious concerns about the reliability and safety of AI systems managing critical infrastructure. As an illustrative example, the speaker cites a school district’s AI-powered camera system that mistakenly identified a child eating Doritos as holding a gun, leading to an unnecessary police intervention. The incident is used to show that AI errors can have severe consequences. The speaker worries that similar mistakes, or malicious actions, by AI agents acting as administrators could cause widespread outages, DNS failures, or other catastrophic disruptions in server and network operations if AI is given unchecked control.

The speaker also voices frustration with the broader tech industry’s enthusiasm for embedding AI into every possible product and service, including Microsoft’s integration of AI into everyday applications like MS Paint, Notepad, Word, and Excel. They argue that there is little demand from actual system administrators or DevOps professionals for AI to take over server management, suggesting that these AI initiatives are more about corporate marketing and hype than genuine user needs. The speaker calls for a pushback against what they see as an excessive and potentially dangerous overreliance on AI in critical technology infrastructure.

The video concludes with a thank-you to the subscribers of the Lunduke Journal, which supports independent tech journalism free from big tech influence. The speaker promotes a current subscription sale and encourages viewers to support independent reporting that aims to reveal the truth about technology trends, including the unchecked rise of AI in areas where it may not be appropriate. The overall tone is one of caution and skepticism, urging the tech community and users to carefully consider the implications of handing over control to AI systems without sufficient oversight.