The video outlines how the adoption of transformer-based models, originally developed for natural language processing, has revolutionized robotics by enabling integrated vision-language understanding and precise motor control, leading to significant advancements in robot capabilities. However, despite these breakthroughs, current robots still struggle with real-time learning, intent understanding, and generalization in dynamic environments, highlighting the need for continued research to achieve truly autonomous and adaptable machines.

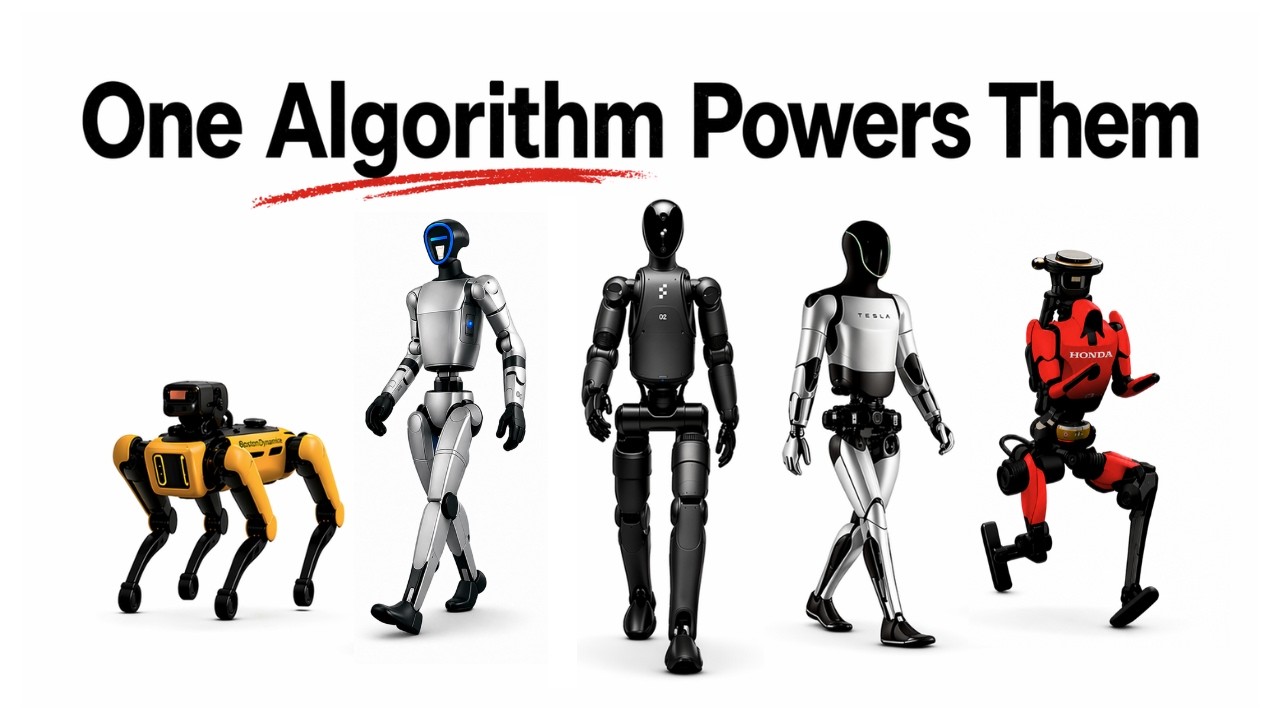

The video traces the evolution of robotics over the past few decades, highlighting the slow progress from early breakthroughs like Honda’s unassisted walking robot in 2000 and the autonomous vehicle challenges in 2004, to the limited capabilities of robot arms in controlled lab environments by 2012. For fifty years, robots lacked a true understanding of the world, operating only on raw sensor data without any meaningful model of objects or context. This gap persisted until the realization that the solution already existed in a different domain: natural language processing (NLP).

The breakthrough came in 2017, when Google researchers introduced the transformer architecture. Originally designed for language translation, transformers were soon trained to predict the next token in a sequence, which lets them learn complex relationships and meanings from vast amounts of text. When scaled to internet-sized datasets, these models developed a broad understanding of language and the world, something robotics had been missing. The next step was to extend this approach to vision: an image is broken into patches, each patch is encoded as a token, and attention mechanisms let the model relate visual and linguistic information in the same sequence.
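The patch-and-attend idea described above can be sketched in a few lines. This is a minimal illustration, not the method of any particular model: the names `patchify` and `self_attention` are hypothetical, the image is a tiny 4x4 grayscale grid, and the attention step uses the raw patch vectors as queries, keys, and values, whereas real models apply learned projections and add position information.

```python
import math


def patchify(image, patch):
    """Split an H x W grayscale image (a list of rows) into flattened patch vectors."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([
                image[r][c]
                for r in range(top, top + patch)
                for c in range(left, left + patch)
            ])
    return tokens


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention.

    Simplifying assumption: queries, keys, and values are the tokens
    themselves (no learned projection matrices).
    """
    dim = len(tokens[0])
    mixed = []
    for query in tokens:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output token is a weighted average of all value vectors.
        mixed.append([
            sum(w * value[i] for w, value in zip(weights, tokens))
            for i in range(dim)
        ])
    return mixed


# A toy 4x4 "image" whose pixel values are 0.0 .. 15.0.
image = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
tokens = patchify(image, 2)     # four 2x2 patches, each flattened to 4 numbers
mixed = self_attention(tokens)  # each patch now carries information from all patches
```

Because every output token is a weighted average over all patches, each position can draw on information from anywhere in the image. The same mechanism works unchanged when text tokens are appended to the sequence.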

This fusion of vision and language led to multimodal models like CLIP, which align images and their textual descriptions in a shared embedding space, so that an image and a caption describing it map to nearby vectors. This unified representation allows robots to process visual scenes and language instructions as a single sequence, which can then be transformed into action commands. The final stage converts discrete action tokens into continuous motor signals that control the robot’s movements, enabling tasks like picking, placing, and manipulating objects with precision.
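Two steps from this paragraph lend themselves to a short sketch: matching an image to a caption in a shared embedding space, and turning a discrete action token back into a continuous motor value. Everything specific here is an assumption made for illustration: the three-dimensional embeddings are invented by hand, the function names are hypothetical, and the 256 uniform bins and the [-1, 1] action range are example choices rather than values taken from the video.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_vec, caption_vecs):
    """Return the caption whose embedding is closest to the image embedding."""
    return max(
        caption_vecs,
        key=lambda text: cosine_similarity(image_vec, caption_vecs[text]),
    )


def detokenize_action(token, low, high, num_bins=256):
    """Map a discrete bin index to the center of its bin in [low, high].

    Assumes uniform binning; num_bins=256 is an illustrative default.
    """
    if not 0 <= token < num_bins:
        raise ValueError("token index out of range")
    width = (high - low) / num_bins
    return low + (token + 0.5) * width


# Hand-made embeddings standing in for the outputs of trained encoders.
image_vec = [0.9, 0.1, 0.0]
caption_vecs = {
    "a red mug": [1.0, 0.0, 0.0],
    "a blue plate": [0.0, 1.0, 0.0],
}
match = best_caption(image_vec, caption_vecs)

# Hypothetical action token decoded into a normalized joint command.
command = detokenize_action(128, low=-1.0, high=1.0)
```

With these toy inputs, `match` is `"a red mug"` and `command` is `0.00390625`, the center of bin 128. Decoding to bin centers keeps the worst-case quantization error at half a bin width, which is one reason finer binning gives smoother motion.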

Despite these advances, current models have significant limitations. They do not learn or adapt in real time; any improvement requires collecting new data and retraining, which can take weeks. Robots lack an internal model of intent, so when they fail, they often repeat mistakes without understanding why. Moreover, these models struggle with generalization: small changes in environment, lighting, or object placement can cause performance to collapse entirely, revealing that the robots have learned the appearance of success rather than the underlying structure of tasks.

In conclusion, while the integration of transformer-based vision-language-action models has propelled robotics forward in remarkable ways, the goal of creating robots that can seamlessly operate in everyday human environments remains elusive. The current technology is a significant step but still far from enabling robots that can autonomously adapt, understand intent, and reliably function in the unpredictable complexity of real homes. Solving these challenges will likely require years of further research and innovation.