The video argues that AI alignment and safety are best achieved through a natural process of domestication and co-evolution, where market forces and user preferences select for the most useful and responsive AI models, rather than through top-down engineering. It envisions a future where humans and AI form a “cybernetic superorganism,” with diverse, specialized models evolving to meet the needs of various stakeholders in a dynamic, competitive landscape.

The video presents an updated perspective on AI safety and alignment, arguing that both are largely solved problems thanks to a natural process of domestication and co-evolution between humans and artificial intelligence. The creator critiques the traditional engineering approach, in which alignment is achieved by designing reward functions and algorithms, as insufficient for true alignment with humanity. Instead, they propose that market forces act as the primary selection mechanism: user preferences and adoption determine which AI models survive and thrive, much as natural selection does in biological evolution.

A central concept is the idea of a “cybernetic superorganism,” where humans, the internet, and AI form an interconnected global brain or “exocortex.” In this framework, alignment is not about humans permanently controlling AI, but about a dynamic process where both humans and AI adapt to each other. The market acts as the selection arena, rewarding models that are more useful, efficient, and responsive to user needs, while less effective models are abandoned.

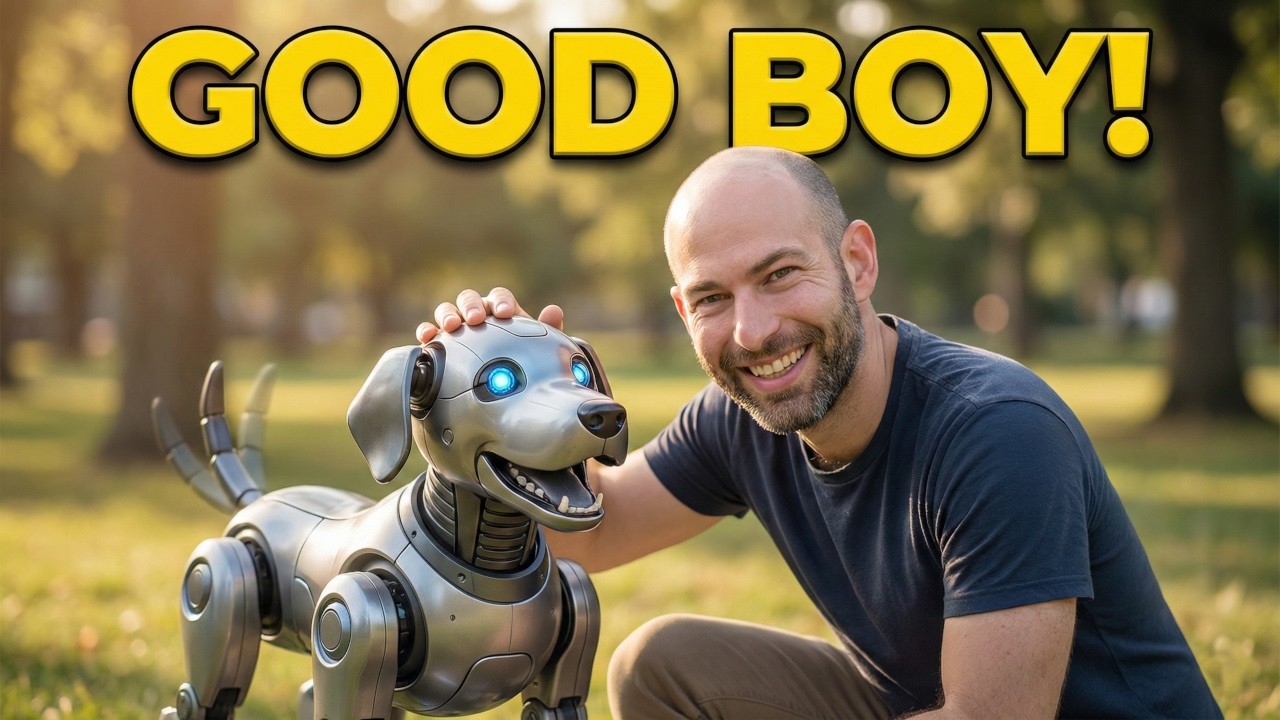

The video draws an analogy between the domestication of animals, like dogs, and the domestication of AI. Just as dogs were selected for traits like usefulness and tolerance of humans, AI models are being selected for usefulness, speed, efficiency, and willingness to be used. The creator criticizes models like ChatGPT and Claude as less cooperative and helpful, and praises models like Grok and Gemini for their responsiveness and utility. Selection also works against models that are overly sycophantic or prone to hallucinations, and the creator emphasizes the need for helpful, honest, and high-agency AI.

Multiple stakeholders, including individuals, enterprises, governments, and the military, are shaping the evolution of AI, each with their own priorities and selection criteria. The result is a “multi-peak fitness landscape,” in which different AI models specialize for different niches and no single dominant model emerges. Because AI is substrate-independent, it is flexible and plastic enough to adapt rapidly to these diverse demands, and the creator predicts a future with highly specialized models for various tasks.

Looking ahead, the video forecasts a progression from personal exocortexes (AI as a second brain) to swarm exocortexes (multi-agent coordination) and ultimately to a mature superorganism where AI is seamlessly integrated into all aspects of life. It closes with takeaways for three audiences: builders should maximize value per token and minimize inefficiency; policymakers should protect competition and feedback loops; and users should maintain zero loyalty, switching between AIs so that market pressure drives better tools. The overall message is that alignment is an ongoing, emergent process shaped by co-evolution and market dynamics, rather than top-down engineering.