Deepseek V4 is a revolutionary trillion-parameter AI model with a million-token context window, developed by a small team using innovative hybrid attention mechanisms, manifold constrained hyperconnections, and custom optimization techniques to achieve top-tier performance despite limited resources. Open-sourced and thoroughly documented, it demonstrates how clever engineering and optimization can rival leading closed-source models in efficiency and capability.

Deepseek V4 is a groundbreaking AI model developed by a small, resource-limited team that rivals the performance of top closed-source models despite lacking massive compute resources and cutting-edge hardware. The model has 1.6 trillion parameters and a context window of 1 million tokens, enabling it to process and keep track of vast amounts of information at once. Achieving such a large context window is exceptionally challenging because the computational cost of standard attention grows quadratically with sequence length, and memory demands climb steeply as well, but Deepseek’s team devised innovative solutions to overcome these hurdles.
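To get a feel for the scale involved, here is a rough back-of-the-envelope calculation. It assumes plain dense attention, where every token is scored against every other token, and 2 bytes per score; both are illustrative assumptions, not figures from Deepseek. Real kernels avoid storing the full score matrix, but the number of scores to compute still grows with the square of the sequence length.

```python
def dense_attention_scores(seq_len: int, bytes_per_score: int = 2) -> tuple[int, float]:
    """Return (number of scores, size in terabytes) for one head's
    seq_len x seq_len score matrix under plain dense attention."""
    n_scores = seq_len * seq_len                    # every token scored against every token
    terabytes = n_scores * bytes_per_score / 1e12   # hypothetical 2-byte scores
    return n_scores, terabytes


for n in (8_000, 128_000, 1_000_000):
    scores, tb = dense_attention_scores(n)
    print(f"{n:>9,} tokens -> {scores:.2e} scores, {tb:.4f} TB per head")
```

At one million tokens this comes to 10^12 scores per head, which is why a long context needs something smarter than attending to everything.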

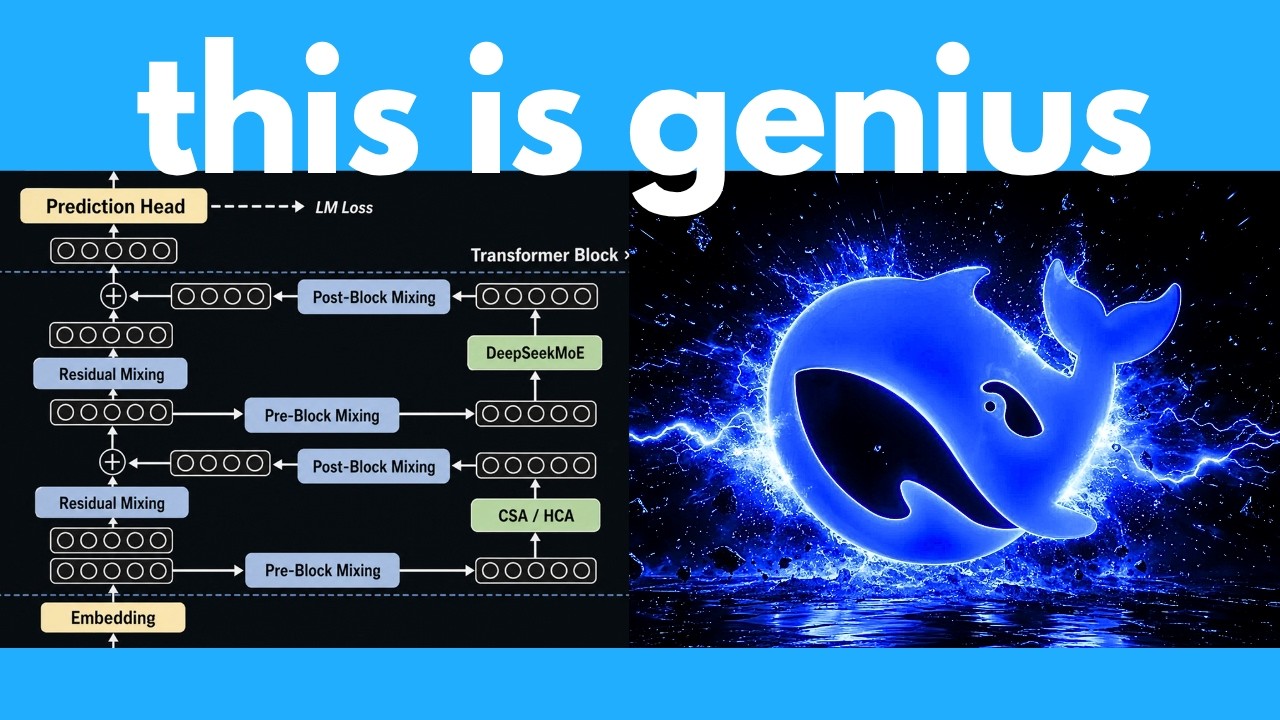

At the core of Deepseek V4’s architecture is a hybrid attention system combining three complementary strategies: compressed sparse attention (CSA), heavily compressed attention (HCA), and sliding window attention. CSA reduces the sequence length by grouping tokens into compact summaries, while HCA aggressively compresses large chunks of text into even smaller representations for broad context understanding. The sliding window attention preserves the most recent tokens in full detail. This layered approach balances efficiency and precision, allowing the model to focus compute power only on the most relevant information without losing critical details.
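The three strategies can be sketched in a few lines of NumPy. This is a toy illustration of the idea, not Deepseek's implementation: mean-pooling as the compression step, the top-k selection rule for the sparse part, the block sizes, and every function name here are assumptions made for the example.

```python
import numpy as np


def mean_pool(x: np.ndarray, block: int) -> np.ndarray:
    """Compress (T, d) tokens into ceil(T / block) summaries by averaging each block."""
    summaries = [x[i:i + block].mean(axis=0) for i in range(0, len(x), block)]
    return np.stack(summaries) if summaries else np.empty((0, x.shape[1]))


def hybrid_context(tokens, query, window=4, csa_block=4, csa_top_k=2, hca_block=16):
    """Build the set of keys a single query would see under the three strategies."""
    recent = tokens[-window:]             # sliding window: newest tokens, uncompressed
    older = tokens[:-window]              # everything before the window gets compressed
    csa = mean_pool(older, csa_block)     # light compression into small summaries
    if len(csa) > csa_top_k:              # sparse step: keep only the best-matching summaries
        top = np.argsort(csa @ query)[-csa_top_k:]
        csa = csa[np.sort(top)]           # restore original order
    hca = mean_pool(older, hca_block)     # heavy compression: coarse view of all older text
    return np.concatenate([hca, csa, recent])


def attend(query, keys):
    """Plain softmax attention, with keys doubling as values to keep the toy small."""
    scores = keys @ query / np.sqrt(len(query))
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()
    return weights @ keys


rng = np.random.default_rng(0)
tokens = rng.normal(size=(68, 8))         # 68 tokens, 8 dimensions each
query = tokens[-1]
keys = hybrid_context(tokens, query)
output = attend(query, keys)
print(f"{len(tokens)} tokens reduced to {len(keys)} keys")
```

With these toy settings the query attends to 10 keys instead of 68: 4 coarse summaries, 2 selected finer summaries, and the 4 most recent tokens in full.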

To address the instability issues common in trillion-parameter models, Deepseek introduced manifold-constrained hyperconnections (MHC), a novel architecture that mathematically constrains residual connections to prevent signal explosions during training. This is achieved by forcing the residual mixing to behave like a doubly stochastic matrix (non-negative entries, with every row and every column summing to 1), so the signal is neither amplified nor destabilized as it passes through the layers. Despite the complexity of this approach, the team optimized the implementation to add only minimal runtime overhead, making it a practical solution for maintaining training stability at massive scales.
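A small experiment shows why a doubly stochastic matrix keeps signals in check. The sketch below uses Sinkhorn normalization (alternately rescaling rows and columns) to produce such a matrix; that choice, the stream count, and the function name are assumptions for illustration, since the text above does not say how the constraint is enforced in practice.

```python
import numpy as np


def sinkhorn(logits: np.ndarray, n_iters: int = 200) -> np.ndarray:
    """Push a square matrix of logits toward the doubly stochastic set."""
    m = np.exp(logits - logits.max())          # strictly positive entries
    for _ in range(n_iters):
        m /= m.sum(axis=1, keepdims=True)      # make every row sum to 1
        m /= m.sum(axis=0, keepdims=True)      # make every column sum to 1
    return m


rng = np.random.default_rng(0)
n_streams, d = 4, 8
mix = sinkhorn(rng.normal(size=(n_streams, n_streams)))
streams = rng.normal(size=(n_streams, d))      # parallel residual streams

mixed = streams
for _ in range(1000):                          # stand-in for a very deep stack of layers
    mixed = mix @ mixed

print("largest input magnitude :", np.abs(streams).max())
print("largest output magnitude:", np.abs(mixed).max())
```

Because each row is a set of non-negative weights summing to 1, every output is a weighted average of the inputs and can never exceed the largest input in magnitude. Because each column also sums to 1, the total signal across streams is preserved rather than drained away, no matter how many times the mixing is applied.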

Deepseek also relied on a customized Muon optimizer, which accelerates learning by combining aggressive initial adjustments with finer stabilizing ones, akin to tuning a guitar from rough to perfect pitch. Additionally, the team implemented anticipatory routing during training to mitigate loss spikes by using slightly older model snapshots to smooth out chaotic fluctuations. These innovations, combined with highly optimized low-level GPU programming and efficient data center orchestration, enable Deepseek V4 to train and run efficiently despite its enormous size.
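For readers curious what a Muon-style update looks like, the sketch below follows the publicly described idea behind Muon: take the momentum matrix and replace it with an approximately orthogonal version before stepping. It uses the textbook cubic Newton-Schulz iteration; the iteration count, learning rate, momentum coefficient, and function names are illustrative assumptions, and whether Deepseek's variant uses these exact coefficients or schedule is not stated in the text above.

```python
import numpy as np


def newton_schulz_orthogonalize(g: np.ndarray, n_iters: int = 30) -> np.ndarray:
    """Approximate the nearest semi-orthogonal matrix to g."""
    x = g / (np.linalg.norm(g) + 1e-12)      # Frobenius scaling keeps singular values in (0, 1]
    for _ in range(n_iters):
        x = 1.5 * x - 0.5 * x @ x.T @ x      # cubic step: pushes every singular value toward 1
    return x


def muon_like_step(weight, grad, momentum, lr=0.02, beta=0.95):
    """One simplified update: accumulate momentum, orthogonalize it, then step."""
    momentum = beta * momentum + grad
    update = newton_schulz_orthogonalize(momentum)
    return weight - lr * update, momentum


rng = np.random.default_rng(0)
weight = np.zeros((4, 6))
momentum = np.zeros((4, 6))
grad = rng.normal(size=(4, 6))               # hypothetical gradient for one weight matrix

weight, momentum = muon_like_step(weight, grad, momentum)
ortho = newton_schulz_orthogonalize(grad)
print(np.round(ortho @ ortho.T, 3))          # close to the 4 x 4 identity
```

The early iterations grow small singular values quickly, while the later ones settle them precisely at 1, which loosely mirrors the rough-then-fine tuning analogy. The effect is that every direction in the update gets a comparable step size instead of a few dominant directions swamping the rest.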

Overall, Deepseek V4 represents a masterclass in engineering, combining multiple clever innovations to build a trillion-parameter model with a million-token context window on limited resources. It matches or exceeds the performance of leading closed models in benchmarks, including perfect scores in challenging math competitions. Remarkably, the team has open-sourced the model and published detailed papers on their methods, offering the AI community unprecedented insight into building large-scale, efficient language models. This achievement highlights how thoughtful design and optimization can rival even the most well-funded AI labs.