The video explains how traditional autoencoders compress and reconstruct images by encoding them into a low-dimensional latent space that clusters similar inputs but contains large empty regions, preventing the network from generating genuinely new images. It then introduces variational autoencoders (VAEs), which encode inputs as overlapping probability distributions in latent space, enabling meaningful image generation from any point and overcoming the limitations of standard autoencoders.

The video explores a fundamental question about neural networks: can they genuinely imagine something entirely new, rather than just retrieving or reconstructing existing data? This question is examined through the lens of an architecture called an autoencoder. An autoencoder works by taking an input image, compressing it into a much smaller representation, and then reconstructing the original image from this compressed form. The key insight is that if the reconstruction is accurate, the compressed representation must capture all the essential features of the input.

In the example given, the autoencoder is trained on 28x28 pixel images of handwritten digits. The encoder compresses each 784-pixel image down to just two numbers at a bottleneck layer called Z, and the decoder then reconstructs the image from those two numbers. The network learns by minimizing the difference between the original and reconstructed images; because everything must pass through the two-number bottleneck, it is forced to retain only the most important information. After training, the autoencoder can compress and reconstruct digits with impressive accuracy, demonstrating that it has learned the essential structure of the images.
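To make the shapes concrete, here is a minimal sketch of one forward pass through such a network. Only the 784-pixel input and the two-number bottleneck come from the video; the single linear layers, the random untrained weights, the mean squared error loss, and all names (`W_enc`, `W_dec`, `encode`, `decode`, `mse`) are assumptions chosen for illustration. A real autoencoder would use nonlinear layers and update its weights by gradient descent.

```python
import random

INPUT_DIM = 28 * 28   # 784 pixels per image (from the video)
LATENT_DIM = 2        # size of the bottleneck Z (from the video)

rng = random.Random(0)

def make_matrix(rows, cols):
    # Small random weights; an untrained stand-in for learned parameters.
    return [[rng.gauss(0.0, 0.01) for _ in range(cols)] for _ in range(rows)]

W_enc = make_matrix(LATENT_DIM, INPUT_DIM)   # encoder: 784 -> 2
W_dec = make_matrix(INPUT_DIM, LATENT_DIM)   # decoder: 2 -> 784

def matvec(W, v):
    # Plain matrix-vector product.
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def encode(x):
    # Compress a flattened image to a point in latent space.
    return matvec(W_enc, x)

def decode(z):
    # Reconstruct a flattened image from a latent point.
    return matvec(W_dec, z)

def mse(a, b):
    # Assumed reconstruction loss: mean squared pixel difference.
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)

x = [rng.random() for _ in range(INPUT_DIM)]  # fake image, pixels in [0, 1)
z = encode(x)          # two numbers
x_hat = decode(z)      # 784 numbers
loss = mse(x, x_hat)   # the quantity training would drive down
```

Training would repeat this pass over many images and adjust `W_enc` and `W_dec` to reduce `loss`; that step is omitted here.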

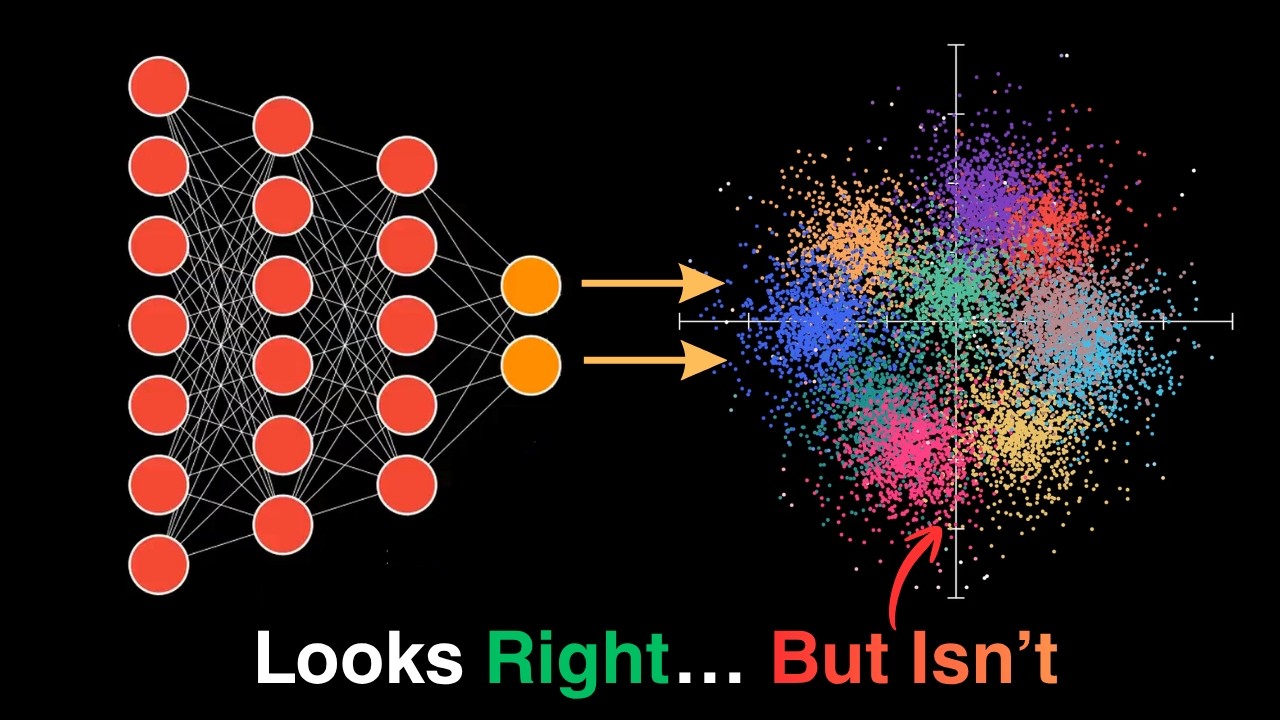

The video then visualizes the latent space formed by encoding all 60,000 training images into two-dimensional points. Remarkably, the network organizes these points into clusters corresponding to different digits, despite having no labels or explicit knowledge of digit categories. This latent space acts as a geometric map where points close together decode to visually similar digits. However, between these clusters lie vast empty regions: areas of latent space that no training image was ever mapped to, so the decoder never saw them during training.

When the decoder is asked to generate images from points in these empty regions, it produces nonsensical outputs. This happens because the network was only trained to reconstruct images from points within the clusters, and it extrapolates poorly outside this domain. Thus, while the autoencoder excels at compression and reconstruction, it cannot genuinely invent new images by sampling arbitrary points in the latent space, as much of the space remains unstructured and meaningless.

To address this limitation, the video introduces the concept of variational autoencoders (VAEs). Unlike traditional autoencoders that encode each image as a single point, VAEs encode images as probability distributions, creating overlapping “clouds” in the latent space. This overlap fills the empty regions, allowing the decoder to generate meaningful images from any point in the space. The video promises to explore VAEs in more detail in the next part, focusing on how they enable genuine image generation and creativity in neural networks.
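The difference between encoding to a point and encoding to a "cloud" can be sketched in a few lines. The video has not yet given the details, so this assumes the common VAE formulation in which the encoder outputs a mean and a log-variance per latent dimension and a latent point is drawn as mean plus standard deviation times Gaussian noise. The function name `sample_latent` and the example values for `mu` and `log_var` are hypothetical.

```python
import math
import random

def sample_latent(mu, log_var, rng):
    # Draw z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(0.5 * log_var).
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

rng = random.Random(0)

# Hypothetical encoder output for one image: a 2-D Gaussian, not a single point.
mu = [1.5, -0.5]        # center of the cloud
log_var = [-2.0, -2.0]  # log of the variance in each latent dimension

# Encoding the same image repeatedly gives different nearby latent points.
samples = [sample_latent(mu, log_var, rng) for _ in range(1000)]
mean_z0 = sum(s[0] for s in samples) / len(samples)
```

Because each image now covers a small region rather than one point, the decoder is trained on the neighborhood around every encoding, and neighboring clouds can overlap.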