Flow matching is a physics-inspired generative modeling approach that transforms simple noise into complex images by learning a time-dependent velocity field, grounded in the continuity equation and optimal transport theory. The method simplifies training through conditional velocity fields and generates samples by solving an ODE, which can make image generation faster and more stable than with traditional diffusion models.

Flow matching is a cutting-edge approach to image and video generation that has gained popularity in recent models such as Seedance, FLUX, and Stable Diffusion 3. Unlike traditional diffusion models, which need many steps to refine noisy images, flow matching can achieve similar results in fewer steps by learning a time-dependent velocity field that transports a simple initial density into the complex data distribution. The method is grounded in physics, particularly the continuity equation, which describes how probability mass moves through space and time, analogous to conserved quantities like electric charge or flowing water.
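
For reference, the continuity equation mentioned above is usually written as follows. The symbols are notation chosen here for illustration, not taken from the original text: $p_t(x)$ is the probability density at time $t$, and $v_t(x)$ is the velocity field.

$$
\frac{\partial p_t(x)}{\partial t} + \nabla \cdot \big( p_t(x)\, v_t(x) \big) = 0
$$

Read it as a conservation statement: the density at a point changes only through the net flow of probability mass into or out of that point, so mass is moved around but never created or destroyed.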

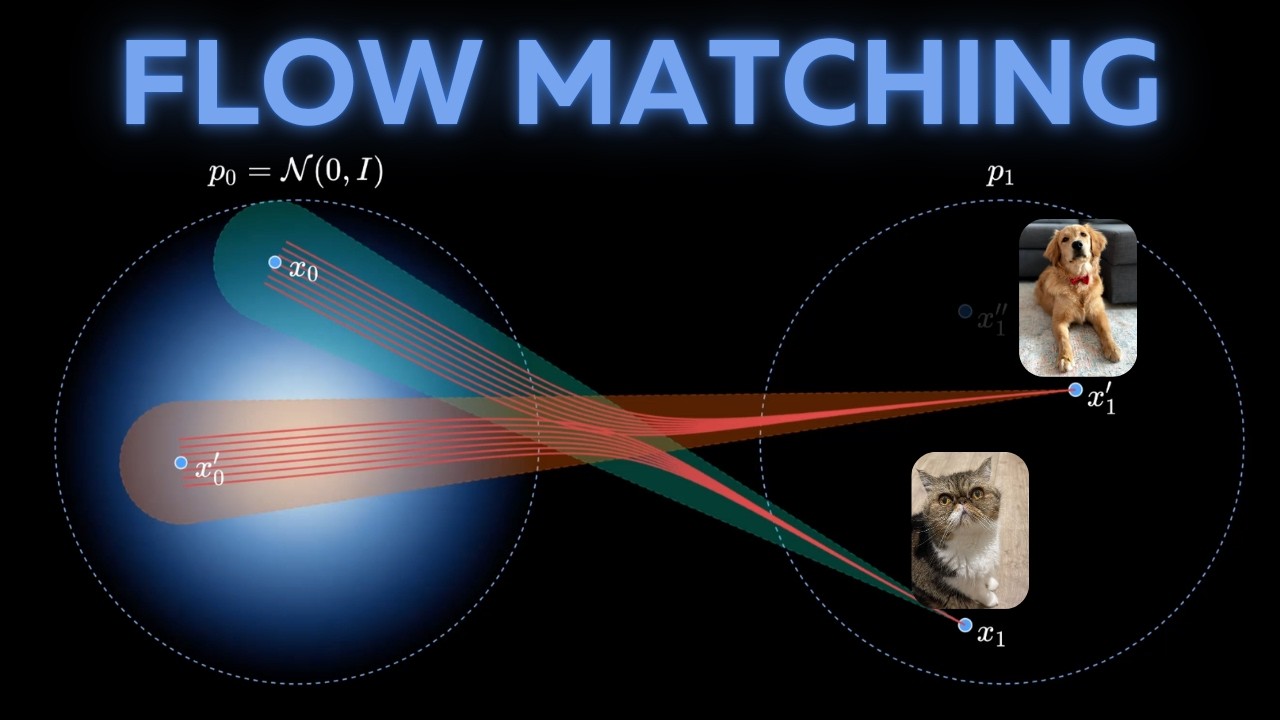

The core idea behind flow matching is to model the gradual transformation of a simple source density $p_0$ into the target data density $p_{\text{data}}$ over a fictitious time interval, conventionally from $t = 0$ to $t = 1$. This transformation is represented by a velocity field that guides particles from noise to well-formed images. Training the model involves learning this velocity field by minimizing the mean squared error between the model's predicted velocity and supervised velocity labels derived from optimal transport theory. These labels correspond to straight-line paths between noise samples and real images, which represent the most efficient way to transport one Gaussian distribution into another.
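
The training recipe described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the helper names `flow_matching_batch` and `mse_loss` are hypothetical, the "images" are random vectors standing in for real data, and the "model" is a placeholder that always predicts zero velocity.

```python
import numpy as np

def flow_matching_batch(x1, rng):
    """Build one training batch from data samples x1 (hypothetical helper).

    Returns the interpolated points, their times, and the velocity labels.
    """
    x0 = rng.standard_normal(x1.shape)        # noise samples from the source density
    t = rng.uniform(size=(x1.shape[0], 1))    # one time per example, drawn from [0, 1)
    xt = (1.0 - t) * x0 + t * x1              # point on the straight line from x0 to x1
    target = x1 - x0                          # constant velocity along that line
    return xt, t, target

def mse_loss(predicted, target):
    """Mean squared error between predicted and labeled velocities."""
    return float(np.mean((predicted - target) ** 2))

rng = np.random.default_rng(0)
x1 = rng.standard_normal((8, 4))              # stand-in for a batch of flattened images
xt, t, target = flow_matching_batch(x1, rng)

# Placeholder "model": predicts zero velocity everywhere (assumption for illustration).
predicted = np.zeros_like(target)
loss = mse_loss(predicted, target)
```

In a real setup the placeholder prediction would come from a neural network that takes `xt` and `t` as inputs, and `loss` would be minimized by gradient descent.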

A key insight of flow matching is its divide-and-conquer training strategy. Instead of learning the complex unconditional velocity field directly, the model learns conditional velocity fields that transport the source density into Gaussians centered on individual training images. Although the training paths appear simplistic and independent, the model implicitly learns the marginal velocity field that governs the overall data distribution. This happens because the mean squared error loss averages conflicting velocity labels at intersecting paths, resulting in curved trajectories that better approximate the true velocity field.
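
The averaging argument can be made concrete with one formula. Assume the straight-line interpolation $x_t = (1 - t)\,x_0 + t\,x_1$ between a noise sample $x_0$ and a training image $x_1$ (notation introduced here for illustration). Because the minimizer of a mean squared error is a conditional expectation, the velocity the model converges to at a point $x$ is the average of the conditional labels of all paths passing through that point at time $t$:

$$
v_t(x) = \mathbb{E}\left[\, x_1 - x_0 \mid x_t = x \,\right]
$$

Where only one path passes through $x$, this reduces to that path's straight-line label. Where several paths cross, the average bends the resulting trajectory.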

The inference process in flow matching involves solving an ordinary differential equation (ODE) that moves particles along the learned velocity field from noise to images. The number of inference steps depends on the curvature of the velocity field: straighter paths require fewer steps, while more curved paths need finer discretization. A related method called rectified flow improves on this by iteratively refining the training pairs to reduce path intersections and curvature, potentially enabling one-step image generation that combines the speed of GANs with the stability of flow matching.
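
The link between curvature and step count can be illustrated with a plain Euler integrator. This is a sketch under stated assumptions: `euler_sample` is a hypothetical helper, and the two velocity fields are hand-written toy examples standing in for a learned model. One field is constant (perfectly straight paths) and the other rotates points by a quarter turn (curved paths).

```python
import numpy as np

def euler_sample(velocity, x0, num_steps):
    """Integrate dx/dt = velocity(x, t) from t = 0 to t = 1 with Euler steps."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        x = x + dt * velocity(x, t)
    return x

def straight_field(x, t):
    """Toy field with constant velocity, so every trajectory is a straight line."""
    return np.full_like(x, 2.0)

def rotating_field(x, t):
    """Toy 2D field that rotates a point by 90 degrees over the unit time interval."""
    return (np.pi / 2.0) * np.array([-x[1], x[0]])

# Straight paths: one Euler step already lands on the exact endpoint.
straight_one = euler_sample(straight_field, np.zeros(3), 1)
straight_many = euler_sample(straight_field, np.zeros(3), 100)

# Curved paths: the exact endpoint of (1, 0) is (0, 1); coarse steps overshoot.
exact = np.array([0.0, 1.0])
error_one = np.linalg.norm(euler_sample(rotating_field, [1.0, 0.0], 1) - exact)
error_many = np.linalg.norm(euler_sample(rotating_field, [1.0, 0.0], 100) - exact)
```

For the straight field, one step and one hundred steps agree. For the rotating field, the single-step error is large and shrinks as the discretization gets finer, which is the behavior rectified flow tries to avoid by straightening the paths.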

Overall, flow matching offers a mathematically elegant and physically grounded framework for generative modeling that simplifies training and accelerates inference compared to previous flow-based methods. Its success lies in leveraging optimal transport theory, the continuity equation, and a clever training scheme that balances conditional and marginal velocity fields. While the equivalence between flow matching and diffusion models remains an open topic, flow matching stands out as a promising direction for future research and practical applications in generative AI.