The video compares convolution and self-attention in image processing. Convolution aggregates information locally with a kernel whose weights are the same at every position and do not depend on the image content, whereas self-attention lets each pixel gather information from every pixel in the image, weighted by attention scores computed from the content itself. Self-attention therefore has a global receptive field and is better suited to capturing long-range dependencies than the local scope of a convolution.

The video explains the conceptual relationship between convolution and self-attention in the context of image processing. Both can be seen as transformations that map an image to a new image, but they differ in how they aggregate information from pixels. In convolution, each output pixel is computed from its local neighborhood only: a small kernel, applied identically at every position, blends the values of nearby pixels. This local approach is effective for capturing spatial patterns such as edges and textures, but a single layer cannot relate pixels that lie farther apart than the kernel size.
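
The local blending described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the video's own code: the function name `conv2d_same`, the zero padding, and the 3×3 box-blur kernel are assumptions chosen for the example. As in most deep learning libraries, the kernel is applied without flipping (strictly a cross-correlation), which makes no difference for a symmetric kernel.

```python
def conv2d_same(image, kernel):
    """Apply one kernel at every position of a 2D grayscale image.

    Assumes stride 1 and zero padding, so the output has the input's shape.
    """
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2  # how far the kernel reaches from its center
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            total = 0.0
            # Only pixels inside the kernel window contribute to out[i][j].
            for di in range(kh):
                for dj in range(kw):
                    y, x = i + di - ph, j + dj - pw
                    if 0 <= y < h and 0 <= x < w:
                        total += kernel[di][dj] * image[y][x]
            out[i][j] = total
    return out


# Toy input: a 5x5 image with a single bright pixel in the center.
image = [[0.0] * 5 for _ in range(5)]
image[2][2] = 9.0

box_blur = [[1 / 9] * 3 for _ in range(3)]  # same weights at every position
blurred = conv2d_same(image, box_blur)
```

After one pass, the bright pixel has spread only to its eight neighbors; the corners of `blurred` are still zero because they lie outside every window that contains the center.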

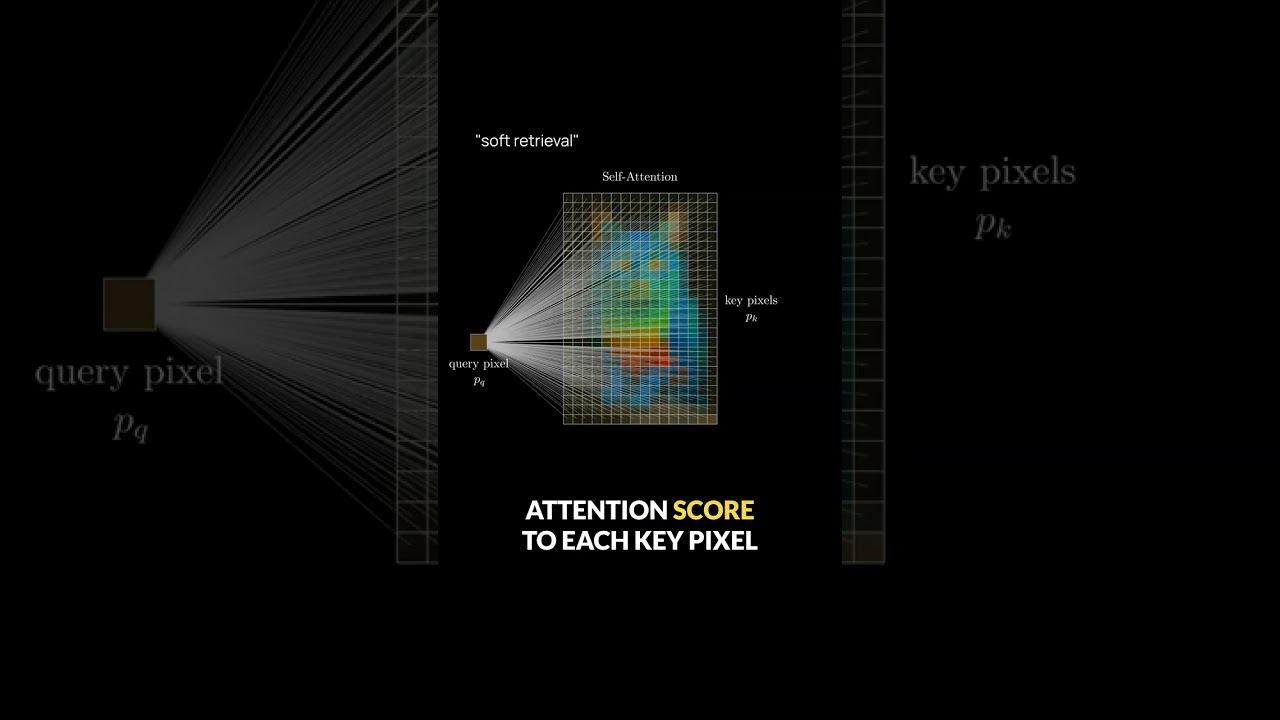

Self-attention, on the other hand, allows each pixel (the query pixel) to consider information from every pixel in the image (the key pixels). The terminology comes from search engines, where a query retrieves relevant items from a database. Here, the image acts as a database of pixels, and the query pixel retrieves the pixels most relevant to its output. Unlike traditional database retrieval, which returns only the top-k items (hard retrieval), self-attention performs soft retrieval: it assigns every key pixel a weight, or attention score, according to its relevance to the query.
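
To make the contrast between hard and soft retrieval concrete, here is a small sketch. The function names and the relevance values are hypothetical, and the softmax used for the soft weights is an assumption (it is the usual choice in attention layers).

```python
import math


def hard_retrieval(scores, k):
    """Return the indices of the k highest-scoring items; the rest are dropped."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]


def soft_retrieval(scores):
    """Return one weight per item: all positive, summing to one (softmax)."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


relevance = [2.0, 0.5, 1.0, -1.0]  # made-up relevance of four key pixels

top2 = hard_retrieval(relevance, k=2)
weights = soft_retrieval(relevance)
```

Hard retrieval keeps only items 0 and 2 and ignores the others entirely, while soft retrieval keeps all four items and simply gives the less relevant ones smaller weights.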

The attention scores are determined by a similarity measure, such as the dot product, between the query and key pixels in a vector space. The raw similarities are normalized, typically with a softmax, so that the attention scores are positive and sum to one. The output for each query pixel is then the weighted sum of all key pixels under these scores. This mechanism allows the model to aggregate information from anywhere in the image, rather than being restricted to a local neighborhood.
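
As a sketch of this computation, write $x_i$ for the vector of pixel $i$ and $N$ for the number of pixels (both symbols are introduced here for illustration). Assuming dot-product similarity and softmax normalization, the attention scores $\alpha_{ij}$ and the outputs $y_i$ could be written as:

$$
\alpha_{ij} = \frac{\exp(x_i \cdot x_j)}{\sum_{l=1}^{N} \exp(x_i \cdot x_l)},
\qquad
y_i = \sum_{j=1}^{N} \alpha_{ij}\, x_j,
\qquad
\alpha_{ij} > 0, \quad \sum_{j=1}^{N} \alpha_{ij} = 1 .
$$

Each output $y_i$ is therefore a convex combination of the pixel vectors, with the largest weights on the pixels most similar to pixel $i$.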

To make this process learnable, neural networks apply linear transformations to the pixel vectors. Each pixel is passed through three learned matrices: a query matrix, applied when the pixel acts as the query; a key matrix, shared among all key pixels and used to compute the similarities; and a value matrix, which produces the vectors that are actually summed to form the output. Because these matrices are learned, the network itself determines how information should be aggregated, and the resulting weights depend on the image content rather than being fixed in advance as in a convolutional kernel.
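
The following sketch puts these pieces together for a tiny flattened image. It is an illustration under stated assumptions rather than a reference implementation: the helper names are hypothetical, the matrices are hand-picked identity matrices standing in for learned weights, and the scaling of the scores by the square root of the key dimension follows common Transformer practice, which the video summary does not mention.

```python
import math


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def self_attention(pixels, W_q, W_k, W_v):
    """pixels: one feature vector per pixel (the image flattened to a list)."""
    queries = [matvec(W_q, p) for p in pixels]
    keys = [matvec(W_k, p) for p in pixels]    # W_k is shared by all pixels
    values = [matvec(W_v, p) for p in pixels]
    scale = 1.0 / math.sqrt(len(keys[0]))      # assumed scaling, see lead-in
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, then soft retrieval.
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
        weights = softmax(scores)
        # Output: weighted sum of the value vectors of all pixels.
        out = [sum(w * v[c] for w, v in zip(weights, values))
               for c in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy 2x2 image flattened to 4 pixels with 3 channels each.
pixels = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
outputs, weights = self_attention(pixels, identity, identity, identity)
```

In this toy input, pixels 0 and 2 have identical features, so pixel 0 attends to pixel 2 exactly as strongly as to itself, and more strongly than to pixels 1 and 3. In a trained network the three matrices would differ from one another and be fitted by gradient descent.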

When comparing convolution and self-attention directly, the most significant difference is the receptive field. A convolutional layer is limited to local interactions: within a single layer, each pixel interacts only with the pixels inside its kernel window, and distant pixels can influence each other only after many layers have been stacked. In contrast, self-attention has a global receptive field, allowing any pixel to attend to any other pixel in the image in a single step. This distinction gives self-attention greater expressive power, especially for capturing long-range dependencies in images.
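
A rough back-of-the-envelope calculation illustrates the gap. The sketch below assumes a plain stack of convolutions with an odd kernel size, stride 1, and no dilation or pooling (real architectures use such tricks to grow the receptive field faster), and the function names are hypothetical.

```python
import math


def conv_receptive_field(num_layers, kernel_size):
    """Width of the input region seen by one output pixel after stacking layers.

    Assumes stride 1 and no dilation: each layer adds (kernel_size - 1).
    """
    return 1 + num_layers * (kernel_size - 1)


def layers_for_global_conv(image_size, kernel_size):
    """Layers needed before a corner pixel can be influenced by the far corner.

    Each layer extends the reach by the kernel radius, (kernel_size - 1) // 2.
    """
    radius = (kernel_size - 1) // 2
    return math.ceil((image_size - 1) / radius)


rf_one_layer = conv_receptive_field(num_layers=1, kernel_size=3)
rf_two_layers = conv_receptive_field(num_layers=2, kernel_size=3)

layers_3x3 = layers_for_global_conv(image_size=224, kernel_size=3)
layers_7x7 = layers_for_global_conv(image_size=224, kernel_size=7)
layers_attention = 1  # every pixel attends to every pixel in a single layer
```

Under these assumptions, a stack of 3×3 convolutions needs hundreds of layers before opposite corners of a 224×224 image can interact, whereas a single self-attention layer connects them directly.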