The video examines JSON prompting for language models, highlights its token inefficiency, and introduces TOON (Token-Oriented Object Notation), a token-efficient alternative that encodes JSON data more compactly while preserving its structure and works best on uniform, shallow arrays. Despite some limitations and mixed opinions on JSON prompting overall, TOON shows promise in reducing token costs and improving LLM performance, and the video encourages viewers to try it for large structured data inputs.

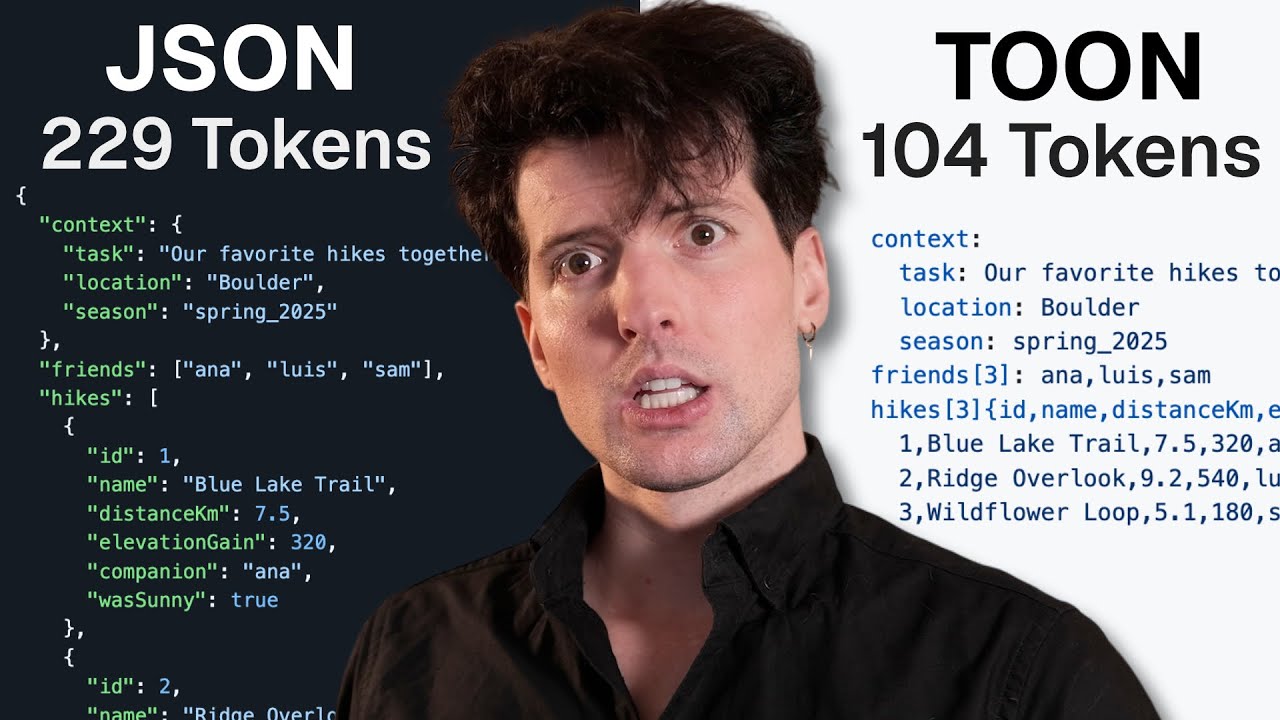

The video explores JSON prompting for large language models (LLMs), a technique where instructions or data are formatted as JSON rather than as plain-text sentences. The idea is that JSON's structured, consistent format might help LLMs understand and process inputs. However, the presenter points out a major drawback: JSON is token-heavy, using more tokens than plain text or YAML for the same content, which raises processing costs and reduces efficiency. To address this, the video introduces TOON (Token-Oriented Object Notation), a format designed as a more token-efficient alternative to JSON for LLM inputs.
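To make the contrast concrete, here is a minimal sketch of the same instruction written as a plain sentence and as a JSON prompt. The task and its field names are hypothetical, invented for illustration, and the count of JSON punctuation is only a rough stand-in for token overhead, not a real tokenizer measurement.

```python
import json

# Hypothetical task, invented for illustration only.
plain_prompt = (
    "Summarize the attached article in three bullet points "
    "for a non-technical audience, in a neutral tone."
)

# The same request as a JSON prompt (field names are assumptions).
json_prompt = json.dumps(
    {
        "task": "summarize",
        "input": "attached article",
        "format": "bullet points",
        "count": 3,
        "audience": "non-technical",
        "tone": "neutral",
    },
    indent=2,
)


def structural_chars(text: str) -> int:
    """Count occurrences of the punctuation that JSON uses as syntax."""
    return sum(text.count(ch) for ch in '{}[]":,')


print(json_prompt)
print("plain prompt, JSON-style punctuation:", structural_chars(plain_prompt))
print("JSON prompt, JSON-style punctuation: ", structural_chars(json_prompt))
```

The braces, quotes, colons, and commas carry no task information of their own, which is the overhead the presenter is pointing at.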

TOON is presented as a lossless, drop-in replacement for JSON that significantly reduces token usage (sometimes by 40 to 60 percent) while maintaining readability and structure. The video demonstrates how the same JSON data can be encoded in fewer tokens, making it cheaper for LLMs to process. However, the presenter also notes that the savings depend on the data structure: TOON works best with uniform, shallow arrays, where every object has the same fields, and struggles with deeply nested or non-uniform data. Despite these limitations, TOON shows promise as a practical tool for passing large amounts of structured data to language models more efficiently.
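The reason uniform arrays compress so well can be sketched in a few lines of Python. The sketch assumes the header-plus-rows layout `name[N]{fields}:` that the token-oriented notation uses for uniform arrays. It is a simplified illustration, not the official encoder: the helper names `is_uniform_flat` and `encode_tabular` are hypothetical, and quoting and escaping are left out.

```python
import json


def is_uniform_flat(rows) -> bool:
    """True if rows is a non-empty list of dicts with identical keys and no nested values."""
    if not isinstance(rows, list) or not rows:
        return False
    if not all(isinstance(r, dict) for r in rows):
        return False
    keys = list(rows[0].keys())
    for r in rows:
        if list(r.keys()) != keys:
            return False
        if any(isinstance(v, (dict, list)) for v in r.values()):
            return False
    return True


def encode_tabular(name: str, rows) -> str:
    """Write the field names once in a header, then one comma-separated row per object.

    Simplified: no quoting or escaping, so values must not contain commas or newlines.
    """
    if not is_uniform_flat(rows):
        raise ValueError("tabular form needs a uniform array of flat objects")
    keys = list(rows[0].keys())
    header = f"{name}[{len(rows)}]{{{','.join(keys)}}}:"
    lines = ["  " + ",".join(str(r[k]) for k in keys) for r in rows]
    return "\n".join([header, *lines])


# Hypothetical sample data.
users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
]

encoded = encode_tabular("users", users)
print(encoded)
print(len(json.dumps(users)), "chars as JSON vs", len(encoded), "chars in tabular form")
```

JSON repeats every key in every object, while the tabular form states the keys once. That is also why the gain shrinks for nested or non-uniform data: when objects do not share one flat set of fields, no single header can describe them.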

The video also touches on the broader debate around JSON prompting itself. While some advocate for JSON prompting as a way to improve AI output precision, others argue it is overhyped and can lead to failures, especially with complex nested data. The presenter highlights the mixed opinions and the lack of definitive benchmarks, emphasizing that JSON prompting is not a silver bullet. Instead, the video suggests that better evaluation methods and more nuanced approaches are needed to truly improve LLM performance with structured data.

The video then discusses benchmark results comparing JSON, TOON, YAML, and other formats on token cost and accuracy across different LLMs. Notably, newer models such as Gemini 2.5 Flash and GPT-5 Nano outperform others at retrieving information from large datasets, and TOON shows token-efficiency advantages for certain data types. The video also warns against using XML for LLM inputs because of its inefficiency and poor performance. Overall, the benchmarks reinforce that while TOON is not perfect, it offers a meaningful improvement in specific scenarios, especially with large, uniform arrays of data.
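A rough way to see why XML fares badly on cost is to serialize the same records in several formats and compare sizes. The sketch below is not the benchmark from the video: the records are made up, CSV is included only as a compact reference point, and character count is used as a crude proxy for token count, so a real comparison would use the target model's tokenizer.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# Hypothetical records, invented for illustration.
records = [
    {"id": i, "name": f"user{i}", "active": i % 2 == 0}
    for i in range(1, 51)
]


def to_json(rows) -> str:
    """Compact JSON with no extra whitespace."""
    return json.dumps(rows, separators=(",", ":"))


def to_xml(rows) -> str:
    """One <record> element per row, one child element per field."""
    root = ET.Element("records")
    for row in rows:
        item = ET.SubElement(root, "record")
        for key, value in row.items():
            ET.SubElement(item, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


def to_csv(rows) -> str:
    """Header line with field names, then one line per row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


sizes = {
    "json": len(to_json(records)),
    "xml": len(to_xml(records)),
    "csv": len(to_csv(records)),
}
for fmt, size in sorted(sizes.items(), key=lambda kv: kv[1]):
    print(f"{fmt:>4}: {size} chars")
```

XML pays for each field name twice per value (opening and closing tag), JSON pays once per value, and a header-based layout pays once per dataset.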

In conclusion, the presenter admits to initial skepticism about both TOON and JSON prompting but ultimately finds TOON a surprisingly useful tool for working with LLMs. Simple CLI tools and libraries for converting JSON to TOON make it accessible and practical. The video encourages viewers to try TOON when passing large JSON arrays to language models, to save tokens and potentially improve accuracy. The presenter invites feedback from the community on whether TOON is overrated or genuinely valuable, signaling an open-minded approach to evolving best practices in AI prompting.