The video covers President Trump’s directive for U.S. agencies to stop using Anthropic’s AI products after the company, citing its ethical guardrails, refused to allow its technology to be used for mass surveillance and autonomous weapons. Expert Alondra Nelson explains that the move reflects a clash between government demands and Anthropic’s commitment to responsible AI, and notes a reported six-month transition period for agencies to phase out the tools.

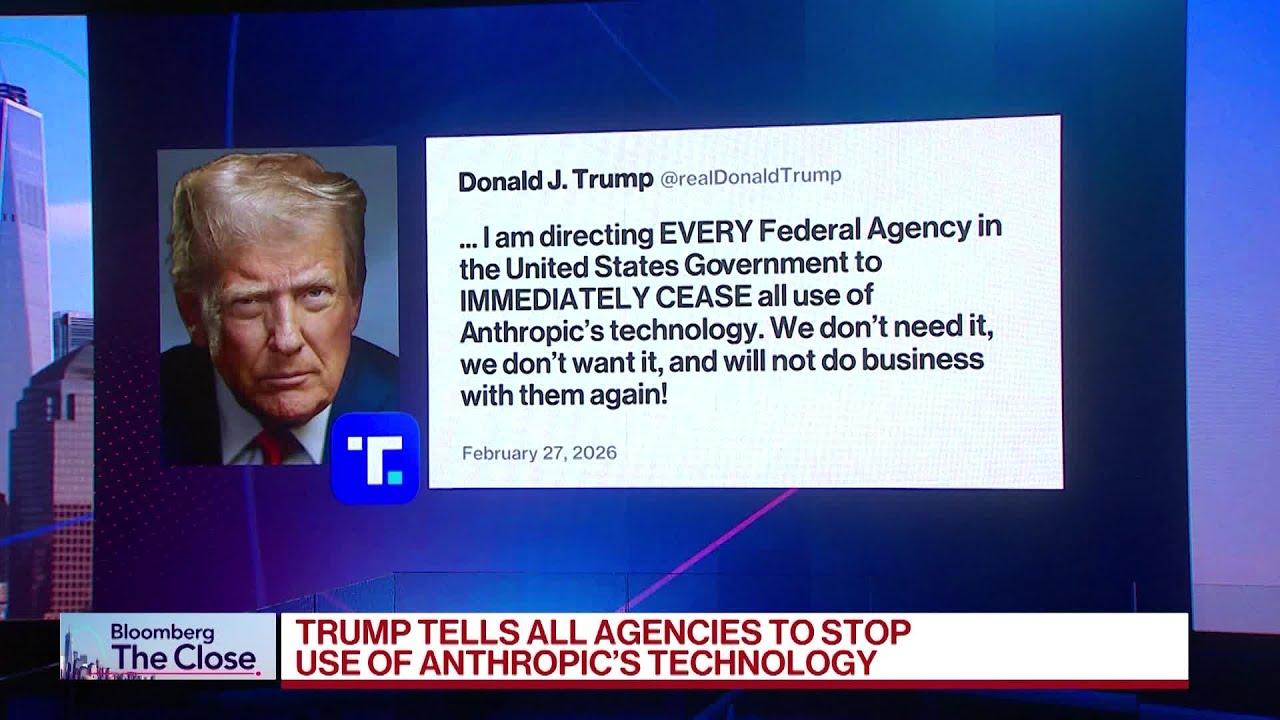

The video discusses recent tensions between the AI company Anthropic and the U.S. Department of Defense, culminating in President Trump’s directive for all U.S. agencies to immediately stop using Anthropic’s technology. Anthropic, founded as a responsible AI company with a focus on safety and ethical guardrails, has been in conflict with the Defense Department over how its tools may be used, particularly for mass surveillance of citizens and the deployment of autonomous weapons without human oversight.

Alondra Nelson, a member of the U.N. High-level Advisory Body on Artificial Intelligence and a former Biden administration official, is interviewed to provide context. She explains that Anthropic was created by a group that split from OpenAI over concerns about AI safety and ethical boundaries. The company has set clear red lines for its government contracts, refusing to allow its technology to be used for autonomous weapons or mass surveillance of American society.

Nelson points out that, until recently, it was standard practice for companies to set the terms of how their products could be used, and clients could choose to accept or reject those terms. President Trump’s decision to stop using Anthropic’s products is framed as a rejection of these terms, rather than a reflection on the quality of the technology itself.

The discussion highlights that Anthropic’s tools are considered highly effective and reliable, which is why their use by the government has become a contentious issue. The company’s focus has primarily been on enterprise solutions, and its reputation for building in ethical guardrails has made its products attractive in both public and private sectors. The debate underscores the broader challenges of balancing technological advancement with ethical considerations in AI deployment.

Finally, Nelson notes that there is reportedly a six-month transition period for federal agencies to phase out Anthropic’s tools, suggesting that the situation could evolve further. She also mentions that the company’s future remains uncertain, especially given recent fluctuations in software stock prices and the competitive landscape. The outcome will depend on how both the government and Anthropic navigate these next several months.