The video introduces Marble, a multimodal world model from World Labs that creates controllable, hyperrealistic 3D environments from text, images, and videos. These environments support interactive simulations for entertainment and for training AI systems. The video presents Marble as a step toward artificial general intelligence because it mirrors the multimodal way humans perceive the world. It also includes a live demo of the user-friendly platform and a sponsor segment from a cloud provider offering accessible GPU resources.

The video describes Marble as the first fully controllable multimodal frontier world model. It was developed by World Labs under Dr. Fei-Fei Li, a renowned AI researcher. Large language models predict the next word in a sentence. World models like Marble instead predict what the world will look like, including its physics, lighting, and spatial layout. World Labs calls this capability spatial intelligence: the ability to reconstruct, generate, and simulate 3D worlds that both humans and AI agents can interact with. Such interaction matters for entertainment, such as video games and films. It also matters for training embodied agents, such as robots, in virtual environments, where learning can scale without depending solely on costly real-world data collection.

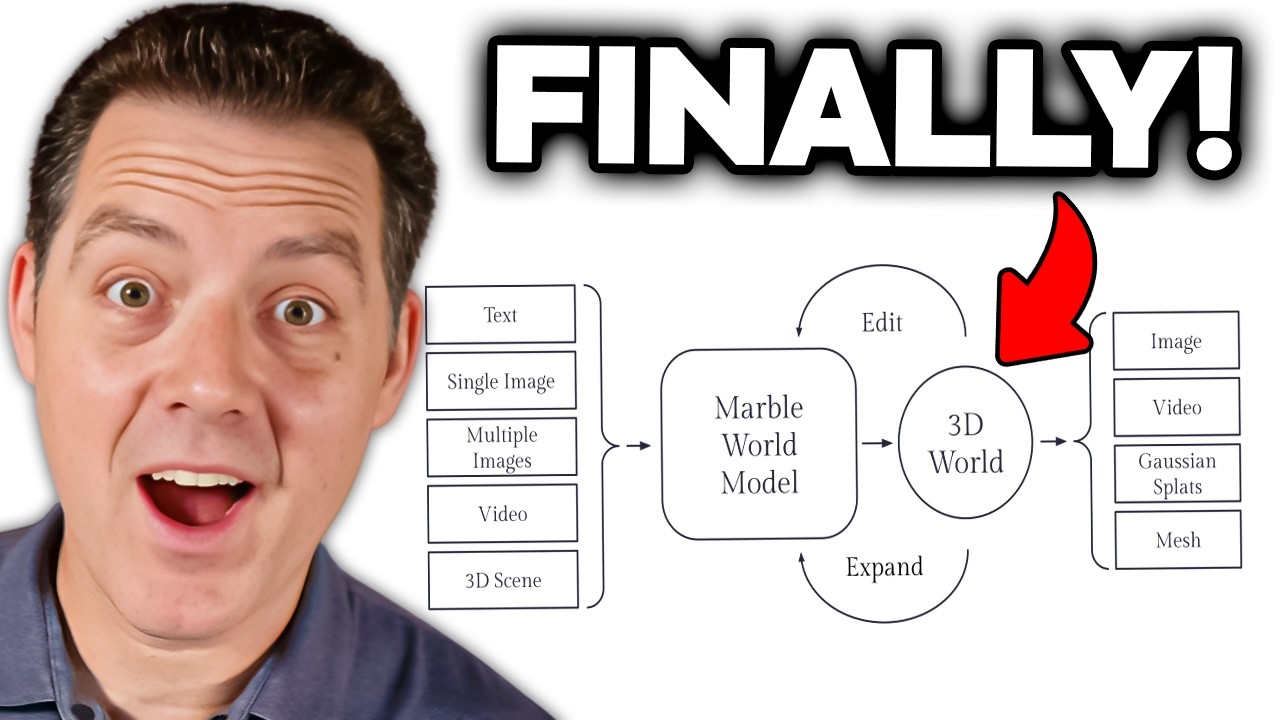

Marble is now generally available to the public. It accepts multimodal inputs, including text, images, videos, and coarse 3D layouts, and uses them to create detailed 3D worlds. Users can generate a world from a simple prompt or from several images. They can then navigate, edit, or expand that world while keeping it consistent. The model exports worlds in several formats, such as Gaussian splats, meshes, or videos, which gives flexibility for visualization and further use. The resulting environments are hyperrealistic and editable. Examples in the video include remodeling a kitchen and transforming objects within a scene, which show both creative and practical applications.

The video emphasizes the philosophical and technical reasons why Dr. Fei-Fei Li and her team prioritize world models over language models as a path to artificial general intelligence (AGI). Human perception is inherently multimodal: it integrates sight, sound, touch, and language into a comprehensive mental model of the world. Marble mirrors this by combining multiple input modalities into rich, interactive 3D representations. The team argues that this holistic approach is essential for building AI systems that reason about and act in the real world more effectively than language models alone.

A live demonstration on Marble.worldlabs.ai shows users navigating futuristic neon-lit hallways and space capsules. It also shows a recreation of a real-world space, an office, generated from a single uploaded image. The system generates detailed 3D environments and can write descriptive prompts based on uploaded images. Users can explore and edit these worlds and share their creations in a social-network-like environment. The platform offers free access with credits as well as paid tiers with expanded features, making it accessible to both casual users and professionals.

Finally, the video is sponsored by Vultr, a global cloud provider offering GPU resources optimized for AI projects. Vultr advertises competitive pricing, low latency across multiple continents, and scalable infrastructure such as Vultr Kubernetes Engine. The sponsor's offer includes $300 in credits for new users, which lowers the barrier for developers and researchers who want to experiment with AI technologies like Marble. The video closes by encouraging viewers to try Marble, share their thoughts, and subscribe for more updates on AI developments.