The video highlights Apple’s Neural Engine as a key indicator of the industry’s shift toward on-device AI processing, emphasizing Apple’s unique vertically integrated chip design that enhances performance, privacy, and efficiency. It contrasts cloud-based and on-device AI approaches, concluding that Apple’s long-term commitment to embedded AI hardware positions it to lead the future of computing by enabling seamless, private, and powerful AI capabilities directly on consumer devices.

The video discusses the significance of Apple’s Neural Engine in the new MacBook Pro, emphasizing a broader narrative beyond the usual talking points like CPU speed or Geekbench scores. The Neural Engine, a specialized AI processor, has been growing in power and importance since its introduction in 2017. Apple, along with other major chipmakers like Qualcomm and Intel, is dedicating more transistors to AI components, signaling a major industry shift toward on-device AI processing. This trend reflects a strategic bet on a future of computing in which AI capabilities are integrated directly into consumer devices rather than relying solely on cloud-based AI.

Apple’s approach to chip design is unique because it controls the entire technology stack, from silicon to software. Unlike traditional PC manufacturers, who buy chips from companies like Intel and then build their products around them, Apple designs its own chips, memory architecture, operating systems, and developer frameworks. This vertical integration allows Apple to optimize performance and efficiency in ways others cannot. For example, Apple’s unified memory architecture lets the CPU and GPU work from the same pool of memory, so data does not have to be copied between them, which improves speed and reduces power consumption. The Neural Engine benefits from this integration by sharing the same memory and software frameworks, enabling seamless AI processing on the device.

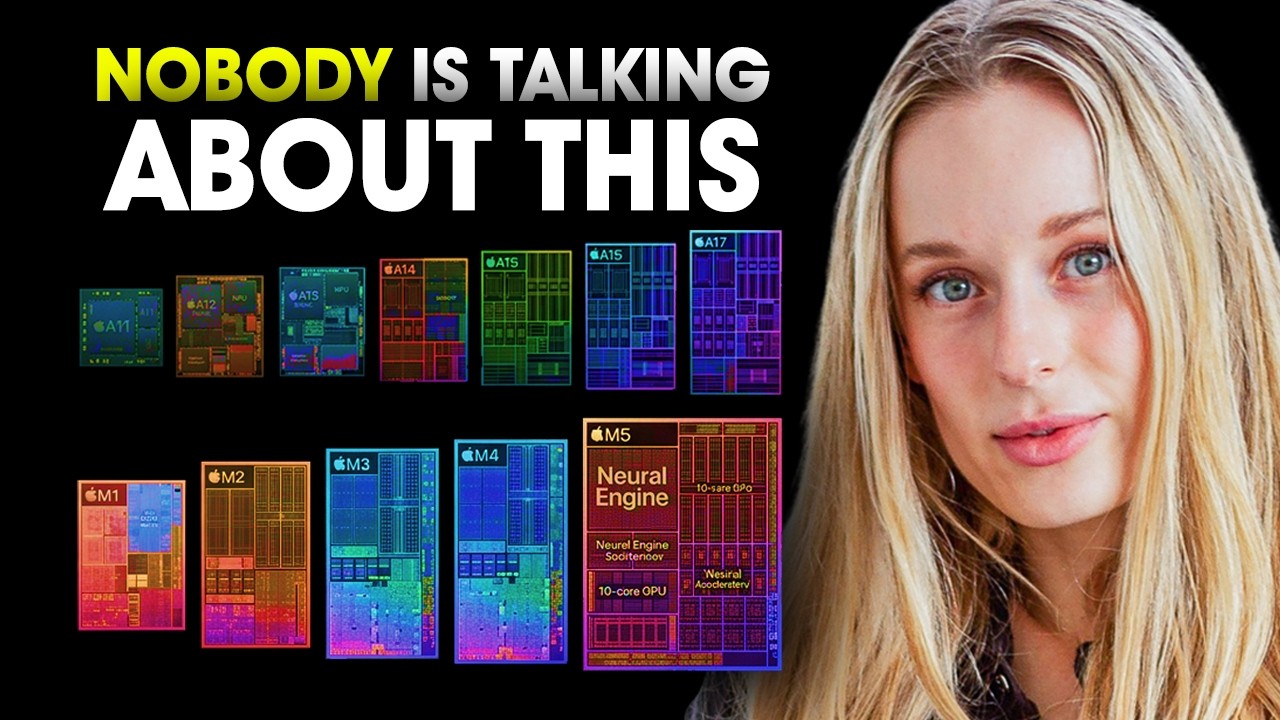

The evolution of Apple’s Neural Engine is remarkable: it has grown from a modest two-core design in 2017 to a powerful 16-core engine capable of trillions of operations per second in the latest M5 chip. Apple has steadily increased the Neural Engine’s capabilities while also embedding neural accelerators directly into GPU cores, allowing AI workloads to run natively on multiple parts of the chip. This design decision, possible only through Apple’s control of the entire hardware and software stack, significantly boosts AI performance and efficiency. The Neural Engine now powers a wide range of on-device AI tasks, from Face ID and photo editing to voice transcription and health data analysis.

The video also contrasts two competing philosophies in AI computing: cloud-based AI versus on-device AI. While cloud AI offers massive computational power through data centers, it comes with latency, privacy concerns, and ongoing costs. Apple champions on-device AI, emphasizing privacy, speed, and independence from external servers. This approach allows AI to run locally on devices, keeping user data private and eliminating per-use costs. The future likely involves a hybrid model where some AI tasks run locally and others in the cloud, with developers deciding the best approach for each application. Apple’s investment in on-device AI hardware and software positions it strongly in this emerging landscape.
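
To make the hybrid model concrete, here is a minimal sketch of how a developer might decide, per task, whether to run on the device or in the cloud. It is only an illustration of the trade-offs described above, not anything shown in the video or part of any Apple framework: the `Task` fields, the `route` function, and the `LOCAL_BUDGET_TOPS` threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """A hypothetical description of one AI request."""
    name: str
    sensitive: bool   # touches private user data (photos, health, biometrics)
    est_ops: float    # rough compute estimate, in trillions of operations


# Assumed, illustrative limit on how much work we are willing to run locally.
LOCAL_BUDGET_TOPS = 5.0


def route(task: Task, online: bool, local_budget: float = LOCAL_BUDGET_TOPS) -> str:
    """Return "device" or "cloud" for a task.

    Privacy and connectivity are checked first: sensitive data stays local,
    and without a connection there is no cloud option. Otherwise, only work
    that exceeds the local budget is sent to a data center.
    """
    if task.sensitive or not online:
        return "device"
    if task.est_ops <= local_budget:
        return "device"
    return "cloud"


if __name__ == "__main__":
    tasks = [
        Task("face unlock", sensitive=True, est_ops=0.1),
        Task("voice transcription", sensitive=False, est_ops=2.0),
        Task("long document summary", sensitive=False, est_ops=50.0),
    ]
    for t in tasks:
        print(f"{t.name}: {route(t, online=True)}")
```

In this sketch the privacy check comes before the compute check, which mirrors the on-device philosophy: private data never leaves the device, and the cloud is used only when a non-sensitive task is too large to run locally.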

In conclusion, the video argues that the true story behind Apple’s new MacBook Pro lies in its Neural Engine and the company’s long-term commitment to on-device AI. This commitment is evident in the chip’s design and the broader industry trend toward integrating AI capabilities directly into consumer hardware. The Neural Engine’s growth over eight years reflects a strategic vision that prioritizes privacy, efficiency, and user control. As AI software continues to mature, the gap between hardware potential and software capability will close, unlocking new possibilities for developers and users alike. The video encourages viewers to look beyond marketing and benchmarks to understand the deeper technological shifts shaping the next decade of computing.