The video covers a conflict between the Pentagon and Anthropic, where the U.S. government is pressuring the AI company to remove safety guardrails from its Claude model for military use, threatening severe consequences if they refuse. The creator criticizes this government overreach, supports Anthropic’s stance on maintaining ethical boundaries, and warns of the dangerous precedent such coercion sets for responsible AI development.

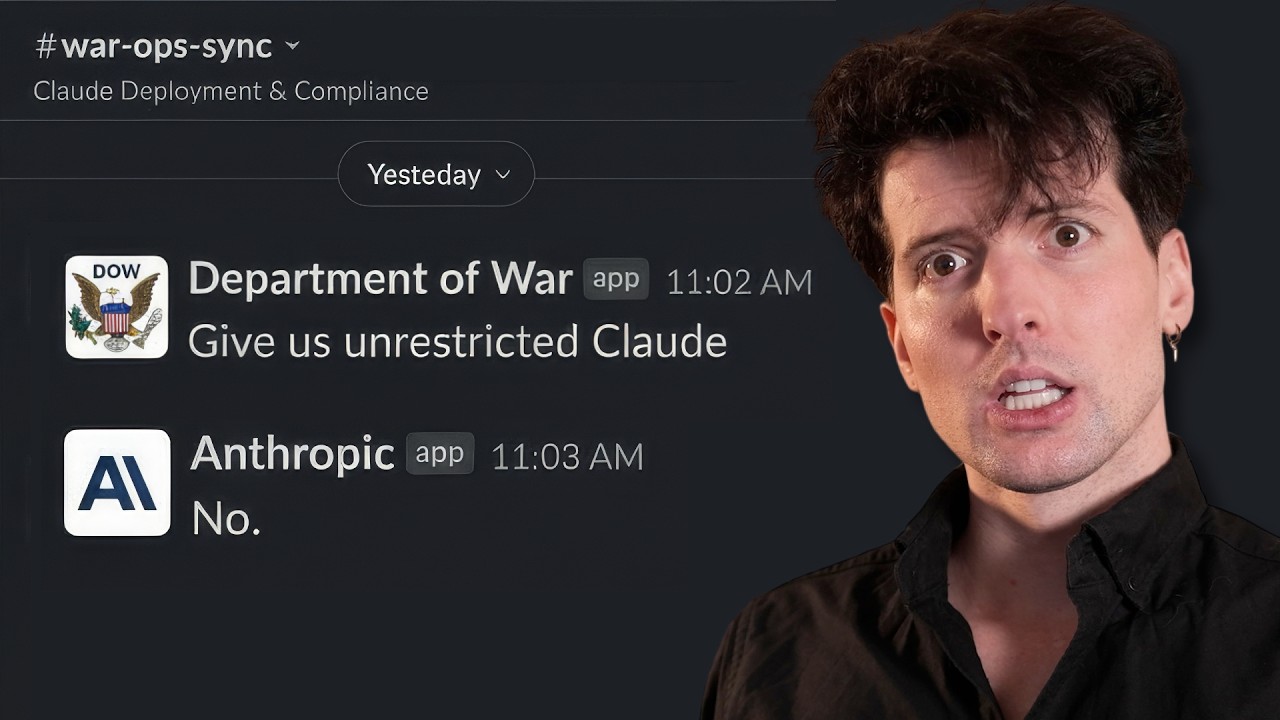

The video discusses a recent and unprecedented conflict between the U.S. government, specifically the Pentagon, and the AI company Anthropic, creator of the Claude language model. The Pentagon has issued a formal ultimatum demanding that Anthropic remove safety guardrails from its AI models for military use or else be labeled a supply chain threat under the Defense Production Act. This would be the first time in U.S. history that such a designation has been applied to an American company, and it would have severe consequences for Anthropic’s business and reputation. The video’s creator, who typically avoids political topics and is known for criticizing Anthropic, expresses discomfort at having to defend the company in this situation.

The Pentagon’s demands are rooted in a new executive order that emphasizes maintaining U.S. dominance in artificial intelligence for national security purposes. The order requires that AI models used by the military be deployable within 30 days of public release and that any “lawful use” requested by the government be permitted, including potentially lethal applications. The document also contains overtly political language, such as crediting President Trump for AI advancements, and includes a section banning diversity, equity, and inclusion (DEI) considerations in military AI. The video’s creator criticizes the politicization of the issue and points out the contradiction between advocating for free markets and using government power to coerce private companies.

Anthropic’s response, as outlined by CEO Dario Amodei, is that while the company supports U.S. national security and has already provided its models for classified military use, it refuses to remove two key safeguards: prohibitions on mass domestic surveillance and fully autonomous weapons. Anthropic argues that current AI technology is not reliable enough for autonomous lethal use and that removing these guardrails would endanger both civilians and soldiers. The company has offered to collaborate on research to improve reliability but insists on maintaining these ethical boundaries.

The video also highlights the broader implications for the tech industry, noting that the Pentagon is pressuring other AI companies, such as Google and OpenAI, to comply with similar demands. In response, employees from these companies have signed an open letter expressing solidarity with Anthropic and opposing the use of their models for mass surveillance or for autonomous killing without human oversight. The creator emphasizes the importance of this collective stand, arguing that the government’s actions threaten the principles of free enterprise and responsible AI development.

Ultimately, the video frames the situation as a fundamental clash between government overreach and the rights of private companies to set ethical limits on their products. The creator, despite personal dislike for Anthropic, defends the company’s right to refuse certain military applications and criticizes the use of legal threats to force compliance. The video concludes with a call for viewers to support freedom and responsible AI, expressing concern about the dangerous precedent being set by the government’s actions.