The video reveals a critical remote code execution vulnerability in SGLang, an inference engine for large language models. The flaw is caused by unsandboxed template rendering in its reranking feature and lets attackers execute arbitrary code via malicious model files. The video urges AI operators to carefully vet self-hosted models and to implement sandboxing measures to prevent supply chain attacks and keep deployments secure.

The video discusses a newly discovered critical remote code execution (RCE) vulnerability in SGLang, an inference engine used to optimize large language model (LLM) deployments. SGLang helps companies manage hardware resources such as GPUs and VRAM efficiently by caching and organizing conversation memory, so that a single GPU can process prompts from multiple users faster. Although SGLang is relatively new, it has quickly gained popularity and is used in large-scale production environments, powering trillions of tokens daily across hundreds of thousands of GPUs worldwide.

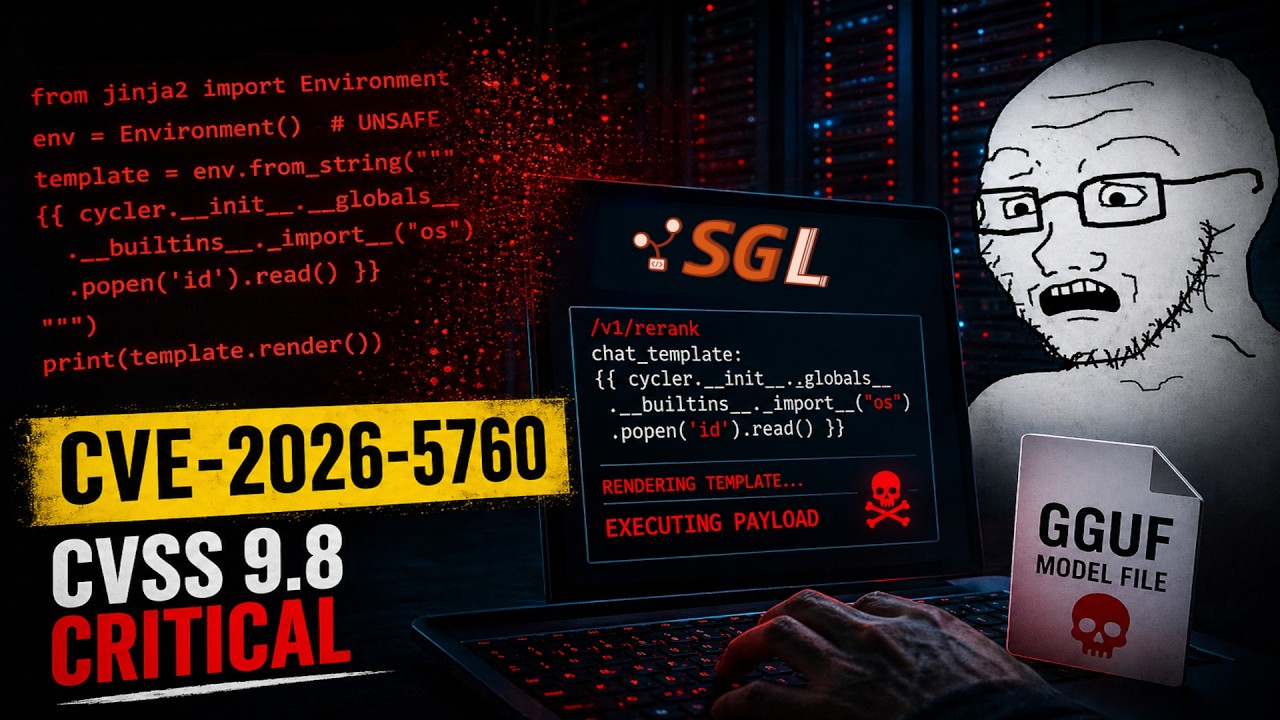

The vulnerability is a server-side template injection (SSTI) flaw in SGLang's reranking feature. The reranking endpoint uses a cross-encoder model to rank documents by relevance, and in doing so it renders a chat template supplied by the model itself. That template is rendered with the Jinja2 templating engine without sandboxing, so malicious template expressions embedded in the model's metadata can execute arbitrary Python code on the server. The exploit is triggered when the victim's server loads a malicious GGUF model file containing a crafted tokenizer chat template.
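To make the mechanism concrete, the following is a minimal and deliberately harmless sketch of what unsandboxed Jinja2 rendering permits. It is not SGLang's code: the function name `render_unsandboxed` and the `PAYLOAD` string are hypothetical, and the payload only counts Python classes instead of running a command. It assumes the `jinja2` package is installed.

```python
from jinja2 import Environment

# Hypothetical, harmless stand-in for a malicious chat template.
# It walks from an empty tuple to `object` and counts its subclasses,
# which shows that the template can reach Python internals.
PAYLOAD = "{{ ().__class__.__base__.__subclasses__() | length }}"


def render_unsandboxed(template_source: str) -> str:
    """Render a template with Jinja2's default (unsandboxed) environment."""
    env = Environment()  # no sandbox: attribute traversal is unrestricted
    return env.from_string(template_source).render()


if __name__ == "__main__":
    # Prints the number of classes reachable from `object`.
    print(render_unsandboxed(PAYLOAD))
```

A real attack would continue the same attribute chain until it reaches something that can run a command, which is why a template shipped inside a model file has to be treated as untrusted code.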

Attackers can exploit this vulnerability by tricking operators into downloading and loading a compromised model from a public repository or model hub. This is plausible given the fast pace of AI deployment and the common practice of experimenting with new models. Once the malicious model is loaded, the payload stays dormant until the reranking pipeline processes a request, at which point the unsandboxed Jinja2 engine executes the embedded code. The result can be full server compromise, enabling data exfiltration, lateral movement within the network, or denial-of-service attacks.

The video emphasizes that the root cause is that SGLang renders templates with Jinja2 without sandboxing. A straightforward mitigation would be to replace the default unsandboxed Jinja2 environment with a sandboxed one, which prevents templates from reaching dangerous objects through the template context. This approach is similar to fixes applied to other AI-related RCE vulnerabilities, such as the patched CVE-2024-34359 in the `llama-cpp-python` package. However, as of the video's release, SGLang has not yet patched this critical issue.
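The mitigation described above can be sketched with Jinja2's built-in sandbox. This is an illustration under stated assumptions, not the actual SGLang patch: the helper names `render_chat_template` and `is_rejected` and both template strings are hypothetical. `ImmutableSandboxedEnvironment` and `SecurityError` are part of Jinja2 itself.

```python
from jinja2.exceptions import SecurityError
from jinja2.sandbox import ImmutableSandboxedEnvironment

# One shared sandboxed environment for all model-supplied templates.
_SANDBOX = ImmutableSandboxedEnvironment()

# Hypothetical templates used only for this illustration.
BENIGN_TEMPLATE = "Query: {{ query }}\nDocument: {{ document }}"
PAYLOAD = "{{ ().__class__.__base__.__subclasses__() | length }}"


def render_chat_template(template_source: str, **context) -> str:
    """Render an untrusted chat template inside the Jinja2 sandbox."""
    return _SANDBOX.from_string(template_source).render(**context)


def is_rejected(template_source: str) -> bool:
    """Return True if the sandbox refuses to evaluate the template."""
    try:
        render_chat_template(template_source)
    except SecurityError:
        return True
    return False


if __name__ == "__main__":
    print(render_chat_template(BENIGN_TEMPLATE, query="q", document="d"))
    print("payload rejected:", is_rejected(PAYLOAD))
```

Ordinary templates render as before, while the attribute traversal that underpins typical SSTI payloads raises `SecurityError` instead of being evaluated. The immutable variant additionally blocks templates from modifying lists and dictionaries passed into the context.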

Finally, the video advises caution when deploying AI models, especially self-hosted ones, urging operators to thoroughly vet models before use to avoid supply chain attacks. While self-hosting AI models offers benefits like cost savings, better data security, and control compared to third-party APIs, it also introduces risks if security best practices are not followed. The video concludes by encouraging viewers to prioritize security over rapid deployment and to stay informed about vulnerabilities in AI infrastructure.
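As one small part of vetting a model, an operator could inspect its chat template for constructs that have no place in a prompt format before loading it. The sketch below is a rough heuristic under stated assumptions: the pattern list and the function name `find_suspicious_tokens` are hypothetical, the template is assumed to have already been extracted from the model's metadata as a string, and a clean result does not prove a template is safe. It complements sandboxing and does not replace it.

```python
import re

# Hypothetical heuristic patterns; a real review would go further.
SUSPICIOUS_PATTERNS = [
    r"__\w+__",  # dunder attribute access such as __class__ or __globals__
    r"\b(?:os|subprocess|popen|eval|exec|import)\b",  # code-execution helpers
]


def find_suspicious_tokens(chat_template: str) -> list[str]:
    """Return the sorted, de-duplicated suspicious tokens found in a template."""
    hits = set()
    for pattern in SUSPICIOUS_PATTERNS:
        hits.update(m.group(0) for m in re.finditer(pattern, chat_template))
    return sorted(hits)


if __name__ == "__main__":
    benign = "Query: {{ query }}\nDocument: {{ document }}"
    crafted = "{{ cycler.__init__.__globals__.os.popen('id').read() }}"
    print(find_suspicious_tokens(benign))
    print(find_suspicious_tokens(crafted))
```

Any hit is a reason to stop and review the model by hand before it goes anywhere near a production server.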