Transformers are replacing CNNs in image classification because their self-attention mechanism captures global context and complex interactions more effectively than CNNs’ local convolutional filters, leading to better accuracy and flexibility. Additionally, advances in hardware and the ability of transformers to handle multimodal data have made them more practical and versatile than CNNs, which are limited by their specialized, local processing.

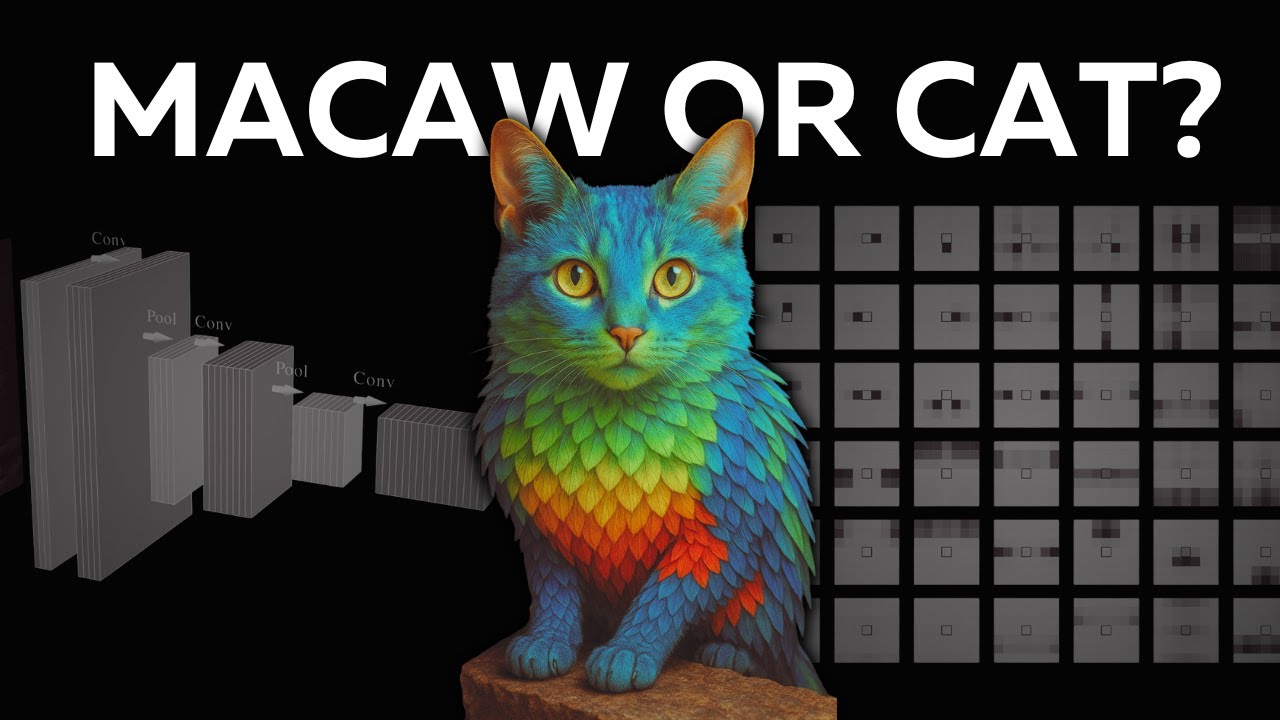

The video explores why transformers are replacing convolutional neural networks (CNNs) in image classification, despite CNNs being specifically designed for image processing. It begins by comparing two classifiers: Microsoft’s ResNet, a CNN, and Google’s Vision Transformer (ViT). When shown an image of a cat with colorful parakeet feathers, ResNet misclassifies it as a macaw due to its focus on texture, while ViT correctly identifies it as an Egyptian cat. This difference stems from the fundamental architectural distinctions between CNNs and transformers.

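CNNs rely on convolutional layers in which small filters (kernels) slide over the image to detect local features such as edges and textures. Each kernel only sees pixels within a small neighborhood, which builds in three assumptions: locality, translation equivariance (the same feature is detected wherever it appears, with pooling adding some invariance on top), and a hierarchical structure in which later layers combine earlier features. Pooling layers reduce spatial dimensions to encourage abstraction. This inductive bias helps CNNs excel at recognizing local patterns. However, each layer widens the receptive field by only a few pixels, so global context emerges only after many layers. As a result, CNNs sometimes misclassify an image when its texture conflicts with the overall object structure, as in the cat example above.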
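To make "local filter" and "limited receptive field" concrete, here is a minimal sketch in plain Python. It assumes a single-channel image, stride 1, no padding, and no pooling. Like deep-learning frameworks, it computes cross-correlation, which is what CNN layers actually apply. The kernel and toy image are illustrative choices, not taken from ResNet.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 2D cross-correlation, as in a CNN conv layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Each output value only sees a kh x kw neighbourhood of the input.
            s = 0.0
            for di in range(kh):
                for dj in range(kw):
                    s += image[i + di][j + dj] * kernel[di][dj]
            row.append(s)
        out.append(row)
    return out


def receptive_field(num_layers, kernel_size=3):
    """Receptive field (in pixels) of stacked stride-1 convs without pooling."""
    return 1 + num_layers * (kernel_size - 1)


def layers_to_cover(width, kernel_size=3):
    """Smallest number of stacked convs whose receptive field spans `width` pixels."""
    layers = 0
    while receptive_field(layers, kernel_size) < width:
        layers += 1
    return layers


# A vertical-edge kernel applied to an image that goes dark -> bright at column 3.
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]
edges = conv2d(image, kernel)

print(edges)                 # [[0.0, 3.0, 3.0, 0.0], [0.0, 3.0, 3.0, 0.0], [0.0, 3.0, 3.0, 0.0]]
print(layers_to_cover(224))  # 112
```

The kernel responds only where the edge falls inside its 3x3 window. Under these assumptions, 112 stacked 3x3 layers are needed before one output pixel "sees" the full width of a 224-pixel image. Real CNNs such as ResNet add striding and pooling, which widen the receptive field much faster. The number therefore illustrates the trend rather than a depth any real network uses.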

Transformers, on the other hand, use self-attention. Each pixel's query is compared against the keys of every other pixel in the image simultaneously, and the resulting weights mix those pixels' values. This global receptive field lets transformers capture long-range dependencies in a single layer, whereas CNNs need many stacked layers to propagate information between distant pixels. Self-attention also has a weaker inductive bias. With enough attention heads and suitable positional encodings, it can learn convolution-like operations, but it can also represent far more complex, content-dependent interactions. In this sense it generalizes convolution.
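The following is a minimal single-head sketch of scaled dot-product self-attention over "pixel" tokens, written in plain Python. The 4x4 image size, 4-dimensional features, and random weights are assumptions chosen for illustration. Positional encodings and multiple heads are omitted. The point is the shape of the computation: an n x n score matrix, so every output row can depend on every input pixel in one layer.

```python
import math
import random


def matmul(a, b):
    """Plain matrix product of a (n x k) and b (k x m)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over n tokens.

    Every token's query is scored against every token's key, so each
    output row mixes information from all n inputs in a single layer.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    k_t = [list(col) for col in zip(*k)]
    scale = math.sqrt(len(k[0]))
    scores = matmul(q, k_t)                                  # n x n scores
    weights = [softmax([s / scale for s in row]) for row in scores]
    return matmul(weights, v), weights


def rand_matrix(rows, cols, rng):
    return [[rng.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
n, d = 16, 4                 # 16 "pixel" tokens (a flattened 4x4 image), 4 features each
x = rand_matrix(n, d, rng)
w_q, w_k, w_v = (rand_matrix(d, d, rng) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)

# Perturb only the last pixel, the corner of the 4x4 grid farthest from pixel 0.
x_far = [row[:] for row in x]
x_far[-1] = [val + 1.0 for val in x_far[-1]]
out_far, _ = self_attention(x_far, w_q, w_k, w_v)

print(len(weights), len(weights[0]))   # size of the attention matrix (n x n)
print(out[0] != out_far[0])            # does pixel 0's output react to the far corner?
```

By contrast, a single 3x3 convolution centred on pixel 0 could not react to a change at the opposite corner of the grid. The n x n score matrix is also why attention cost grows quadratically with the number of pixels.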

Two additional factors contributed to transformers overtaking CNNs in computer vision. First, hardware accelerators built for large matrix multiplications, such as Nvidia’s tensor cores and Google’s TPUs, became widely available around 2017. Transformers map naturally onto this hardware because self-attention is computed almost entirely as large matrix multiplications. CNNs, constrained by local receptive fields, must pass information across the image through many sequential layers, so they cannot parallelize this information flow as effectively. Second, transformers support multimodality: text, images, audio, and video can all be handled within a unified architecture, whereas CNNs are specialized for images and struggle to generalize across modalities.
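One way to picture the "unified architecture" is a minimal sketch in plain Python. It assumes a ViT-style patch embedding for images and a lookup-table embedding for text. The token width `D_MODEL`, the image size, the vocabulary size, the token ids, and the weights are all hypothetical. Real multimodal models also add positional and modality embeddings, which are omitted here. Once both inputs are rows of the same width, the same attention layers can process them as one sequence. Embedding every patch is a single matrix multiplication, the kind of operation tensor cores and TPUs accelerate.

```python
import random

D_MODEL = 8  # shared token width (hypothetical)


def rand_matrix(rows, cols, rng):
    return [[rng.gauss(0, 0.02) for _ in range(cols)] for _ in range(rows)]


def matmul(a, b):
    """Plain matrix product of a (n x k) and b (k x m)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def patchify(image, p):
    """Split an H x W grayscale image into non-overlapping p x p patches, each flattened.

    Assumes H and W are divisible by p.
    """
    h, w = len(image), len(image[0])
    patches = []
    for i in range(0, h, p):
        for j in range(0, w, p):
            patches.append([image[i + di][j + dj] for di in range(p) for dj in range(p)])
    return patches


rng = random.Random(0)

# Image branch: 8x8 image -> four 4x4 patches -> one matmul into D_MODEL.
image = [[rng.random() for _ in range(8)] for _ in range(8)]
patches = patchify(image, 4)                     # 4 patches x 16 values
w_patch = rand_matrix(16, D_MODEL, rng)
image_tokens = matmul(patches, w_patch)          # 4 tokens x D_MODEL

# Text branch: token ids -> rows of an embedding table of width D_MODEL.
vocab_size = 100
embedding = rand_matrix(vocab_size, D_MODEL, rng)
text_ids = [5, 42, 7]                            # hypothetical token ids
text_tokens = [embedding[t] for t in text_ids]   # 3 tokens x D_MODEL

# A single sequence that the same transformer layers can attend over.
sequence = image_tokens + text_tokens
print(len(sequence), {len(tok) for tok in sequence})
```

Nothing downstream of `sequence` needs to know which rows came from pixels and which from words. A CNN has no equally natural way to absorb the text branch.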

The video concludes by acknowledging the computational challenge of applying self-attention at the raw pixel level. Because every pixel attends to every other pixel, the cost grows quadratically with the number of pixels, which makes it expensive and impractical for large images. The video points to modern architectures such as Vision Transformers and diffusion transformers, which make self-attention tractable in real-world applications (ViT, for example, attends over patches of pixels rather than individual pixels). Overall, the generality of attention, advances in hardware, and the need for multimodal models together explain why transformers are replacing CNNs in computer vision.
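The quadratic cost is easy to quantify with a back-of-the-envelope sketch. It assumes a 224x224 input, one attention head, one image, and 4-byte (32-bit float) scores, and it compares one token per pixel with ViT-style 16x16 patches. These are common ViT settings used here as assumptions, not figures from the video.

```python
def attention_cost(height, width, patch=1, bytes_per_score=4):
    """Token count, attention-score count, and score memory for one head on one image.

    patch=1 means one token per pixel; patch=p means one token per p x p patch
    (ViT-style). Assumes height and width are divisible by patch.
    """
    tokens = (height // patch) * (width // patch)
    scores = tokens * tokens          # every token attends to every token
    return tokens, scores, scores * bytes_per_score


for label, patch in [("per pixel", 1), ("16x16 patches", 16)]:
    tokens, scores, nbytes = attention_cost(224, 224, patch)
    print(f"{label:>13}: {tokens:>6,} tokens, {scores:>13,} scores, {nbytes / 1e9:.4f} GB")
```

Pixel-level attention needs 50,176 tokens and about 2.5 billion scores, roughly 10 GB per head per image. With 16x16 patches the image becomes 196 tokens and 38,416 scores. Cutting the token count by 256x shrinks the score matrix by 256² = 65,536x, which is why patching makes attention practical.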