Phil Hetzel from Braintrust explains that building effective evaluation platforms for AI agents is challenging due to the complexity of managing large, semi-structured trace data and the need for collaborative, scalable systems that integrate offline testing with live production feedback. He emphasizes that overcoming these technical and organizational hurdles is crucial for ensuring reliable agent performance, continuous improvement, and addressing advanced requirements like security and unknown failure modes.

Phil Hetzel, lead of solutions engineering at Braintrust, opens his talk by describing his background and explaining why evaluation (eval) platforms matter for large language models (LLMs) and AI agents. He notes that LLMs are highly versatile and that agents are becoming the norm for customer interactions, so ensuring these agents perform reliably in production is critical to avoid risks to brand reputation, compliance, and operational costs. Phil emphasizes that evals are essential both for building confidence in agent performance before deployment and for maintaining that confidence once agents are live.

Phil outlines the common starting point for many teams building eval platforms: using spreadsheets to document agent outputs and scores. While this approach is accessible and a good initial step, it quickly becomes cumbersome and limited, especially as teams grow and require more collaboration. He highlights that evals are inherently a team sport involving engineers, domain experts, and non-technical stakeholders, which spreadsheets do not adequately support. As a result, many teams move towards building bespoke UIs and databases to better manage eval data and involve more users, though these solutions often remain more about documentation than true experimentation.

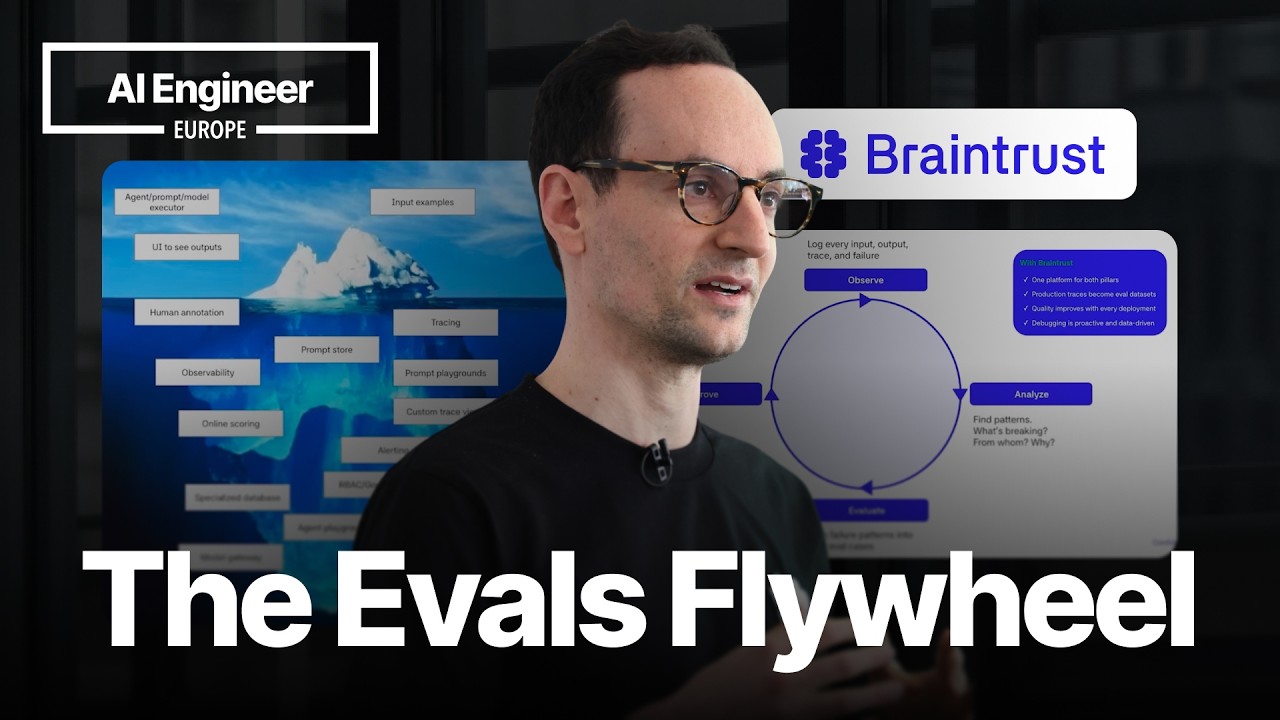
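To make the shift from spreadsheet rows to a dedicated eval store concrete, here is a minimal Python sketch of a structured eval record. The `EvalRecord` class, its fields, and the simple averaging rule are hypothetical illustrations, not Braintrust's schema. The point is that keying scores by reviewer lets engineers and domain experts grade the same output side by side, which a single spreadsheet cell handles poorly.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from statistics import mean


@dataclass
class EvalRecord:
    """One graded agent output: a structured replacement for a spreadsheet row."""
    case_id: str
    input: str
    output: str
    expected: str | None = None
    # Scores keyed by reviewer, so several people can grade the same output.
    scores: dict[str, float] = field(default_factory=dict)
    comments: list[str] = field(default_factory=list)

    def add_review(self, reviewer: str, score: float, comment: str = "") -> None:
        """Record (or overwrite) one reviewer's score in [0, 1] and an optional note."""
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score must be in [0, 1], got {score}")
        self.scores[reviewer] = score
        if comment:
            self.comments.append(f"{reviewer}: {comment}")

    def consensus(self) -> float | None:
        """Average across reviewers, or None if nobody has graded this yet."""
        return mean(self.scores.values()) if self.scores else None


record = EvalRecord("case-1", "Where is my order?", "It shipped yesterday.")
record.add_review("engineer", 0.5)
record.add_review("support_lead", 1.0, "Tone is right")
print(record.consensus())  # 0.75
```

A record like this is still documentation rather than experimentation; it only becomes useful for comparing agent versions once records are tied to the configuration that produced them.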

The next stage Phil describes is enabling experimentation through more interactive platforms, often featuring playgrounds where users can tweak agent parameters and compare different configurations side by side. This phase is crucial for identifying failure modes and developing scoring functions that reflect real-world agent behavior. He stresses the importance of feeding production trace data (real user interactions with agents) back into the eval process, creating a feedback loop that continuously improves agent quality. This loop connects offline evals with live observability, allowing teams to monitor and refine agents throughout their lifecycle.
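The experiment-plus-feedback loop can be sketched in a few dozen lines. Everything here is hypothetical: `make_agent` is a keyword-lookup stand-in for an LLM agent whose `knowledge` dict plays the role of a tunable configuration, `exact_match` is the simplest possible scoring function, and `promote_failures` assumes production traces carry a thumbs-up/thumbs-down signal. Real platforms would typically use model-graded or domain-specific scorers instead.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean
from typing import Callable

Agent = Callable[[str], str]
# Hypothetical scorer signature: (input, output, expected) -> score in [0, 1]
Scorer = Callable[[str, str, str], float]


def make_agent(knowledge: dict[str, str], fallback: str = "I'm not sure.") -> Agent:
    """Stand-in for an LLM agent; `knowledge` plays the role of a tunable config."""
    def agent(question: str) -> str:
        for keyword, answer in knowledge.items():
            if keyword in question.lower():
                return answer
        return fallback
    return agent


def exact_match(inp: str, output: str, expected: str) -> float:
    """Simplest scorer: 1.0 on a case-insensitive exact match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_experiment(agent: Agent, dataset: list[dict], scorers: dict[str, Scorer]) -> dict[str, float]:
    """Run one agent config over every labeled case and average each scorer."""
    labeled = [case for case in dataset if case.get("expected") is not None]
    results: dict[str, list[float]] = {name: [] for name in scorers}
    for case in labeled:
        output = agent(case["input"])
        for name, scorer in scorers.items():
            results[name].append(scorer(case["input"], output, case["expected"]))
    return {name: mean(scores) for name, scores in results.items() if scores}


@dataclass
class ProductionTrace:
    input: str
    output: str
    feedback: int  # hypothetical user signal: +1 thumbs up, -1 thumbs down


def promote_failures(traces: list[ProductionTrace], dataset: list[dict]) -> int:
    """Feedback loop: copy new thumbs-down production inputs into the offline dataset."""
    known = {case["input"] for case in dataset}
    added = 0
    for trace in traces:
        if trace.feedback < 0 and trace.input not in known:
            # Left unlabeled until a domain expert supplies the expected answer.
            dataset.append({"input": trace.input, "expected": None})
            known.add(trace.input)
            added += 1
    return added


dataset = [
    {"input": "How long do refunds take?", "expected": "Refunds take 5 days."},
    {"input": "Do you ship abroad?", "expected": "We ship to 40 countries."},
]
v1 = make_agent({"refund": "Refunds take 5 days."})
v2 = make_agent({"refund": "Refunds take 5 days.", "ship": "We ship to 40 countries."})
scorers = {"exact_match": exact_match}
print(run_experiment(v1, dataset, scorers))  # {'exact_match': 0.5}
print(run_experiment(v2, dataset, scorers))  # {'exact_match': 1.0}

traces = [ProductionTrace("Can I change my address?", "I'm not sure.", -1)]
print(promote_failures(traces, dataset))  # 1
```

Promoted cases arrive without an `expected` answer, so `run_experiment` skips them until someone labels them; that human step is where the team-sport aspect of evals reappears.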

Phil then delves into the technical challenges of building scalable eval and observability platforms. Agent traces are complex, large, and semi-structured, making them difficult to store and analyze with traditional tools. Braintrust initially used a combination of open-source data warehouses and custom query languages but found these inadequate for the volume and semi-structured nature of trace data, especially for full-text search and low-latency queries. He underscores that building an effective eval platform is fundamentally a systems engineering problem, requiring data infrastructure that supports diverse query patterns and high-velocity ingestion.
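To illustrate why semi-structured traces call for full-text search, the sketch below models a trace as a tree of spans with arbitrary nested payloads and builds a toy in-memory inverted index over every leaf value. `Span`, `SpanIndex`, and the tokenizer are assumptions for illustration only. This is not how Braintrust stores traces, and an in-memory dictionary would not survive the data volumes and latency targets described above.

```python
from __future__ import annotations

import re
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Any

TOKEN = re.compile(r"[a-z0-9]+")


@dataclass
class Span:
    """One step of an agent trace; spans link to parents to form a tree."""
    trace_id: str
    span_id: str
    parent_id: str | None
    name: str
    # Semi-structured payload: arbitrary nested data (prompts, tool args, outputs).
    payload: dict[str, Any] = field(default_factory=dict)


def leaf_text(value: Any) -> list[str]:
    """Recursively collect every scalar leaf of a nested payload as a string."""
    if isinstance(value, dict):
        return [t for v in value.values() for t in leaf_text(v)]
    if isinstance(value, (list, tuple)):
        return [t for v in value for t in leaf_text(v)]
    return [] if value is None else [str(value)]


class SpanIndex:
    """Toy in-memory inverted index: token -> set of (trace_id, span_id)."""

    def __init__(self) -> None:
        self.postings: dict[str, set[tuple[str, str]]] = defaultdict(set)
        self.spans: dict[tuple[str, str], Span] = {}

    def add(self, span: Span) -> None:
        key = (span.trace_id, span.span_id)
        self.spans[key] = span
        for text in leaf_text(span.payload) + [span.name]:
            for token in TOKEN.findall(text.lower()):
                self.postings[token].add(key)

    def search(self, query: str) -> list[Span]:
        """Return spans whose name or payload contains every query token (AND)."""
        tokens = TOKEN.findall(query.lower())
        if not tokens:
            return []
        keys = set.intersection(*(self.postings.get(t, set()) for t in tokens))
        return [self.spans[k] for k in sorted(keys)]


index = SpanIndex()
index.add(Span("t1", "s1", None, "agent_run", {"input": "Cancel my subscription"}))
index.add(Span("t1", "s2", "s1", "tool_call",
               {"tool": "billing_api", "args": {"action": "cancel"}, "error": "timeout"}))
index.add(Span("t2", "s1", None, "agent_run", {"input": "Update billing address"}))
print([(s.trace_id, s.span_id) for s in index.search("billing timeout")])  # [('t1', 's2')]
```

Even in this toy, every token of every payload becomes a posting, so index size grows with the total text logged rather than with the number of traces. At production scale, that is what turns storage, compaction, and low-latency query paths into a systems engineering problem.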

Finally, Phil touches on future directions and advanced features for eval platforms, such as uncovering unknown unknowns through topic modeling, supporting agent-driven workflows, and implementing non-functional requirements like role-based access control and data masking. He also mentions the potential for automatic tracing via AI proxies to ensure comprehensive data collection. Throughout, Phil stresses that while building eval platforms is challenging, it is essential for delivering high-quality AI agents, and Braintrust is actively working to solve these problems at scale.
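Two of these features, data masking and automatic tracing, compose naturally: whatever intercepts model calls can also scrub sensitive values before they reach the trace store. The sketch below approximates that with an in-process decorator rather than a network-level AI proxy. The regex patterns, `TRACE_LOG`, and `call_model` are hypothetical placeholders, and regex-based masking is a simplification that would miss many kinds of sensitive data.

```python
from __future__ import annotations

import functools
import re
import time
from typing import Any, Callable

# Hypothetical masking rules; real deployments need far more robust PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b\d{13,16}\b")  # crude stand-in for payment card numbers

TRACE_LOG: list[dict[str, Any]] = []  # stand-in for the trace store


def mask(text: str) -> str:
    """Replace sensitive substrings before anything is persisted."""
    return CARD.sub("[CARD]", EMAIL.sub("[EMAIL]", text))


def traced(fn: Callable[[str], str]) -> Callable[[str], str]:
    """Log every call's masked input/output and latency, as a tracing proxy might."""
    @functools.wraps(fn)
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        output = fn(prompt)
        TRACE_LOG.append({
            "name": fn.__name__,
            "input": mask(prompt),
            "output": mask(output),
            "latency_s": time.perf_counter() - start,
        })
        return output  # the caller still gets the unmasked result
    return wrapper


@traced
def call_model(prompt: str) -> str:
    # Placeholder for a real LLM request; echoes the last word of the prompt.
    return f"Receipt sent to {prompt.split()[-1]}"


call_model("Email my receipt to jane@example.com")
print(TRACE_LOG[-1]["input"])   # Email my receipt to [EMAIL]
print(TRACE_LOG[-1]["output"])  # Receipt sent to [EMAIL]
```

Only the stored copy is scrubbed. A proxy would typically perform the same interception at the HTTP layer, so application code would not need a decorator at all.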