Fabio Akita argues that unsatisfactory results from large language models (LLMs) stem from users’ poor communication and vague instructions rather than the models themselves, emphasizing the need for clear, detailed prompts and iterative collaboration akin to human pair programming. He highlights that effective AI use requires upfront context-setting and active engagement, as LLMs lack innate understanding and rely entirely on the quality of user input to deliver meaningful outcomes.

Why LLMs Aren't Giving You the Result You Expect | Why I Prefer Claude Code Today – AkitaOnRails.com

The article “Why LLMs Aren’t Giving You the Result You Expect | Why I Prefer Claude Code Today” by Fabio Akita explores the common frustrations users face when working with LLM-based coding tools such as Claude Code and Codex. Akita argues that the root cause of unsatisfactory results is not the models themselves but poor communication from users. Many users fail to state their goals, constraints, and validation criteria clearly, which leads to misunderstandings and suboptimal output. The problem is not new: it reflects a longstanding issue in software development and team communication, now amplified by the speed of AI tools and the expectations placed on them.

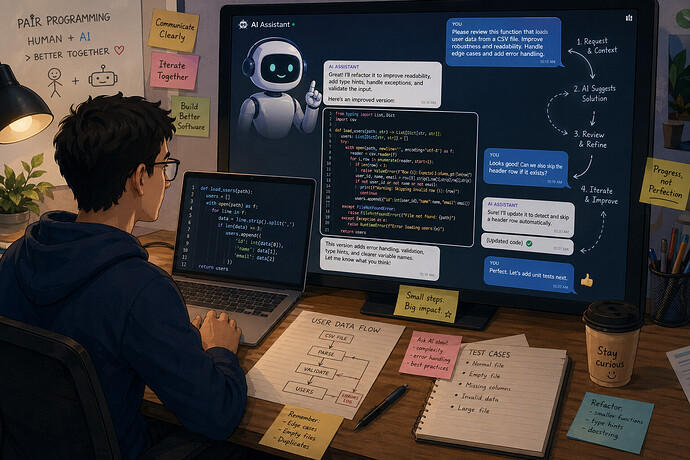

Akita shares his approach to interacting with LLMs, treating them like human pair programming partners. He stresses the importance of explicitly stating what he wants, what he does not want, how the task should be done, and how success will be validated. Using a detailed example of organizing a massive collection of ROM files, he illustrates how providing comprehensive context, constraints, and iterative feedback leads to reliable and accurate results. This method involves continuous engagement, monitoring progress, and adjusting instructions as needed, rather than expecting a perfect solution from a single prompt.
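The four elements above (what is wanted, what is not wanted, how to do it, and how success is validated) can be sketched as a small checklist that gets filled in before a prompt is sent. This is only an illustration, not Akita's actual tooling or prompt: the `TaskBrief` class, its field names, and the example ROM-sorting content are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TaskBrief:
    """Hypothetical container for the four things the article says to state."""

    goal: str                                             # what I want
    non_goals: List[str] = field(default_factory=list)    # what I do not want
    approach: List[str] = field(default_factory=list)     # how it should be done
    validation: List[str] = field(default_factory=list)   # how success is checked

    def missing_sections(self) -> List[str]:
        """Return the names of empty sections so gaps are visible before sending."""
        sections = {
            "goal": [self.goal] if self.goal.strip() else [],
            "non_goals": self.non_goals,
            "approach": self.approach,
            "validation": self.validation,
        }
        return [name for name, items in sections.items() if not items]

    def render(self) -> str:
        """Format the brief as plain text that can be pasted into a prompt."""

        def bullets(title: str, items: List[str]) -> str:
            body = [f"- {item}" for item in items]
            if not body:
                body = ["- (not specified)"]
            return "\n".join([f"{title}:"] + body)

        return "\n\n".join(
            [
                f"Goal: {self.goal}",
                bullets("Do not", self.non_goals),
                bullets("Approach", self.approach),
                bullets("Validation", self.validation),
            ]
        )


# Illustrative content only; the details below are assumptions, not from the article.
brief = TaskBrief(
    goal="Organize a large directory of ROM files into one folder per system.",
    non_goals=["Do not delete or overwrite any original file."],
    approach=["Work on a copy first.", "Report progress after each batch."],
    validation=["File count before and after must match."],
)

if __name__ == "__main__":
    print(brief.missing_sections())  # empty list when every section is filled in
    print(brief.render())
```

A brief with only a goal would report `non_goals`, `approach`, and `validation` as missing, which is roughly the situation the article describes when a one-line prompt produces a disappointing result.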

The article highlights that effective communication with LLMs requires upfront investment in context-setting. Once the model understands the environment and goals, Akita shifts from prescribing exact solutions to asking for suggestions and alternatives, leveraging the AI’s broad knowledge and creativity. This collaborative dynamic mirrors how he would work with a skilled human developer, emphasizing that the AI’s value lies in accelerating iterative development rather than replacing human insight or decision-making.

Akita also addresses a common misconception that AI should “figure things out” independently. He clarifies that LLMs know nothing about the user’s specific domain or environment unless that information is explicitly provided, so the quality of the output depends directly on the quality and detail of the prompt. Users who provide vague or minimal instructions and then blame the AI for poor results misunderstand the nature of these tools. The article asserts that AI will not replace good professionals who know how to frame problems and validate solutions, but it will replace those who cannot communicate their needs effectively.

Finally, Akita uses the metaphor of Tony Stark and Jarvis from the Marvel Cinematic Universe to illustrate the relationship between human expertise and AI assistance. Just as Jarvis cannot build the Iron Man suit without Stark’s vision and iterative input, AI models require skilled human guidance to produce meaningful outcomes. He concludes by explaining the significance of his company’s name, Codeminer42, which references Douglas Adams’ “The Hitchhiker’s Guide to the Galaxy”: a correct answer is meaningless without a well-formed question. The article encourages readers to focus on sharpening their questions to get the best results from AI.